Yesterday, Apple unveiled the iPhone 11, iPhone 11 Pro, and iPhone 11 Pro Max. Though largely iterative, the new phone lineup still has a few interesting tidbits technologists need to be aware of.

We won’t get knee-deep in comparing and contrasting phones. Instead, we’re going to focus on what you need to know to optimize your apps and services.

Goodbye, 3D Touch

3D Touch is no more. The under-utilized feature that has been lingering since the iPhone 6S (2015!) is officially dead.

None of the three latest iPhones has 3D Touch screen technology. This is not only a slight modification in how we’re meant to interact with the devices, but a change in how Apple manufactures iPhones: 3D Touch required a layer of pressure-sensitive ribbons to be sandwiched within the screen itself, which was another step in an already tedious manufacturing process. Now, Apple can skip that altogether.

In its place is Haptic Touch, which identifies long-presses on the screen and returns haptic feedback. It acts a lot like 3D Touch, but without pressure sensitivity: there is no pressing harder to ‘pop’ a preview open, and no using pressure to activate things such as a share menu. Haptic Touch also means you can’t force-press an app icon; a long-press now has to cover both the icon’s menu and app rearrangement.

3D Touch is still documented, as legacy hardware still accommodates the feature, but it’s now unnecessary to support moving forward. If your app uses it, now is the time to consider how to transition away from 3D Touch.
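One possible path away from 3D Touch is the context menu API in iOS 13, which is designed to respond to whichever press gesture the hardware supports. The sketch below is a minimal illustration, not a drop-in migration: `PhotoViewController`, `photoView`, and the "Share" action are hypothetical names, and it assumes an iOS 13 deployment target.

```swift
import UIKit

// Hypothetical view controller used only for illustration.
final class PhotoViewController: UIViewController, UIContextMenuInteractionDelegate {
    // Hypothetical view that previously offered a 3D Touch shortcut.
    private let photoView = UIImageView()

    override func viewDidLoad() {
        super.viewDidLoad()
        photoView.isUserInteractionEnabled = true
        view.addSubview(photoView)
        // Attach a context menu; the system decides which gesture invokes it.
        photoView.addInteraction(UIContextMenuInteraction(delegate: self))
    }

    // Called when the user invokes the menu on the view.
    func contextMenuInteraction(_ interaction: UIContextMenuInteraction,
                                configurationForMenuAtLocation location: CGPoint) -> UIContextMenuConfiguration? {
        return UIContextMenuConfiguration(identifier: nil, previewProvider: nil) { _ in
            let share = UIAction(title: "Share",
                                 image: UIImage(systemName: "square.and.arrow.up")) { _ in
                // Present a share sheet here (omitted in this sketch).
            }
            return UIMenu(title: "", children: [share])
        }
    }
}
```

Because the menu is described rather than tied to a pressure reading, the same code should serve both older 3D Touch hardware and the new Haptic Touch phones.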

‘Pro’ Means Photographers, Now

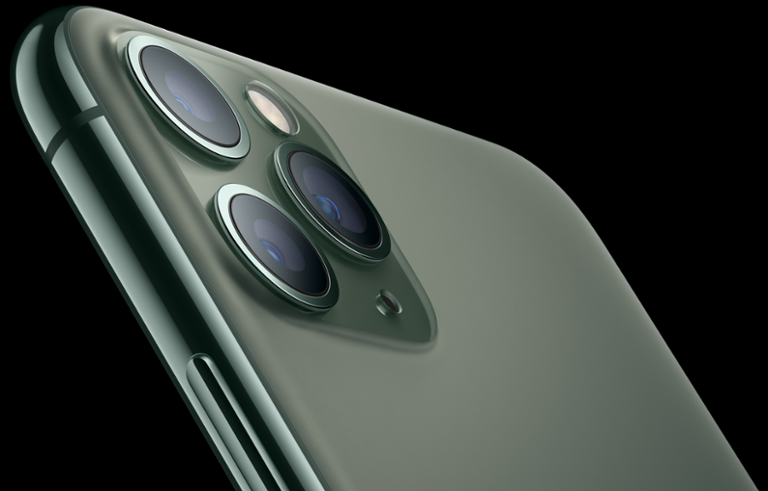

There’s a third camera on the iPhone 11 Pro and Pro Max, which means their array is as follows: ultra-wide, wide, and telephoto. The iPhone 11 has two cameras: wide and ultra-wide.

The idea is that you can zoom out, optically, to capture more of the scene. You can also zoom in like never before with the telephoto lens. The three-camera system is also going to be open to developers; Filmic is already tapping into it for its Filmic Pro app.

Video recording is also far better, as is editing of those videos. You can even switch between the cameras on the fly while recording video, now.

If your app accesses the camera at all, keep a close eye on new APIs as they become available. There’s opportunity in new features that may seem silly at first glance (slo-mo selfies, or ‘slofies,’ are just the worst thing right now) but have a way of catching on for social or other purposes. It’s also worth noting that a long-press on the photo shutter button no longer takes burst photos; now, you’ll be taking short videos.
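Since the iPhone 11 and the Pro models ship with different camera arrays, a camera app probably shouldn’t assume any particular lens exists. Here is a rough sketch of checking at runtime with AVFoundation; the function name `availableBackCameraTypes` and the helper `canCaptureFromSeveralCameras` are our own, and the code assumes iOS 13 on a real device (the simulator has no cameras).

```swift
import AVFoundation

/// Returns the back-facing camera types this device actually has,
/// out of ultra-wide, wide, and telephoto.
func availableBackCameraTypes() -> [AVCaptureDevice.DeviceType] {
    let discovery = AVCaptureDevice.DiscoverySession(
        deviceTypes: [.builtInUltraWideCamera, .builtInWideAngleCamera, .builtInTelephotoCamera],
        mediaType: .video,
        position: .back)
    return discovery.devices.map { $0.deviceType }
}

/// Reports whether the hardware can run several cameras in one session.
func canCaptureFromSeveralCameras() -> Bool {
    return AVCaptureMultiCamSession.isMultiCamSupported
}
```

An app could use the returned list to show or hide its own zoom controls, and fall back to a single-camera session when multi-camera capture is unavailable.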

‘Pro’ Also Means Machine Learning

Here are a few quotes from Apple’s iPhone 11 Pro landing page:

Two new machine learning accelerators on the CPU run matrix math computations up to six times faster, allowing the CPU to perform over one trillion operations per second.

And:

The 8‑core, Apple‑designed Neural Engine is up to 20% faster and uses up to 15% less power. It’s a driving force behind the triple‑camera system, Face ID, AR apps, and more.

Oh, also:

To help developers leverage the machine learning power of A13 Bionic, Core ML 3 works with the Machine Learning Controller to automatically direct tasks to the CPU, GPU, or Neural Engine.
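From the developer’s side, that routing is mostly a matter of not getting in the way. A minimal sketch, assuming you already have a compiled Core ML model on disk: `makeConfiguration`, `loadModel`, and the `compiledModelURL` parameter are hypothetical names of ours, while `MLModelConfiguration` and its `computeUnits` property are part of Core ML.

```swift
import CoreML

/// Builds a configuration that either lets Core ML pick among the CPU, GPU,
/// and Neural Engine, or pins work to the CPU (handy for comparisons).
func makeConfiguration(allowAccelerators: Bool) -> MLModelConfiguration {
    let configuration = MLModelConfiguration()
    configuration.computeUnits = allowAccelerators ? .all : .cpuOnly
    return configuration
}

/// Loads a compiled model (.mlmodelc) with all compute units allowed.
/// `compiledModelURL` is a placeholder for wherever your model lives.
func loadModel(at compiledModelURL: URL) throws -> MLModel {
    return try MLModel(contentsOf: compiledModelURL,
                       configuration: makeConfiguration(allowAccelerators: true))
}
```

Leaving `computeUnits` at `.all` hands the scheduling decision to the system; restricting it is mainly useful for debugging or measuring the difference.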

The entire A13 SoC was designed for machine learning processing, which currently focuses on photo and video shooting and editing. Unfortunately, rumors that the third camera and new SoC were meant for augmented reality turned out to be false alarms.

Fortunately, there’s nothing precluding the hardware from being optimized for augmented reality. If we’re (still) being speculative, the A13 SoC is powerful enough to manage advanced augmented reality features... and we’d have to think Apple focusing on the camera system and machine learning is foreshadowing for the next few years of hardware development.

Essentially, Apple uses its ML-focused A13 to analyze nine different images when you snap a photo. The engine takes the 24 million pixels made available to it from those nine images to return the optimal pixel-by-pixel image, which Apple says helps the Pro’s low-light performance. Apple’s Phil Schiller called it “computational photography mad science” on stage.

So, Where’s The Augmented Reality?

Let’s take stock of what we have, and what this means moving forward for AR.

The A13 SoC is too powerful for its own good. It’s adept at managing top-end photo and video processing, but those are (like augmented reality) niche events. Apple is making excellent use of the chipset, but we just don’t believe it designed a special SoC just to help you take better selfies or improve low-light imagery.

Apple also optimized the A13 to be more power-efficient. The Pro models can also reach a 50 percent charge in about 30 minutes with the fast charger included in the box.

Spatial Audio is also part of the new iPhones, which can be quite useful for augmented reality. The phones support Dolby Atmos, too, where “sound moves around you in 3D space, so you feel like you’re inside the action.”

At WWDC 2019, Apple unveiled body tracking features for ARKit, and the three-camera array helps with depth perception as far as augmented reality is concerned. If Apple’s AR glasses do indeed launch next year, or later this year, we expect the A13 to do the heavy lifting... and we may even see the three-camera system replicated in eyewear. We’ll see.

iOS 13

iOS 13 launches September 19. The phones arrive September 20. If you’re not optimizing for Dark Mode, now’s the time to get it all squared away. Sign In With Apple is also available on iOS 13, and Siri Shortcuts is better for third-party apps.
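For Dark Mode, much of the work is replacing hard-coded colors with ones that adapt. A small sketch of one way to do that in UIKit on iOS 13: the name `cardBackground` and the specific gray value are our own placeholders, not anything Apple prescribes.

```swift
import UIKit

// A color that resolves differently depending on the current appearance.
// The dark value (12% white) is an arbitrary placeholder.
let cardBackground = UIColor { traits in
    return traits.userInterfaceStyle == .dark
        ? UIColor(white: 0.12, alpha: 1.0)
        : UIColor(white: 1.0, alpha: 1.0)
}
```

Where a custom color isn’t needed, the built-in semantic colors such as `UIColor.systemBackground` and `UIColor.label` adapt on their own.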