Apple’s annual Fall event happens Tuesday, and will undoubtedly bring new iPhones. But what else may Apple have in store for us? More specifically, what does it mean for technologists?

iPhone 11

Three, specifically. This year, Apple is believed to be bringing us three new iPhones: a refreshed XR, and iterations on its flagship XS and XS Max devices. This time, the XS line is believed to be re-branded as ‘Pro’ devices.

According to AppleInsider, the lineup will look like this: iPhone 11 replaces the XR, iPhone 11 Pro replaces the XS, and the iPhone 11 Pro Max replaces the iPhone XS Max. The main differentiator would be display technology; the 11 would carry an LCD screen, while the "Pro" models would have OLED panels.

Developers should be intrigued by a rumored third camera on the new iPhones. It’s unclear if Apple is planning to make the three-camera array a mainstay across its three new phones, but the rumor mill suggests the lower-cost XR replacement will house two shooters with the third camera an upgrade-only option for the ‘Pro’ phones.

This third camera will (allegedly) capture a wider-angle view, paired with software that can stitch that view together with what the other two cameras capture. The idea here is to help everyone snap the perfect image, every time. Instead of backing up and asking everyone to scoot into the frame, group shots can simply be snapped, and the images captured from the three cameras will be combined to return the perfect group shot.

This third camera is also said to be a Trojan horse of sorts for augmented reality. Apple is expected to frame (pun intended) augmented reality as a feature for adding effects to live video, much like it does now in its Clips app. The new phones will also have a new chip, dubbed AMX, to handle the heavy lifting of augmented reality, sources claim.

So what’s it mean for you? If you’re weaving camera features into your app, there may be a lot more tooling come September 23, when iOS 13 arrives. Currently, the phones are believed to land September 27.

New Accessories

Many expect Apple will introduce a Tile-like tracker on Tuesday, which should be a massive hit. In a nutshell, it is said to be just like a Tile – those little Bluetooth trackers you slip into any bag or wallet, or use as a keychain – but would utilize the entire iOS platform to help you track items.

If this is indeed the case – that is to say, if Apple really is turning the entire iPhone ecosystem into a network to track Tile-like dongles – expect this feature to be a sandboxed environment that can’t really be tapped into, much like Apple Wallet. That said, we also expect there to be some developer tooling to weave tracking into an app. While Apple will likely make its dongles viewable via the new-look Find My app for iOS, it should also give OEMs the ability to add tracking within their own apps.

We expect this product will serve as an introduction to a new Made for iPhone – or MFi – offering from Apple. While dongles are handy for luggage or bags, we think manufacturers will be interested in weaving the tech straight into items, even if that means using Apple-made chipsets or beacons.

Price is the curious point. A Tile can be purchased for $30 or so, which leads us to believe Apple’s dongles will cost around $50.

Apple AR

Remember Google Glass? Remember how terrible it was?

Remember how terrible the introduction of Google Glass was? Apple can do better.

Apple’s heads-up AR glasses are probably coming, and we may get a glimpse of them Tuesday. MacRumors spotted mention of an app named STARTester in the final version of iOS 13, which has a toggle for switching between normal and “head-mounted” mode. It also has a view controller and a scene manager, as well as “worn” and “held” modes.

“Held” and “worn” are curious, and make us wonder if Apple’s AR accessory program will have two unique items: glasses, and some sort of dongle. It’s unclear, but if we do see a glimpse of Apple’s AR plans at this event, we also wonder if there will be a glimpse of rOS, Apple’s alleged augmented reality operating system. Either way, augmented reality looms large, and this is of special interest for developers and engineers.

9to5Mac reports STARTester is essentially like the early days of WatchKit:

Stereo AR apps on iPhone work similar to CarPlay, with support for stereo AR declared in the app’s manifest. These apps can run in either “held mode”, which is basically normal AR mode, or “worn mode”, which is when used with one of these external devices. A new system shell – called StarBoard – hosts extensions that support the new AR mode, similar to how WatchKit apps worked in the original Watch.

It also reports there's support for a third-party AR device named "HoloKit," which suggests the HoloKit will be the development device for AR apps and services on Apple's platforms. The "external devices" it mentions are allegedly two Apple devices, but we're not going to jump to any conclusions. If anything, it's starting to feel like Apple just did a poor job of picking up its breadcrumbs in iOS betas, and we'll see a broader AR offering at WWDC 2020.

Watch the Event, Keep an Eye on the Portal

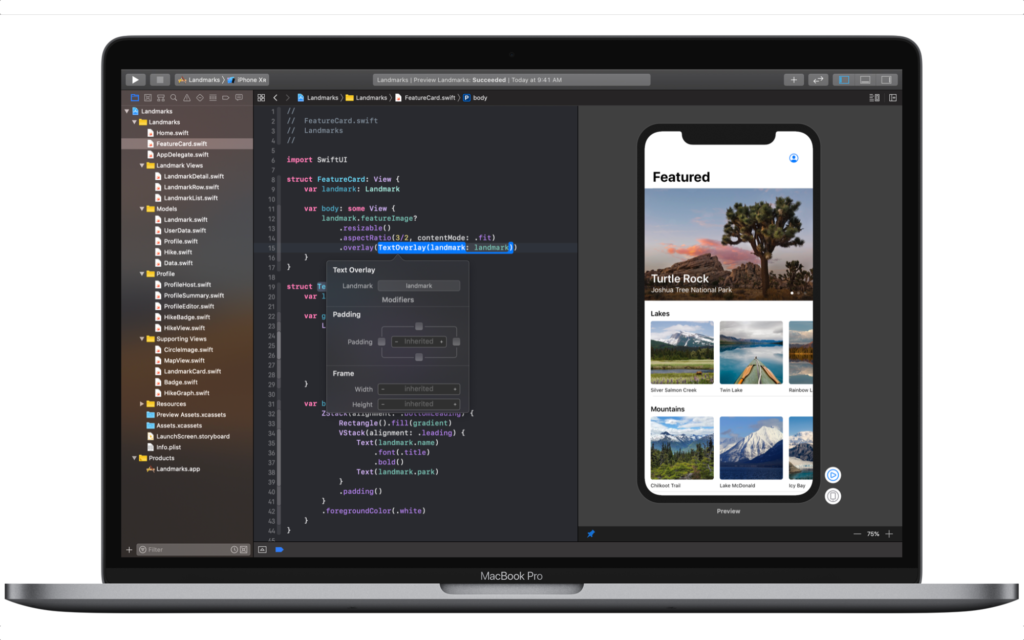

With iOS 13, macOS Catalina, and new watchOS and tvOS versions coming very soon (iOS 13 and watchOS 6 will land September 20, when new iPhones are expected to arrive), expect Apple’s developer documentation to change. Specifically, we’re looking for more documentation around SwiftUI and its associated frameworks.

There’s also chatter that new iPhones will drop support for 3D Touch, so if your app or service supports it, keep a close eye on how Apple plans to handle support for it with iOS 13. It’s still documented and supported for iOS, but we’re not sure how long it has at this point. Developer implementation of the feature was never great, and it can be replicated with Haptic Touch, the long-press substitute the iPhone XR already uses. If it leaves iPhone, expect its pressure-sensitive counterpart, Force Touch, to also vanish from Apple Watch.

We don’t expect a new Xcode to land Tuesday, as macOS has arrived a week or so after iOS the past few years. Catalina could also take longer; its feature-set is just not ready for delivery, and Xcode’s use of SwiftUI within the simulator is really wonky. If Apple holds off on Catalina and/or Xcode, that’s not a bad thing.

Waiting for March (and June)

Apple likes to hold two product-launch events annually, and WWDC in June. September is reserved for iPhone, while March is when it tends to discuss new iPads, Apple Watches, and the like.

So don’t expect radically new Apple Watches with fancy new sensors; watchOS 6 doesn’t even allude to features that will require new hardware, though AppleInsider reports new Apple Watches may be shown off nonetheless. They just won't be a massive refresh of any kind.

iPads don’t need to be updated – they’re already getting iPadOS. Still, the rumor mill says we'll see an iPad refresh in October. As with the Apple Watch, this is likely an iterative spec bump.

Apple may announce the Mac Pro is going on sale soon, but you’re not gonna buy one anyway. AirPods could use a refresh to add noise canceling and water resistance, but we expect that to come in March as well.

We also expect talk of services, like Apple Arcade (and possibly a new Apple TV alongside it) or Apple TV+, but those are just launch announcements.

If Apple discusses AR peripherals, the logic suggests getting them ready for holiday shopping is a priority – but Apple failed to get AirPods primed for the holiday gift-giving season, and they’re ubiquitous now. We expect its AR glasses/whatever could also debut in full at the company’s March event, with a preview announcement Tuesday.

For us, the real curiosity around AR is the development environment for heads-up displays, and when it will become available. The smart bet is June 2020 at WWDC.