- “Socially beneficial.”

- Avoid creating or reinforcing unfair bias.

- Be built and tested for safety.

- Be accountable to people.

- Incorporate privacy design principles.

- Uphold high standards of scientific excellence.

- Be made available for users who accord with these principles.

Microsoft Hints at Ethics Review for A.I. Development

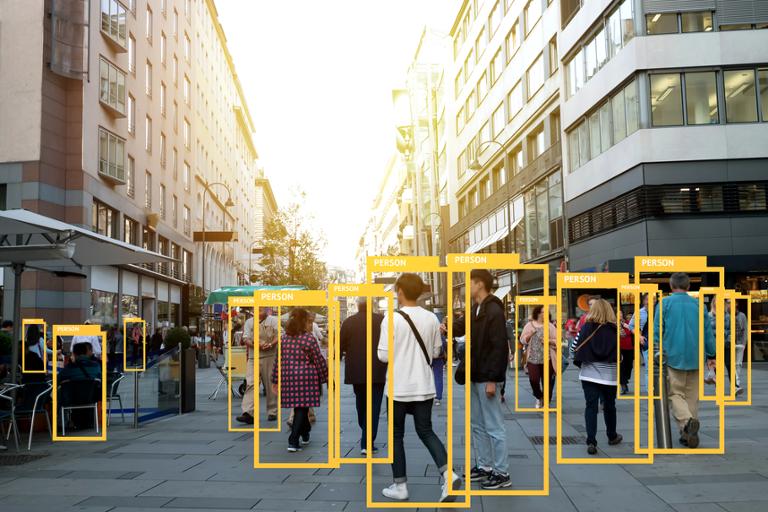

A number of A.I. researchers think that the technology industry needs to impose an ethical framework on artificial intelligence and machine-learning platforms. Aside from the obvious questions over what kind of ethics should apply, there’s also the uncertainty over when and how those ethics can be coded into A.I. and ML platforms. Microsoft has an idea about the latter conundrum, and it’s preparing to put it into action. Specifically, the company will add an “A.I. ethics review” to the checklist of audits preceding the release of new products (that’s in addition to audits for privacy, security, and accessibility). “We are working hard to get ahead of the challenges posed by AI creation,” said Harry Shum, executive vice president of Microsoft’s A.I. and Research group, told the audience at MIT Technology Review’s EmTech Digital conference. “But these are hard problems that can’t be solved with technology alone, so we really need the cooperation across academia and industry. We also need to educate consumers about where the content comes from that they are seeing and using.” Shum also cited the need for ethics auditing as part of any A.I. build: “This is the point in the cycle… where we need to engineer responsibility into the very fabric of the technology.” Microsoft isn’t the first company to baby-step into the thorny realm of A.I. ethics. Last summer, Google published a corporate blog posting that broke down ethical principles for artificial intelligence. “How A.I. is developed and used will have a significant impact on society for many years to come,” Google CEO Sundar Pichai wrote at the time. “As a leader in A.I., we feel a deep responsibility to get this right.” Google would align any new A.I. software with a few key objectives, Pichai added. For example, all software would need to be: