Google Opens Its Maps API to Augmented Reality Development

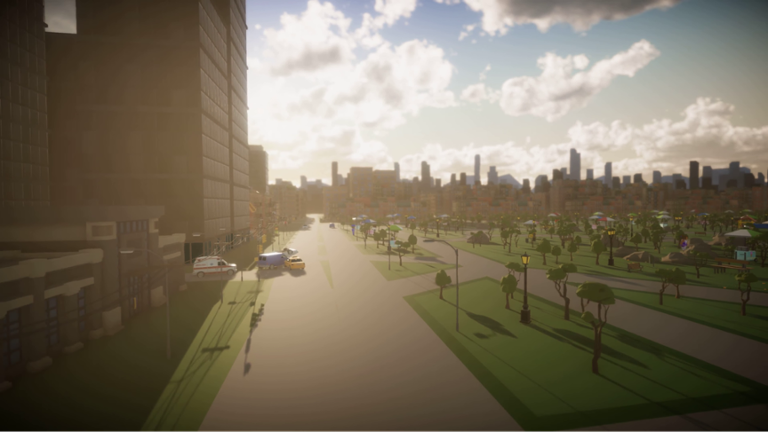

The blockbuster success of “Pokémon Go” in 2016 demonstrated that augmented reality, when married with the right content, could prove wildly successful among a broad cross-section of users. But in the years since that game hit the street (literally), relatively few developers have attempted to create games or apps that take advantage of real-world locations. That in itself is surprising; some pundits expected the Google and Apple app stores to fill with “Pokémon Go” rip-offs. But it might be a consequence of tooling, or a lack thereof: ARKit and ARCore, the AR development kits for Apple and Google (respectively), are still somewhat new to tech pros, and most developers don’t have access to the resources that Niantic Labs and The Pokémon Company could deploy in building “Pokémon Go.”

Now Google is aiming to democratize the process of building real-world AR games, by opening up the Google Maps APIs. “With Google Maps’ real-time updates and rich location data, developers can find the best places for playing games, no matter where their players are,” read Google’s official blog posting on the release. “We turn buildings, roads, and parks into GameObjects in Unity, where developers can then add texture, style, and customization to match the look and feel of your game.”

The idea of giving developers access to Google Maps’ hundreds of millions of 3D buildings and roads worldwide is a powerful one. Google claims that its Maps receive some 25 million data updates daily, and handle more than a billion active users.

Google is pushing Unity as the game-development platform ideal for building games with Google Maps data. Google isn’t Unity’s only partner when it comes to AR; IBM recently announced an open-source SDK that would allow Unity developers to better integrate natural-language queries into a game’s flow. (Google’s announcements also come just as Unreal unveiled a cross-platform AR development tool for both iOS and Android.)

In addition to opening up the Google Maps APIs, Google is also open-sourcing DeepLab V3+, a semantic image segmentation A.I. model, and Resonance Audio, a spatial audio SDK. DeepLab V3+ helps computers recognize objects in photos; Resonance Audio makes audio more “realistic” in the context of AR and VR. With all of these advances, Google is clearly all-in on AR. But will developers gravitate toward these offerings?