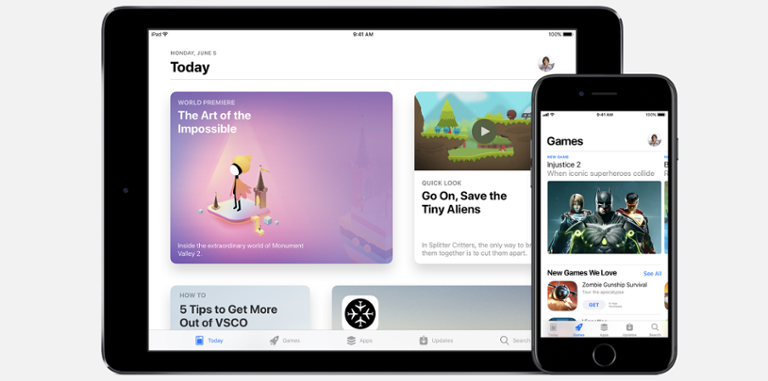

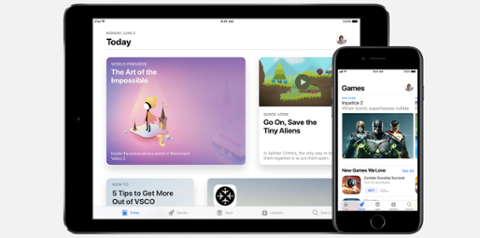

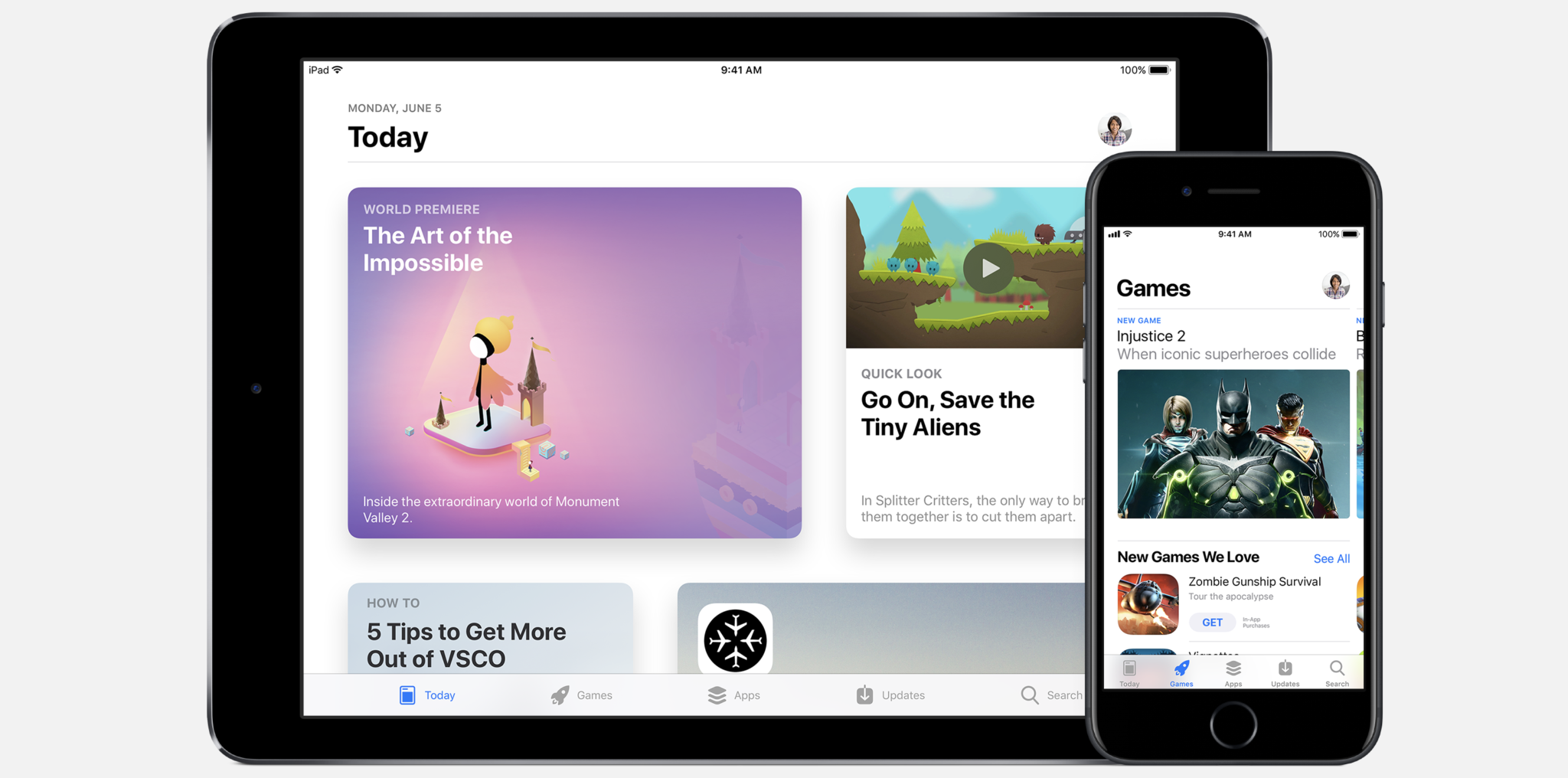

[caption id="attachment_142149" align="aligncenter" width="2970"]

The App Store in iOS 11[/caption]

Today’s the day: iOS 11, the next version of Apple's mobile operating system, will hit hundreds of millions of phones and tablets worldwide. What used to be an iterative upgrade is now critical for developers, as iOS 11 brings a litany of new features and tools they need to be aware of.

In many ways, iOS 11 represents a sea change in the same way iOS 7 and 8 did. There is a lot of visual clean-up, and the underlying structure is also changing. With the introduction of a totally new hardware form factor (as represented by iPhone X) and augmented reality (AR), today’s update represents a critical moment for iOS-based mobile app development.

Not to worry – we’ve got you covered. Though we can’t get into all the nooks and crannies of what iOS 11 will mean for developers, here are five things you need to keep your eye on.

[caption id="attachment_138519" align="aligncenter" width="1360"]

Mac App Store WWDC 2016[/caption]

The App Store

What used to be a cursory backend app for updating apps is quickly becoming a destination for users. The new-look App Store is more than a visual update: it changes the way users discover apps, and it alters how developers present them.

App landing pages are now critical to an app’s success. Developers used to treat them as benign download portals, but that simply won’t suffice in iOS 11. In addition to slimming the App Store down to three categories (apps, games and ‘today’), Apple has re-imagined app landing pages, which are now more like bespoke websites for apps. The information gleaned from those pages hasn’t changed (users can still see reviews and the like), but developers are now being asked to carefully consider video, app screenshots and descriptive text for their app.

Such data is more critical than ever now that Apple has chosen to remove the App Store from desktop iTunes. As it stands, the only Apple channel for users to discover new apps is on their phones or tablets, and the interaction they have with app landing pages will be critical. Studies show users decide very quickly which apps they want to download.

[caption id="attachment_142053" align="aligncenter" width="3443"]

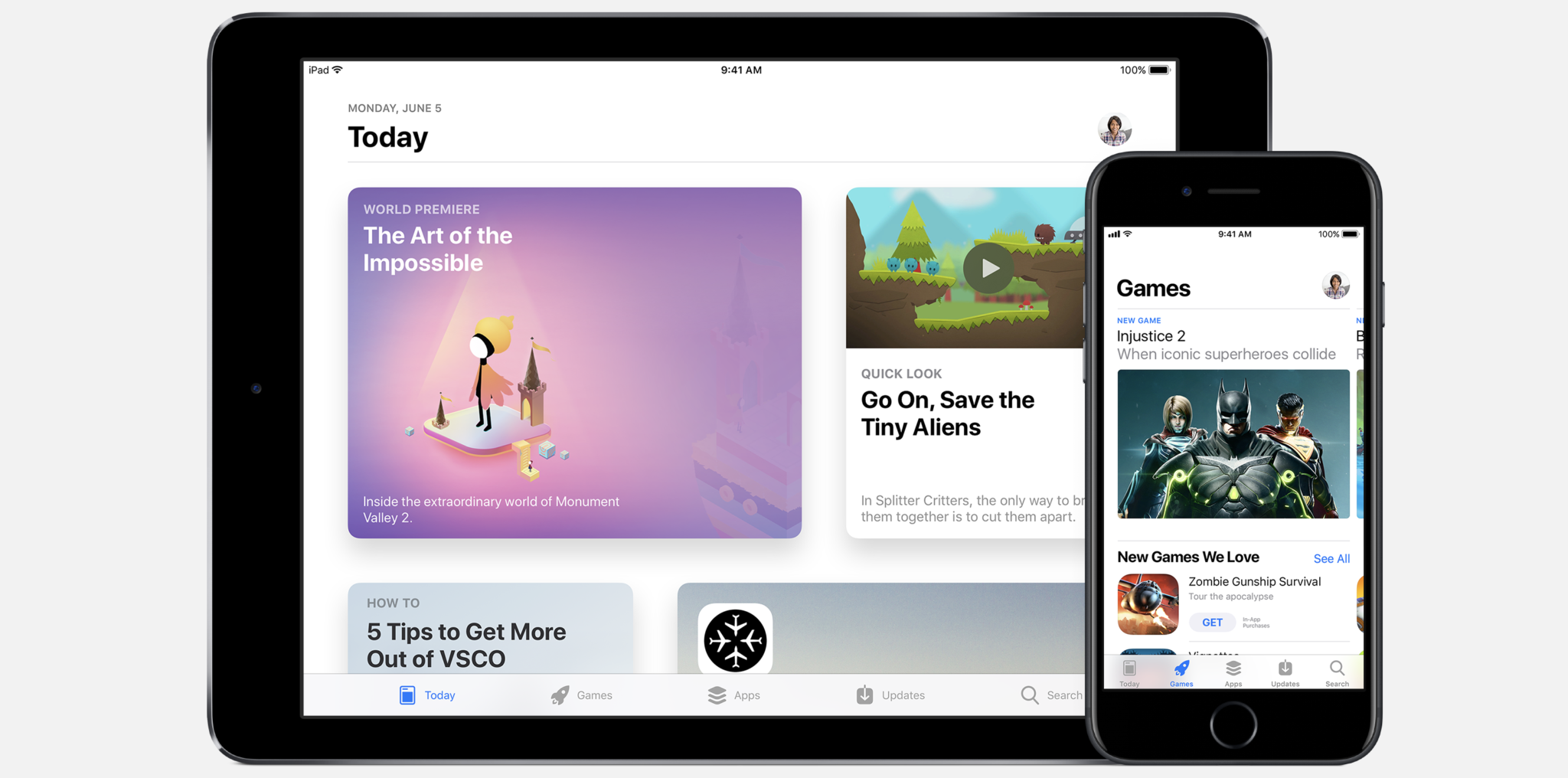

Augmented Reality via ARKit at WWDC 2017[/caption]

Augmented Reality

Apple’s latest operating system ships with an actual reality distortion field: ARKit. ARKit is Apple’s best stab at augmented reality (AR) for mobile. It’s been mimicked by Google’s ARCore, which has yet to ship. Early returns show ARKit to be a fairly robust offering. It’s also changing the mobile paradigm. Many apps have great use cases for augmented reality, so ARKit will have an immediate and noticeable impact. Games, the largest revenue-generating category in the App Store, may just plain explode with AR functionality.

ARKit’s framework does a lot for developers. At its core, the platform combines the device's camera feed with motion-sensor data to track where the device is in a space, a technique called Visual Inertial Odometry. It also finds flat surfaces on which to place virtual objects, then anchors those objects in the real-world scene, so walking around a scene presents a 3D image rather than a moving 2D landscape. ARKit leans on SceneKit, Apple’s framework for creating 3D content for iOS, and Metal, the company’s low-overhead graphics rendering framework.
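To make the plane-finding and anchoring described above a little more concrete, here is a minimal sketch using ARKit with SceneKit. The view controller name, the `makePlaneDetectionConfiguration()` helper and the 10 cm box are illustrative assumptions, not anything from Apple's sample code; the ARKit and SceneKit types are the real ones. It assumes the app's Info.plist contains a camera usage description and that it runs on a device that supports world tracking.

```swift
import UIKit
import SceneKit
import ARKit

// Hypothetical helper: builds a world-tracking configuration that
// asks ARKit to look for horizontal surfaces such as floors and tables.
func makePlaneDetectionConfiguration() -> ARWorldTrackingConfiguration {
    let configuration = ARWorldTrackingConfiguration()
    configuration.planeDetection = .horizontal
    return configuration
}

// Hypothetical view controller that drops a small box on each detected plane.
final class PlaneDetectionViewController: UIViewController, ARSCNViewDelegate {
    private let sceneView = ARSCNView()

    override func viewDidLoad() {
        super.viewDidLoad()
        sceneView.frame = view.bounds
        sceneView.autoresizingMask = [.flexibleWidth, .flexibleHeight]
        sceneView.delegate = self
        view.addSubview(sceneView)
    }

    override func viewWillAppear(_ animated: Bool) {
        super.viewWillAppear(animated)
        // Start tracking the device's position and scanning for planes.
        sceneView.session.run(makePlaneDetectionConfiguration())
    }

    override func viewWillDisappear(_ animated: Bool) {
        super.viewWillDisappear(animated)
        sceneView.session.pause()
    }

    // ARKit calls this when it adds a SceneKit node for a new anchor.
    func renderer(_ renderer: SCNSceneRenderer, didAdd node: SCNNode, for anchor: ARAnchor) {
        // Only react to anchors that represent detected flat surfaces.
        guard anchor is ARPlaneAnchor else { return }

        // A 10 cm box, raised by half its height so it sits on the plane.
        let box = SCNBox(width: 0.1, height: 0.1, length: 0.1, chamferRadius: 0)
        let boxNode = SCNNode(geometry: box)
        boxNode.position = SCNVector3(0, 0.05, 0)

        // Children of the anchor's node stay fixed to the real-world surface.
        node.addChildNode(boxNode)
    }
}
```

Because the box is a child of the node ARKit manages for the plane anchor, it keeps its place in the room as the user walks around it.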

Machine Learning and Neural Networks

ARKit covers the fun side of development. Core ML, Apple’s machine learning framework, does the heavy lifting.

Core ML (the ‘ML’ is for ‘machine learning’) is a bit of a shell for established machine-learning tools. Apple didn’t try to re-invent the wheel here. Rather, Core ML acts as a sort of abstraction layer between machine learning models and your app.

So why do developers need it? First, it’s the only direct method of weaving a machine learning model into an iOS app. Second, it makes machine learning really easy to implement and use. As Core ML taps into existing machine learning models, it’s also a good way to get up and running with things like language processing and image scanning without asking developers to spend hours building a fresh model. With Core ML, a developer could target a specific use case, too; an app doing little more than translating between English and Portuguese, for example, is easy to spin up.

The iPhone X also comes with a neural engine, and there's every reason to think all future iOS devices will, as well. To that end, machine learning is becoming critical to success in mobile.

[caption id="attachment_143571" align="aligncenter" width="1248"]

iPhone X safe area[/caption]
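Returning to Core ML for a moment: the "layer between the model and your app" idea from the machine learning section can be sketched with Core ML and the Vision framework. This is a rough illustration, not Apple's sample code. The `classify(_:using:completion:)` and `summary(label:confidence:)` functions are hypothetical names, and the sketch assumes you already have an image-classification model loaded as an `MLModel` (for instance, from a model file added to your Xcode project).

```swift
import CoreML
import Vision
import CoreGraphics

// Hypothetical helper: formats a classification result for display.
func summary(label: String?, confidence: Float) -> String {
    guard let label = label else { return "No result" }
    let percent = Int((confidence * 100).rounded())
    return "\(label) (\(percent)%)"
}

// Hypothetical function: runs an image through a classification model
// and reports the most confident label via the completion handler.
func classify(_ image: CGImage,
              using model: MLModel,
              completion: @escaping (String) -> Void) throws {
    // Wrap the Core ML model so Vision can scale and convert the image for it.
    let visionModel = try VNCoreMLModel(for: model)

    let request = VNCoreMLRequest(model: visionModel) { request, _ in
        // Classification models produce one observation per candidate label.
        let observations = request.results as? [VNClassificationObservation] ?? []
        let best = observations.max { $0.confidence < $1.confidence }
        completion(summary(label: best?.identifier, confidence: best?.confidence ?? 0))
    }

    // perform(_:) runs synchronously, so call this off the main thread in a real app.
    let handler = VNImageRequestHandler(cgImage: image, options: [:])
    try handler.perform([request])
}
```

The app code never touches the model's internals; it hands over an image and gets back a label, which is the point of the layer.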

Safe Area!

About six weeks after iOS 11 lands, iPhone X arrives. This is a bigger deal than many realize. With the larger screen come slimmer bezels. The best way developers can prepare for this is via safe areas. To put it succinctly, a safe area is the portion of the screen where text and imagery can safely appear. Without it, existing apps may look really bad on the iPhone X; they may even be rejected if they’re not tweaked appropriately.

If you think a $1,000-plus smartphone is niche, think again. A full five days after launch, you can still pick up an iPhone 8 or 8 Plus from Apple Stores everywhere on Friday; that's unusual, as iPhone ship dates typically slip to weeks or months soon after pre-orders pop up. This year, it seems everyone is waiting for the iPhone X to come out.

If you’re curious about best practices for iPhone X optimization, we suggest reading our handy guide on what’s in the pipeline. The fixes aren’t hard, but they will soon become very necessary.
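As a small illustration of what "using safe areas" can look like in code, the sketch below pins a label to the view's `safeAreaLayoutGuide`, which UIKit added in iOS 11. The view controller name, label text and 16-point margins are made up for the example.

```swift
import UIKit

// Hypothetical view controller that keeps its content inside the safe area.
final class SafeAreaViewController: UIViewController {
    let titleLabel = UILabel()

    override func viewDidLoad() {
        super.viewDidLoad()
        view.backgroundColor = .white

        titleLabel.text = "Hello, iPhone X"
        // Opt out of autoresizing so the constraints below take effect.
        titleLabel.translatesAutoresizingMaskIntoConstraints = false
        view.addSubview(titleLabel)

        // Constrain to the safe area rather than to the view's own edges,
        // so the label stays clear of rounded corners and system UI.
        let safeArea = view.safeAreaLayoutGuide
        NSLayoutConstraint.activate([
            titleLabel.topAnchor.constraint(equalTo: safeArea.topAnchor, constant: 16),
            titleLabel.leadingAnchor.constraint(equalTo: safeArea.leadingAnchor, constant: 16),
            titleLabel.trailingAnchor.constraint(lessThanOrEqualTo: safeArea.trailingAnchor, constant: -16)
        ])
    }
}
```

On devices without the iPhone X's cutouts, the safe area covers more of the screen, so the same constraints should work across hardware.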

iPad

If your app is optimized for the iPad, there’s a good chance you’ll need to re-think it, especially if it’s a productivity app, a segment Apple seems increasingly focused on with its largest mobile device. Drag and Drop lets users move content between apps, including between the two apps in a split-screen view. The underlying tech is boilerplate stuff, but optimizing for it is a choice developers will have to make. Within the Drag and Drop API are several protocols and classes that can be implemented for use with split-screen and Apple’s new multi-touch interactions.

iOS 11 also builds on APFS, the file system Apple brought to iOS earlier this year. With it comes Files, a mobile app for managing files across apps and services. There’s not much work for developers there (it just corrals documents into one place), but features like Open in Place make it easier to open a file from anywhere. It’s a workaround for proper windowing of apps, but does need some attention from developers.

This is also a good time to re-visit Apple Pencil support. Apple is big on Apple Pencil for the iPad Pro, and supporting it is as simple as adding a bit of code that accounts for estimated touch values for those times the Pencil lags:

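    // Fragment from a UIView subclass; StrokeSample and strokeCollection
    // are defined elsewhere in the app.

    // Samples whose values are still estimates, keyed by estimation update index.
    var estimates: [NSNumber: StrokeSample] = [:]

    func addSamples(for touches: [UITouch]) {
        if let stroke = strokeCollection?.activeStroke {
            for touch in touches {
                if touch == touches.last {
                    // The last touch in the array is recorded as a regular sample.
                    let sample = StrokeSample(point: touch.location(in: self),
                                              forceValue: touch.force)
                    stroke.add(sample: sample)
                    registerForEstimates(touch: touch, sample: sample)
                } else {
                    // Earlier touches in the array are marked as coalesced.
                    let sample = StrokeSample(point: touch.location(in: self),
                                              forceValue: touch.force,
                                              coalesced: true)
                    stroke.add(sample: sample)
                    registerForEstimates(touch: touch, sample: sample)
                }
            }
            self.setNeedsDisplay()
        }
    }

    func registerForEstimates(touch: UITouch, sample: StrokeSample) {
        // Remember the sample only if its force value is still an estimate;
        // bind the index safely instead of force-unwrapping it.
        if touch.estimatedPropertiesExpectingUpdates.contains(.force),
           let index = touch.estimationUpdateIndex {
            estimates[index] = sample
        }
    }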
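The Drag and Drop API mentioned earlier in this section can be sketched in a similar spirit. The example below is a minimal, assumed setup (the view controller name and the single image view are hypothetical) that lets an image be dragged out of a view and lets an image from another app be dropped onto it, using UIKit's `UIDragInteraction` and `UIDropInteraction`.

```swift
import UIKit

// Hypothetical view controller whose image view is both a drag source
// and a drop destination.
final class DragDropViewController: UIViewController,
                                    UIDragInteractionDelegate,
                                    UIDropInteractionDelegate {
    let imageView = UIImageView()

    override func viewDidLoad() {
        super.viewDidLoad()
        imageView.frame = view.bounds
        imageView.autoresizingMask = [.flexibleWidth, .flexibleHeight]
        imageView.contentMode = .scaleAspectFit
        // Image views ignore touches unless this is switched on.
        imageView.isUserInteractionEnabled = true
        view.addSubview(imageView)

        imageView.addInteraction(UIDragInteraction(delegate: self))
        imageView.addInteraction(UIDropInteraction(delegate: self))
    }

    // Drag source: hand UIKit the items to carry when a drag begins.
    func dragInteraction(_ interaction: UIDragInteraction,
                         itemsForBeginning session: UIDragSession) -> [UIDragItem] {
        guard let image = imageView.image else { return [] }
        return [UIDragItem(itemProvider: NSItemProvider(object: image))]
    }

    // Drop destination: only accept sessions that can supply an image.
    func dropInteraction(_ interaction: UIDropInteraction,
                         canHandle session: UIDropSession) -> Bool {
        return session.canLoadObjects(ofClass: UIImage.self)
    }

    // Tell UIKit the drop will copy the data rather than move it.
    func dropInteraction(_ interaction: UIDropInteraction,
                         sessionDidUpdate session: UIDropSession) -> UIDropProposal {
        return UIDropProposal(operation: .copy)
    }

    // Load the dropped image and show it.
    func dropInteraction(_ interaction: UIDropInteraction,
                         performDrop session: UIDropSession) {
        session.loadObjects(ofClass: UIImage.self) { [weak self] items in
            self?.imageView.image = items.first as? UIImage
        }
    }
}
```

Drag interactions are enabled by default on iPad; on iPhone, the interaction's `isEnabled` property would need to be set to `true`.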

[caption id="attachment_138521" align="aligncenter" width="3563"]

App Store Guidelines Comic WWDC 2016[/caption]

iOS 11 Support is Easy

This sounds like a lot of work, but it’s really just a lot to do. Much of it is optional, too; a game doesn’t need to support split-screen on the iPad, for instance. Other items, like re-visiting your App Store landing page, deserve developers' close attention; so do safe areas. Those two areas will dictate success for mobile developers for the foreseeable future.
