[caption id="attachment_137343" align="aligncenter" width="654"]

Apple's Siri digital assistant on macOS.[/caption] You know how digital assistants and translation software work, but have you ever wondered how they know different languages? While machine learning plays a big part, the initial process can be surprisingly analog. Google has something called a

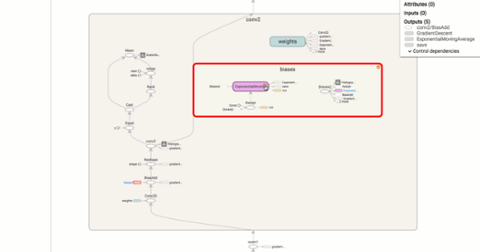

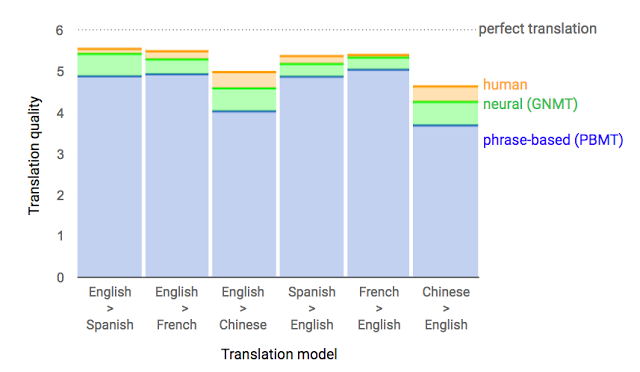

Neural Machine Translation system (GNMT), which it leans into for translation. It’s mostly machine learning, and pretty potent. From Google:

A few years ago we started using Recurrent Neural Networks (RNNs) to directly learn the mapping between an input sequence (e.g. a sentence in one language) to an output sequence (that same sentence in another language) [2]. Whereas Phrase-Based Machine Translation (PBMT) breaks an input sentence into words and phrases to be translated largely independently, Neural Machine Translation (NMT) considers the entire input sentence as a unit for translation. The advantage of this approach is that it requires fewer engineering design choices than previous Phrase-Based translation systems. When it first came out, NMT showed equivalent accuracy with existing Phrase-Based translation systems on modest-sized public benchmark data sets.

Google goes on to describe how it breaks words down into smaller fragments and represents them as vectors, which helps the system handle words it has not seen before. It’s a bit of language hacking: Google’s engine parses those word fragments to understand new words and phrases that use the same bits, and studies the emphasis placed on those identifiers (as well as the pauses between them).

[caption id="attachment_140389" align="aligncenter" width="640"]

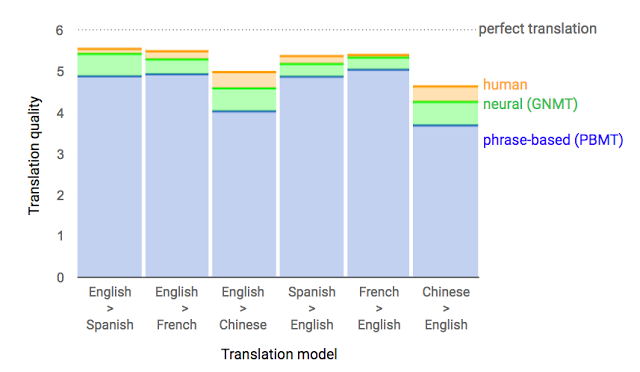

Google language translation[/caption]

The translation software can learn new languages, too. Google found that teaching its engine to translate between English and two other languages also produced a rough translation between those two languages. In a whitepaper, researchers say this "zero-shot translation" used no direct data for some language pairs: "A multilingual NMT model trained with Portuguese→English and English→Spanish examples can generate reasonable translations for Portuguese→Spanish, although it has not seen any data for that language pair."

To learn a completely new language, Google and Apple both rely on humans. Google uses a "trusted tester" program that invites native speakers to teach its system in the wild. It's a bit clandestine, and it's the same kind of human touch that Apple uses.

Speaking to Reuters, Apple's head of speech Alex Acero says the company first brings in humans to read passages in a variety of languages and dialects. Those readings are transcribed by hand and fed to Siri so it can compare written and verbal cues. Siri then compiles a language model and attempts to predict new words and phrases. Once Apple is comfortable with what Siri is capable of, it deploys “dictation mode,” a speech-to-text feature, in the new language. When customers use this feature, Apple snips bits of their spoken phrases, anonymizes them, and feeds them back to Siri. It’s a means to round out Siri’s understanding of a new language and boost the platform's ability to separate speech from ambient noise.

Siri is then released in the wild, but with limited functionality. Acero says Apple only makes Siri available for the most common questions at launch, and updates it bi-monthly (with the anonymized data Apple is collecting) to quietly expand its capabilities.

The results are striking. Siri is capable of understanding and speaking 21 languages, localized for 36 countries. Google Assistant can converse in four languages. Alexa is only fluent in English and German, and Cortana knows eight languages for use in 13 countries.

Being able to speak to your favorite digital assistant in your native tongue is obviously desirable, but the answers they return are more critical. Google and Apple differ there. Siri may be fluent in more languages, but it leans on Bing for search results it doesn’t natively know, which probably doesn’t have Google too worried.
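For the curious, the word-fragment idea mentioned earlier can be illustrated with a toy example. This is only a rough sketch, not Google's implementation: the function name `split_into_fragments` and the tiny hand-picked vocabulary `TOY_VOCAB` are assumptions made up for illustration, whereas a production system would learn its fragment vocabulary from large amounts of text. The sketch greedily takes the longest known fragment at each position, so a word the system has never seen can still be expressed in pieces it does know.

```python
def split_into_fragments(word, vocab):
    """Greedily split `word` into the longest fragments found in `vocab`.

    Characters that match no known fragment are emitted on their own,
    so the function always returns pieces that rejoin into `word`.
    """
    pieces = []
    start = 0
    while start < len(word):
        end = len(word)
        # Shrink the candidate until it is a known fragment (or empty).
        while end > start and word[start:end] not in vocab:
            end -= 1
        if end == start:
            # No known fragment starts here: fall back to one character.
            end = start + 1
        pieces.append(word[start:end])
        start = end
    return pieces


# Hypothetical, hand-picked vocabulary (an assumption for illustration only).
TOY_VOCAB = {"un", "trans", "lat", "ion", "able", "s"}

if __name__ == "__main__":
    for word in ("translations", "untranslatable"):
        print(word, "->", split_into_fragments(word, TOY_VOCAB))
```

Here "untranslatable" never appears in the vocabulary as a whole word, yet it can still be broken into familiar pieces, which is the intuition behind recognizing new words from known bits.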

(Correction: A previous version of this story compared two disparate machine learning technologies. We've edited our article to clarify some points surrounding translation and learning a new language.)
