Ever since research scientists coined the term ‘artificial intelligence’ more than sixty years ago, the idea of a self-thinking computer has occupied a special place in the public consciousness. But now companies seem to have come around to the idea that, with enough technology and talent, A.I. can become an actual product. Those firms include Google, IBM, Apple, Facebook, and Infosys. And they’re all fishing in the same talent pool for technology professionals who can build a workable A.I. platform. Such an unusual endeavor demands unusual skill sets, making it difficult to land the right candidates for the job.

Teaching Machine Learners

A.I. programmers have to come from somewhere. Perhaps unsurprisingly, the typical starting point is college. “As someone who teaches an undergraduate A.I. course, I typically encounter students with no explicit prior A.I. programming experience,” said Jim Boerkoel, assistant professor of computer science at Harvey Mudd College. “Increasingly, students will have had some prior experience developing A.I. solutions as part of a summer internship or research program.”

While an understanding of A.I. and machine learning is becoming more commonplace, “there is still a pronounced shortage of talent,” added Gary Kazantsev, head of machine learning at Bloomberg, speaking via email. “In fact, it is getting worse as more and more enterprises form their own A.I. groups and make A.I. part of their corporate strategy.”

If people with sufficient A.I. experience cannot be found, there are other ways to fill the skill gap. “[We] expect everyone to do a lot of on-the-job learning. It is relatively uncommon to find candidates who have years of experience with machine learning problems, though it would be great if we could,” said Joel Dodge, a software engineer at Infer, via email. Another hiring approach is to take a specialist in one field and teach them the skills for another, said Abdul Razack, senior VP and head of platforms at Infosys. That might mean taking a statistical programmer and training them in data strategy, or teaching more statistics to someone skilled in data processing.

Some skill sets do provide a useful foundation for additional training in A.I. “The best way to break in to A.I. is to just get your feet wet and to learn… A number of our engineers took Andrew Ng’s Coursera course on machine learning before starting here, and this course is a truly great introduction to the field,” Dodge said.
“Mathematical background—solid grasp of probability, statistics, linear algebra, mathematical optimization—is crucial for those who wish to develop their own algorithms or modify existing ones to fit specific purposes and constraints,” Kazantsev said.

Intelligent Thinking

Skill sets aside, programming for A.I. requires a change in conceptual thinking. “The old paradigm was you knew the problem you had to solve… and you threw technology and skilled people at it,” Razack said. “When looking at applying artificial intelligence to certain scenarios… there is this notion of problem finding,” he continued.

This deductive skill is very much in demand, and exposes another facet of A.I. design: the first iteration of an artificially intelligent platform will probably get a lot of things wrong. An A.I. program has to “learn” its tasks through multiple iterations over time, all the while tapping into a large dataset for its information. “For predictive apps, we also want the finished product to be ‘good enough’ at the predictive problem it is solving. This can open up subtle questions about how the product is used that requires a lot of care to deal with,” Dodge added.

Developing innovative A.I. applications “requires a deeper understanding of how an A.I. algorithm makes the decisions it does,” Boerkoel said. A programmer who lacks this knowledge reduces A.I. to the level of a “black box,” limiting the ability to iterate on or grow the platform effectively.

“One cannot treat these methods as magic black boxes; that can be very dangerous in the wrong environment,” Kazantsev echoed. “The first, non-negotiable rule we have to teach new developers is ‘look at your data.’ It is not sufficient to look at the results of your algorithm pipeline; you have to look at both ends of it.” Testing the systems requires a high degree of involvement. “You need to be aware of over-fitting, outliers and other inconvenient properties of your data set, and be able to detect and avoid these issues,” Kazantsev said.

Like a lot of computer science, the idea of A.I. came along before the technology that made it possible, Razack observed: “What changed is the amount of data available for you to process and the ability to compute.” Multiple applications are now possible, albeit often in rough form. The ability to run an A.I. platform off commodity hardware, as opposed to a supercomputer, is also a game-changer.
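To make Kazantsev’s advice to “look at your data” a little more concrete, here is a minimal sketch in Python using only the standard library. It is an illustration, not anything the interviewees described: the function names (`flag_outliers`, `memorizer`, `accuracy`), the 3.5 threshold, and all of the numbers are assumptions invented for the example. The first function flags outliers with a modified z-score based on the median absolute deviation; the rest shows the usual symptom of over-fitting, a model that scores perfectly on the data it was trained on and poorly on data it has never seen.

```python
import statistics


def flag_outliers(values, threshold=3.5):
    """Return values whose modified z-score exceeds the threshold.

    The score uses the median and the median absolute deviation (MAD),
    which are less distorted by extreme values than the mean and stdev.
    The 3.5 cutoff is a common rule of thumb, assumed here.
    """
    median = statistics.median(values)
    mad = statistics.median(abs(v - median) for v in values)
    if mad == 0:
        return []  # no spread to measure against
    return [v for v in values if 0.6745 * abs(v - median) / mad > threshold]


def memorizer(train_rows):
    """A deliberately over-fit 'model': a lookup table of the training rows.

    Inputs it has never seen fall back to label 0.
    """
    table = dict(train_rows)
    return lambda x: table.get(x, 0)


def accuracy(predict, rows):
    """Fraction of (feature, label) rows that predict() labels correctly."""
    return sum(predict(x) == y for x, y in rows) / len(rows)


# Made-up sensor readings with one obviously bad value.
readings = [10.1, 9.8, 10.3, 10.0, 9.9, 10.2, 55.0]
print("outliers:", flag_outliers(readings))

# Made-up labeled data, split into training rows and held-out rows.
train = [(1, 1), (2, 0), (3, 1), (4, 0)]
holdout = [(5, 1), (6, 0), (7, 1), (8, 1)]
model = memorizer(train)
print("train accuracy:  ", accuracy(model, train))
print("holdout accuracy:", accuracy(model, holdout))
```

A large gap between the two accuracy figures is the warning sign. In practice the same comparison would be made with a real model and a properly held-out test set, for instance with the scikit-learn package Dodge mentions below.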

Watch Your Language… Mind Your Business

Experts offered varying (but overlapping) advice when it came to the ideal programming languages for A.I., often citing R and Python. Dodge added a few open-source Python packages: NumPy, SciPy and scikit-learn. “Languages like Lisp and Prolog are particularly well-suited for solving certain A.I. problems. However, there are libraries that make programming in any popular language (Python, C++, Java, MATLAB, etc.) straightforward. The ‘right’ language depends significantly on the nature of the problem and the needs of the application,” Boerkoel said.

“For high performance computing applications, GPU programming, FPGAs, one is mostly constrained to C and C++. Java is quite popular due to wide use in Apache projects, and Scala, another JVM language, is becoming more popular,” Kazantsev added. GPU programming and parallel processing are also key skills.

Still, there are things A.I. can and cannot do, and programmers should be aware of the technology’s limits. “Playing by the rules” is one of the biggest strengths of A.I. Facial recognition, interpreting medical X-rays, driving a car, or even playing a game of Go are all within software’s algorithmic grasp. Yet one must be mindful of the data: is it stable over time? Is it causing feedback loops and throwing off results? Is the programmer’s choice of model correctly reflecting their mathematical assumptions? “A.I., or machine learning, is not magic. No amount of A.I. will help you to solve a problem which is incorrectly posed, or a problem where the data does not contain enough information to predict the target variable,” Kazantsev said.
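One of the questions above, whether the data is stable over time, lends itself to a quick first check. The sketch below is a hedged illustration in standard-library Python: the function name `mean_shift`, the half-and-half split, and the sample numbers are all assumptions made for the example, and a real project would likely use a proper statistical test. It compares the average of the early part of a series with the average of the late part, measured in units of the early part’s standard deviation.

```python
import statistics


def mean_shift(series, split=0.5):
    """Return how far the late mean has moved from the early mean,
    in units of the early segment's sample standard deviation.

    Assumes the early segment has at least two points.
    """
    cut = int(len(series) * split)
    early, late = series[:cut], series[cut:]
    spread = statistics.stdev(early)
    difference = statistics.mean(late) - statistics.mean(early)
    if spread == 0:
        # No variation early on: any change at all counts as a shift.
        return 0.0 if difference == 0 else float("inf")
    return difference / spread


# Made-up measurements: one series stays put, the other jumps halfway through.
stable = [5.0, 5.2, 4.8, 5.1, 4.9, 5.0, 5.1, 4.9]
drifting = [5.0, 5.2, 4.8, 5.1, 6.9, 7.0, 7.1, 6.9]

for name, series in [("stable", stable), ("drifting", drifting)]:
    shift = mean_shift(series)
    verdict = "looks unstable" if abs(shift) > 2 else "looks stable"
    print(f"{name}: shift = {shift:.2f} early standard deviations, {verdict}")
```

The cutoff of two standard deviations is an arbitrary choice for the illustration. The point is only that a model trained on the early data may be working from assumptions the later data no longer satisfies.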

Pretty Soon, the Future is Present

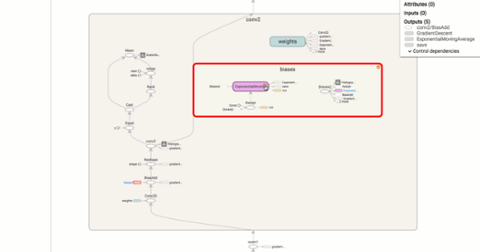

Artificial intelligence is already well on its way towards common usage. But what will the future look like? “Finding good engineering talent is always challenging; people who can effectively apply their knowledge to building compelling products are rare. I don’t think ‘A.I. programming’ is going to be any different from ‘regular programming’ in that sense,” Kazantsev said. For his part, Dodge believes that A.I. will become a well-integrated part of the software engineering toolkit: “That said, data will continue to be tricky to work with, and machine learning and A.I. will continue to provide deep and difficult challenges for humans.”

Organizations such as the Allen Institute for Artificial Intelligence are working on solutions to key problems in the field. Meanwhile, Facebook and Google have been open-sourcing powerful A.I. and machine learning tools such as TensorFlow. While this makes A.I. an exciting place to be, Boerkoel also sounded a skeptical note: “The question is, will there be enough programmers who understand the algorithms and their data well-enough to do truly innovative, transformative things?”

Transformation is happening at the product level at Infosys, which is pitching its MANA AI platform to corporate clients. Right now A.I. shows a lot of promise in systems management, Razack said, adding: “We are still in the early stages of applying A.I.” Problem discovery is the challenge of the era. But once programmers know what problems they want to solve with regard to machine intelligence, they will know what datasets they need to help them build the future.
