For years, Intel has focused on two programming languages: C++ and Fortran. Now the company has released a third language implementation: Python. The release includes a number of packages used in high-performance computing, including NumPy and SciPy. So how does Intel’s distribution compare to standard Python (i.e., CPython)?

The beta is available for Windows, Linux, and Mac OS X, and supports Python versions 2.7 and 3.5. It features performance accelerations via Intel MKL, Intel MPI, Intel TBB, and Intel DAAL. On the Mac, you need OS X 10.11; Windows 7 through 10 are supported. My own preference is Linux; all of the following examples were run in VirtualBox running Ubuntu 14.04 LTS. (The Linux download is 800 MB, which includes many relevant open-source packages provided as binaries, with no compilation required; note that this software is 64-bit.)

Intel Python comes with Conda for managing packages; if you already use “full” Anaconda for running and updating packages and dependencies, you’ll need to follow the (short) instructions for using the Intel Distribution with Anaconda. Once you’ve completed installation, you can use the "which python" command (for 2.7) or the "which python3" command (for 3.5) to show the path to the installation. On Linux, the standard path for CPython is /usr/bin/python. On my Ubuntu 14.04 LTS system, I installed Intel Python 3.5; “which python3” shows the following:

/opt/intel/intelpython35/bin/python3

The examples compare standard CPython 3 (3.4, to be precise) against Intel Python 3.5. The command python3.4 runs scripts using the older CPython, and python3 runs them using Intel Python. This small test program, test1.py, prints the version of the Python that ran it, to confirm that the correct interpreter is running each example:

[code language="python"]
import sys
print(sys.version)
[/code]

This outputs:

[code language="python"]
>python3 test1.py
3.5.1 (default, Mar 22 2016, 01:32:51)
[GCC Intel(R) C++ gcc 4.8 mode]
>python3.4 test1.py
3.4.3 (default, Oct 14 2015, 20:28:29)
[GCC 4.8.4]
[/code]
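If the version string alone is not enough to tell the two installations apart, a slightly longer check can also report the interpreter's path and whether it is a 64-bit build. This is only a sketch using the standard library; the helper name describe_interpreter is made up for illustration. Since Intel's distribution builds on CPython, the implementation field would presumably read "CPython" under both interpreters, so the path and version are the fields to compare.

```python
import platform
import sys


def describe_interpreter():
    """Collect a few facts that identify the running Python (hypothetical helper)."""
    return {
        "executable": sys.executable,  # same path that "which python3" should show
        "version": sys.version.replace("\n", " "),
        "implementation": platform.python_implementation(),
        "is_64bit": sys.maxsize > 2 ** 32,  # True on 64-bit builds
    }


if __name__ == "__main__":
    for key, value in sorted(describe_interpreter().items()):
        print("%s: %s" % (key, value))
```

Running it once with python3 and once with python3.4 should make it obvious which installation handled each run.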

What's Different about Intel Python?

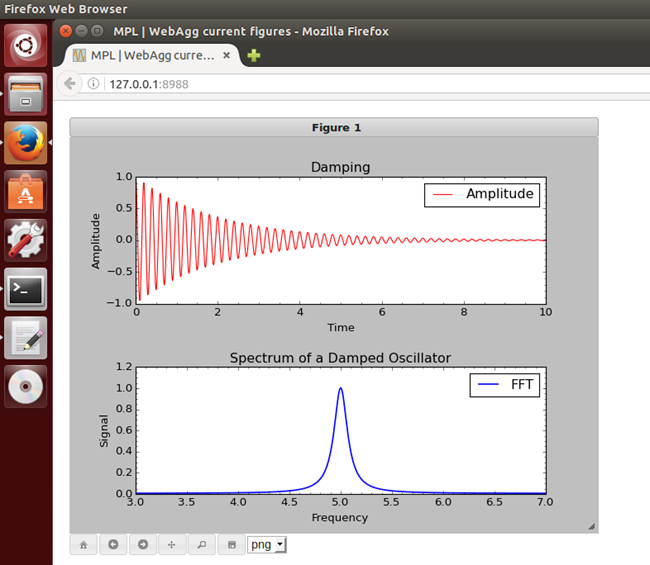

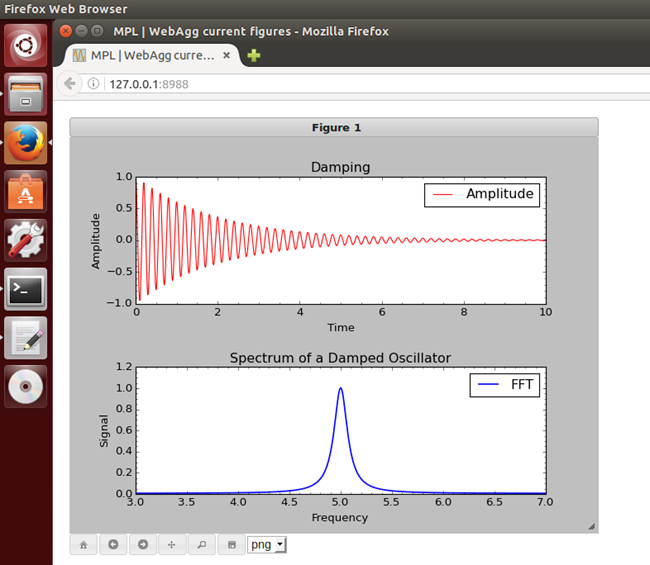

The recent beta updates many packages while adding a few new ones. It also extends Linux support to the recent Ubuntu 16.04, as well as Debian 7, RHEL, Fedora and SUSE Linux Enterprise Server. The developers of Intel Python emphasize its performance gains, achieved by integrating the aforementioned NumPy and SciPy with Intel TBB, Intel MKL and Intel DAAL; the TBB package accelerates threads when used with NumPy, SciPy, pyDAAL, Dask, and Joblib (more on that below).

As a quick test of NumPy and matplotlib, I ran the numpy_fft example from this college’s examples. It ran perfectly.

But not all programs run faster. For example, random.rand (from numpy.random) in Intel Python runs at half the speed of its CPython counterpart. To compensate, Intel provides its own, quicker version; replacing numpy.random with numpy.random_intel not only results in faster random number generation, but also gives access to many other algorithms from Intel MKL.

In some cases, there are dramatic improvements, and not just in high-performance code. The following program, which calculates pi using 50-digit precision, takes 29 seconds under Python 3.4 but just one second with Intel's distribution. Extending the precision to 500 digits raises those times to 43 and 1.6 seconds, respectively:

[code language="python"]
from decimal import Decimal, getcontext

getcontext().prec = 50  # digits of precision for Decimal arithmetic
s = Decimal(1)  # sign of the next term
pi = Decimal(3)
n = 500000  # loop bound; the series adds n - 1 terms
for i in range(2, n * 2, 2):
    pi = pi + s * (Decimal(4) / (Decimal(i) * (Decimal(i) + Decimal(1)) * (Decimal(i) + Decimal(2))))
    s = -1 * s
print("Approximate value of PI :", pi, " for ", n)
[/code]
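To reproduce a comparison like this on your own machine, it helps to wrap the series in a function and time it the same way under each interpreter. The following is a minimal sketch, not the harness used for the figures above: the names series_pi and time_call are made up for illustration, and the term count is an assumption, kept far smaller than the article's 500000 so the script finishes quickly.

```python
import time
from decimal import Decimal, getcontext


def series_pi(terms, precision=50):
    """Approximate pi with the same alternating series as the program above."""
    getcontext().prec = precision
    sign = Decimal(1)
    pi = Decimal(3)
    for i in range(2, terms * 2, 2):
        d = Decimal(i)
        pi += sign * (Decimal(4) / (d * (d + 1) * (d + 2)))
        sign = -sign
    return pi


def time_call(func, *args, repeats=3):
    """Return the best wall-clock time in seconds over several runs."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        func(*args)
        best = min(best, time.perf_counter() - start)
    return best


if __name__ == "__main__":
    terms = 20000  # assumption: reduced from the article's 500000 for a quick run
    for precision in (50, 500):
        seconds = time_call(series_pi, terms, precision)
        print("precision", precision, "terms", terms, "best time %.3f s" % seconds)
```

Saving this as a script and running it once with python3.4 and once with python3 gives two sets of timings to compare. Taking the best of several runs is one way to reduce noise from other processes, which matters inside a virtual machine.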

What is Intel DAAL?

Included with Intel Python is Intel DAAL, the Intel Data Analytics Acceleration Library. This C++ library can work with data from a variety of sources and process it in memory; it uses Intel TBB and Intel MKL for extra processing speed. A large variety of machine learning algorithms are included for analysis, training and prediction. The pydaal package provides a Python interface to DAAL.

Multi-threading in Python

Python was not designed for multi-threading. In CPython, only one thread can execute Python code at a time: any thread that touches Python objects must first hold a lock called the Global Interpreter Lock (GIL), so threads running pure Python code take turns rather than running in parallel. Working around it gets very messy. Intel’s Python features a module that enables threading composability between thread-enabled libraries, streamlining at least some of the complexity associated with multi-threading in Python. If you decide to toy around with Intel's distribution, make sure to examine Intel TBB (Intel Threading Building Blocks) as a potential tool for multi-threading; Intel already offers some documentation.
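The effect of the Global Interpreter Lock is easy to see with the standard library alone. The sketch below does not use Intel TBB; it simply runs the same CPU-bound, pure-Python function sequentially and then on a thread pool. The function names and the workload size are made up for illustration. On a typical CPython build, the threaded run would not be expected to finish much faster than the sequential one, since the threads take turns holding the lock.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def count_primes(limit):
    """CPU-bound pure-Python work: count primes below limit by trial division."""
    count = 0
    for n in range(2, limit):
        if all(n % d for d in range(2, int(n ** 0.5) + 1)):
            count += 1
    return count


def run_sequential(limits):
    """Process each workload one after another on the main thread."""
    return [count_primes(limit) for limit in limits]


def run_threaded(limits, workers=4):
    """Process the workloads on a pool of threads; results keep input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(count_primes, limits))


if __name__ == "__main__":
    limits = [20000] * 4  # assumption: an arbitrary workload for illustration
    for label, runner in (("sequential", run_sequential), ("threaded", run_threaded)):
        start = time.perf_counter()
        results = runner(limits)
        print(label, results, "%.3f s" % (time.perf_counter() - start))
```

Libraries such as NumPy can release the lock while they run native code, which is where thread-enabled libraries and a scheduler like TBB come into play.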

Final Note

The inclusion of Intel DAAL in the platform is particularly clever. While it’s not the only Big Data solution available to Python users, it may end up being one of the fastest, potentially making the programming language even more of a data-analytics player.
