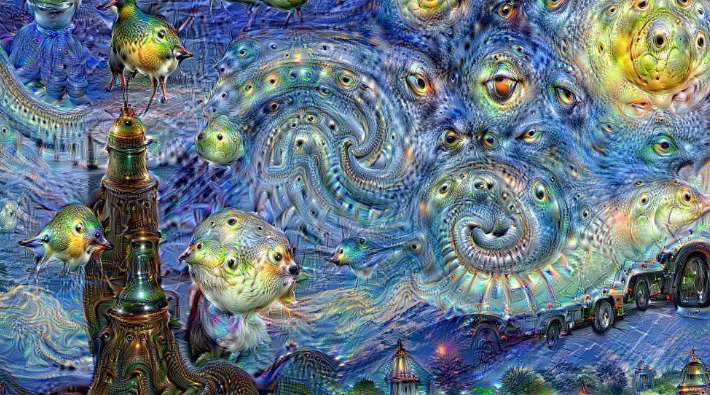

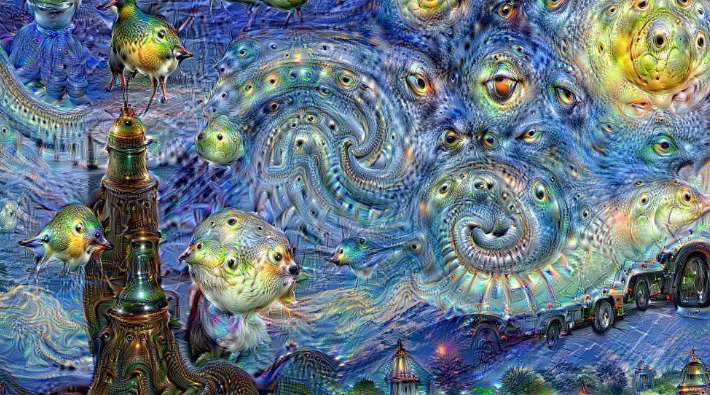

Google’s next big artificial-intelligence project: teaching a computer to make art. The project, codenamed “Magenta,” will focus on “developing algorithms that can learn how to generate art and music, potentially creating compelling and artistic content.” It will involve artists, coders, and machine-learning researchers.

How does one actually turn a hunk of silicon and electrical impulses into the second coming of David Bowie? Neural networks can already alter images in weird and wonderful ways (as evidenced by Google’s own DeepDream Generator, which created the above artwork), but generating images from nothing is another problem entirely, one that Google’s researchers think can be solved through machine learning.

Even if those researchers come up with an effective generation model, however, there’s the question of imbuing the product with the dynamism that comes with good art. “This leads to perhaps our biggest challenge: combining generation, attention and surprise to tell a compelling story,” read a note posted on the Magenta project’s official blog. “So much machine-generated music and art is good in small chunks, but lacks any sort of long-term narrative arc.”

From a software perspective, Magenta will rely on TensorFlow, Google’s open-source machine-learning system with a Python-based interface. TensorFlow focuses on flexibility and portability, allowing users on a variety of systems to leverage its machine-learning capabilities. Over the next several months, those working on the project will release new TensorFlow-supported models and tools onto Magenta’s GitHub page.

If you’re interested in exploring how computers can potentially craft art, check it out; but it could be quite some time before the project produces a machine-made song that’s any good.