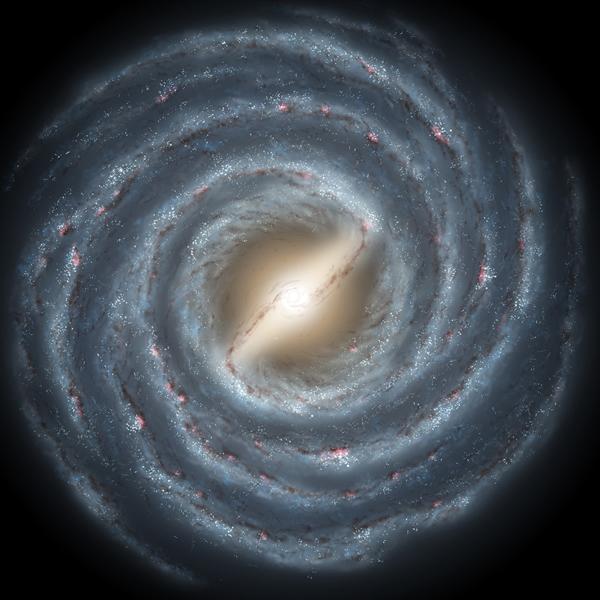

I've recently begun working on a space-themed game set in a galaxy, and I've decided to create the background from a photograph. I generate the background by displaying a 100 x 100 grid of colors, with the color of each block determined by the brightness of the corresponding section of the photograph. The image I started with is a 600 x 600 pixel photograph of a spiral galaxy from NASA (above), which I divided into a 100 x 100 grid to match the grid I'm using in the game. Each section of the grid is assigned a value between 0 and 99 representing its color. So far, all of this is pretty simple. But there's one problem: how do you quantify the brightness of a pixel?

Brightness of Pixels

Luckily, I'm not the first to try to measure brightness. Six years ago, Nir of NBD-Tech published an article on a similar topic. The article explores how to convert an RGB value into a brightness value that accounts for how bright each color is perceived to be. It simplifies what would otherwise be a fairly complex subject requiring a conversion from RGB to HSL (Hue, Saturation and Lightness) or HSV (Hue, Saturation and Value). HSL and HSV are color models that remap RGB values into coordinates that are more representative of how we perceive color. As the Wikipedia article on HSL and HSV shows, the conversion is a fairly technical process, which helps explain why image-editing software can be so complex when handling colors. The NBD-Tech article reduces it to a single equation (below) by defining a few constants. Read his article and decide which method you prefer.

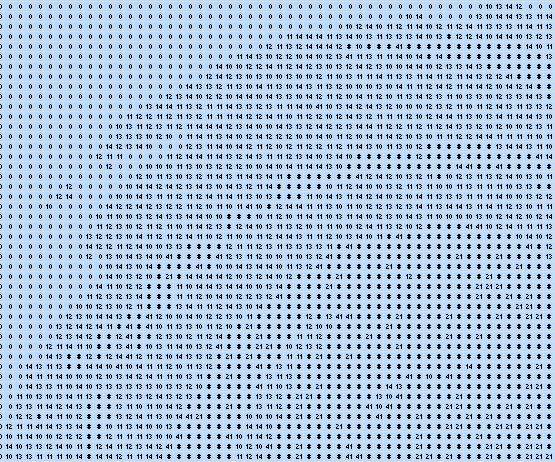

$$\text{Brightness} = \sqrt{0.241R^2 + 0.691G^2 + 0.068B^2}$$
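The formula translates directly into C#. The sketch below is a minimal version written as a C# 9+ top-level program, assuming each channel is an int from 0 to 255 (the range of the R, G and B bytes on System.Drawing.Color). The name PerceivedBrightness is hypothetical and not taken from the downloadable project.

```csharp
using System;

// Perceived brightness of one pixel, using the weights from the NBD-Tech article.
// r, g and b are channel values in the range 0-255; the result is also in 0-255.
static double PerceivedBrightness(int r, int g, int b) =>
    Math.Sqrt(0.241 * r * r + 0.691 * g * g + 0.068 * b * b);

// Green contributes far more to perceived brightness than blue does.
Console.WriteLine($"white: {PerceivedBrightness(255, 255, 255):F1}");
Console.WriteLine($"green: {PerceivedBrightness(0, 255, 0):F1}");
Console.WriteLine($"blue:  {PerceivedBrightness(0, 0, 255):F1}");
```

Because the three weights add up to exactly 1.0, pure white comes out at 255 (up to floating-point rounding), while pure green scores about 212 and pure blue only about 66.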

The equation above produces a value in the range 0 to 255. To scale it to the 0 to 99 range I've chosen for the game, you divide the value by 2.55. (Strictly speaking, because the three weights sum to 1.0, a pure-white pixel scores 255, which divides to exactly 100, so that top value has to be capped at 99.)
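Scaling is a single division, but the edge case matters: since the formula tops out at 255, dividing by 2.55 can yield 100 for a pure-white block. This sketch (the name ToGridValue is hypothetical) truncates to an integer and clamps the result so it stays in the 0 to 99 range the game expects; the original code may handle the edge case differently.

```csharp
using System;

// Map a brightness value in the range 0-255 to a grid value in the range 0-99.
// Dividing by 2.55 gives 0-100, so the result is clamped to keep pure white at 99.
static int ToGridValue(double brightness)
{
    int value = (int)(brightness / 2.55);   // truncate toward zero
    return Math.Clamp(value, 0, 99);
}

Console.WriteLine(ToGridValue(0));      // black
Console.WriteLine(ToGridValue(127.5));  // mid-gray
Console.WriteLine(ToGridValue(255));    // pure white, clamped
```

Truncation rather than rounding is a design choice here; rounding would push more blocks up to the cap.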

Further complicating the problem, my original image is 600 x 600 pixels and my grid is 100 x 100, so the value of each block in the grid must represent the brightness of all 36 pixels it contains (6 x 6). To find a block's value, all 36 pixels are converted into individual brightness values and then averaged together. (The averages are then written to a CSV file.)
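The averaging step can be sketched without any WinForms dependency. This version assumes the image has already been read into a hypothetical int[height, width, 3] array of R, G and B values (in a WinForms app the pixels would come from a Bitmap, for example via Bitmap.GetPixel). BlockAverages, ToCsv and the one-row-per-line CSV layout are illustrative assumptions, not the downloadable code.

```csharp
using System;
using System.Linq;
using System.Text;

// Perceived brightness (0-255) using the NBD-Tech weights.
static double Brightness(int r, int g, int b) =>
    Math.Sqrt(0.241 * r * r + 0.691 * g * g + 0.068 * b * b);

// Split the image into blockSize x blockSize blocks and return the average
// brightness of each block, scaled to 0-99.
static int[,] BlockAverages(int[,,] pixels, int blockSize)
{
    int rows = pixels.GetLength(0) / blockSize;
    int cols = pixels.GetLength(1) / blockSize;
    var grid = new int[rows, cols];

    for (int row = 0; row < rows; row++)
    for (int col = 0; col < cols; col++)
    {
        // Convert every pixel in the block to brightness, then average.
        double sum = 0;
        for (int y = row * blockSize; y < (row + 1) * blockSize; y++)
        for (int x = col * blockSize; x < (col + 1) * blockSize; x++)
            sum += Brightness(pixels[y, x, 0], pixels[y, x, 1], pixels[y, x, 2]);

        double average = sum / (blockSize * blockSize);
        grid[row, col] = Math.Min((int)(average / 2.55), 99);  // 255 / 2.55 = 100, so cap at 99
    }
    return grid;
}

// Format the grid as CSV: one line per grid row, comma-separated values.
static string ToCsv(int[,] grid)
{
    var sb = new StringBuilder();
    for (int row = 0; row < grid.GetLength(0); row++)
    {
        var values = Enumerable.Range(0, grid.GetLength(1)).Select(col => grid[row, col]);
        sb.AppendLine(string.Join(",", values));
    }
    return sb.ToString();
}

// Tiny demo: a 12 x 12 black image (instead of 600 x 600) with a white top-left 6 x 6 block.
var image = new int[12, 12, 3];
for (int y = 0; y < 6; y++)
for (int x = 0; x < 6; x++)
    for (int c = 0; c < 3; c++) image[y, x, c] = 255;

Console.Write(ToCsv(BlockAverages(image, 6)));
```

For the demo this prints a 2 x 2 grid whose top-left value is 99 and whose other values are 0; a 600 x 600 pixel array with a block size of 6 gives the 100 x 100 grid. The result could then be saved with File.WriteAllText.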

The Code

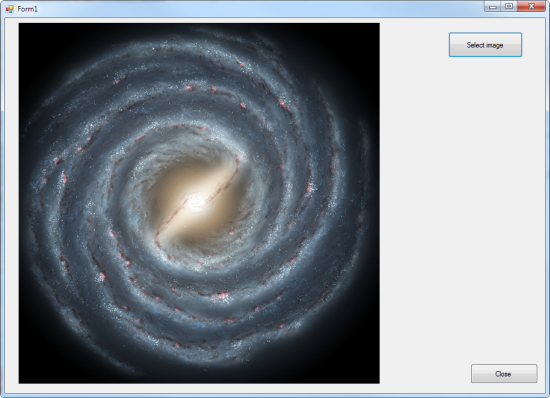

I thought C# would be the easiest language to write this in because of the way it handles image processing. I set up a WinForms project and created a form with a 600 x 600 PictureBox and two buttons: a Select Image button and a Close button. The Select Image button uses an OpenFileDialog component to select a file, which is then processed by the ProcessImage() method.

The ProcessImage() method reads the PictureBox's Image property as a Bitmap, providing access to individual pixels. A simple helper class, ImageBlock, handles the conversion of the 600 x 600 pixels to 100 x 100 values. For each 6 x 6 block, it reads the red, green and blue components of every pixel (each a 0-255 value), converts each pixel to a brightness value, averages those values and divides the average by 2.55 to scale it to the 0 to 99 range. If you take a look at the code (available for download here), you'll notice one final step: a filter is applied to the values so that anything below 20 is zeroed and the rest are brought into a range between 10 and 50. That's just the starting position for the game and not a necessary step. Later I added a check box that lets the user choose whether the filter is applied, and a button to reprocess the image in case the filter is turned off.
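The filter can be sketched as well, but the article doesn't say exactly how values are "brought into a range between 10 and 50". This hypothetical version assumes a linear rescale of 20-99 onto 10-50; ApplyFilter, FilterGrid and the filterEnabled flag (standing in for the check box) are illustrative names, not the project's.

```csharp
using System;

// Hypothetical starting-position filter: values below 20 become 0, and
// values from 20 to 99 are linearly rescaled into 10-50 (an assumed mapping).
static int ApplyFilter(int value)
{
    const int threshold = 20, low = 10, high = 50, max = 99;
    if (value < threshold) return 0;
    // 20 maps to 10 and 99 maps to 50; integer division rounds down.
    return low + (value - threshold) * (high - low) / (max - threshold);
}

// Apply the filter in place when enabled; otherwise leave the raw 0-99 values.
static void FilterGrid(int[,] grid, bool filterEnabled)
{
    if (!filterEnabled) return;
    for (int row = 0; row < grid.GetLength(0); row++)
        for (int col = 0; col < grid.GetLength(1); col++)
            grid[row, col] = ApplyFilter(grid[row, col]);
}

var sample = new int[,] { { 5, 20 }, { 60, 99 } };
FilterGrid(sample, filterEnabled: true);
Console.WriteLine($"{sample[0, 0]} {sample[0, 1]} {sample[1, 0]} {sample[1, 1]}");
```

This prints `0 10 30 50`. Because the filter overwrites the grid in place, turning it off means recomputing the raw values from the image, which is the job of the reprocess button described above.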