Google’s BigQuery analytics platform allows developers to crunch massive amounts of data while providing secure SSL access and group- and user-based permissions via Google accounts. It’s one of Google’s more powerful public-facing analytics tools, having added a variety of capabilities since its May 2012 release (including

Big JOIN and native support for importing and querying timestamp data). Whatever the underlying analytics platform, however, working with truly epic datasets creates its own issues, not the least of which is the need to optimize processes so they’re efficient and hopefully not too resource-intensive. Google’s Cloud Platform Blog offers some of those optimization scenarios, and the

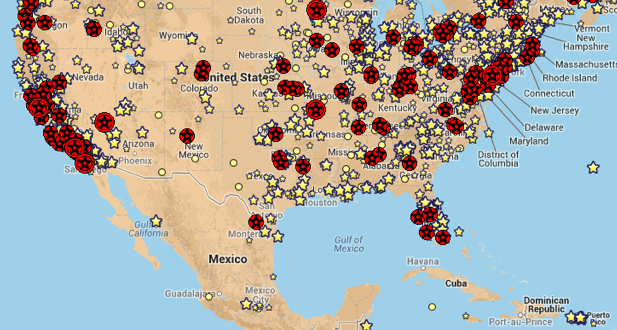

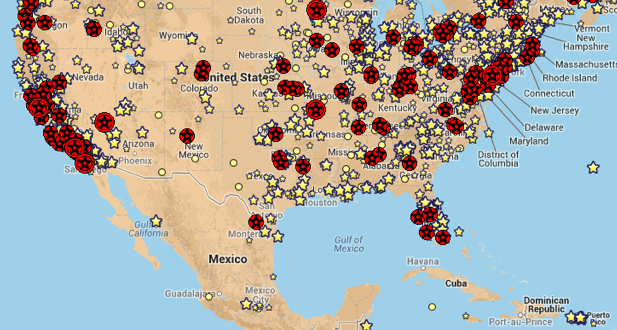

latest one could prove interesting to any developer with a need to aggregate numbers (such as IP addresses) by geo-location. In the included example, Google BigQuery is used to figure out the top cities contributing edits to Wikipedia, using the latter’s own publicly-available data in conjunction with the Creative Commons-licensed GeoLite City IPv4 (made available by MaxMind in .csv format). After uploading blocks and locations tables from GeoLite into BigQuery, a developer can squeeze out better performance via some additional processing; as related by Google developer programs engineer Felipe Hoffa:

“To get better performance later, some processing was needed: For each rule I extracted into a new column its class B prefix (192.168.x.x) and generated duplicate rules for segments that spanned more than one B class. I also joined both original tables, to skip that step when processing data. In the StackOverflow question 'user2881671' went even further, generating additional rules for segments without a location mapping (cleverly using the LAG() window function), but I skipped that step here (so addresses without a location will be skipped rather than counted). In total, only 32,702 new rows were needed.”

Hoffa continued: “The final query JOINs the class B prefix from your IP addresses with the lookup table, to prevent the performance hit of doing a full cross join.” Of course, such queries aren’t limited to Wikipedia; anyone with a dataset containing IPv4 IP addresses can attempt to join that information with other data. While BigQuery isn’t the only tool out there for “big analytics” (not by a long shot), it could be just the thing for those developers who want a cloud-based data-crunching tool. For those who use it, the Blog is well worth checking out.

Image: Google

Google’s BigQuery analytics platform allows developers to crunch massive amounts of data while providing secure SSL access and group- and user-based permissions via Google accounts. It’s one of Google’s more powerful public-facing analytics tools, having added a variety of capabilities since its May 2012 release (including Big JOIN and native support for importing and querying timestamp data). Whatever the underlying analytics platform, however, working with truly epic datasets creates its own issues, not the least of which is the need to optimize processes so they’re efficient and hopefully not too resource-intensive. Google’s Cloud Platform Blog offers some of those optimization scenarios, and the latest one could prove interesting to any developer with a need to aggregate numbers (such as IP addresses) by geo-location. In the included example, Google BigQuery is used to figure out the top cities contributing edits to Wikipedia, using the latter’s own publicly-available data in conjunction with the Creative Commons-licensed GeoLite City IPv4 (made available by MaxMind in .csv format). After uploading blocks and locations tables from GeoLite into BigQuery, a developer can squeeze out better performance via some additional processing; as related by Google developer programs engineer Felipe Hoffa:

Google’s BigQuery analytics platform allows developers to crunch massive amounts of data while providing secure SSL access and group- and user-based permissions via Google accounts. It’s one of Google’s more powerful public-facing analytics tools, having added a variety of capabilities since its May 2012 release (including Big JOIN and native support for importing and querying timestamp data). Whatever the underlying analytics platform, however, working with truly epic datasets creates its own issues, not the least of which is the need to optimize processes so they’re efficient and hopefully not too resource-intensive. Google’s Cloud Platform Blog offers some of those optimization scenarios, and the latest one could prove interesting to any developer with a need to aggregate numbers (such as IP addresses) by geo-location. In the included example, Google BigQuery is used to figure out the top cities contributing edits to Wikipedia, using the latter’s own publicly-available data in conjunction with the Creative Commons-licensed GeoLite City IPv4 (made available by MaxMind in .csv format). After uploading blocks and locations tables from GeoLite into BigQuery, a developer can squeeze out better performance via some additional processing; as related by Google developer programs engineer Felipe Hoffa: