[caption id="attachment_17591" align="aligncenter" width="618"]

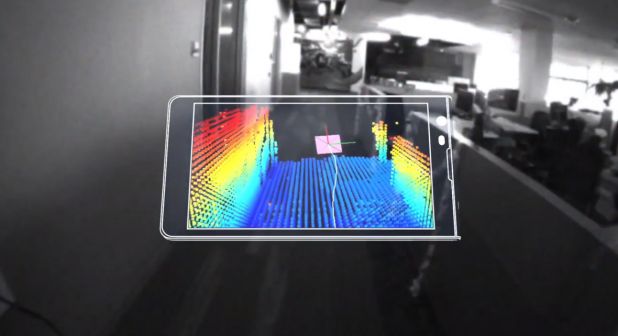

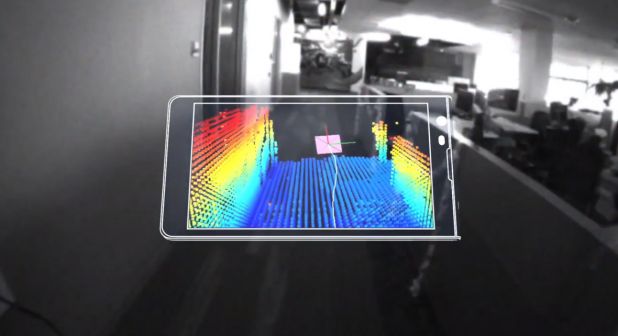

A Google visualization of how Project Tango works.[/caption] Google’s

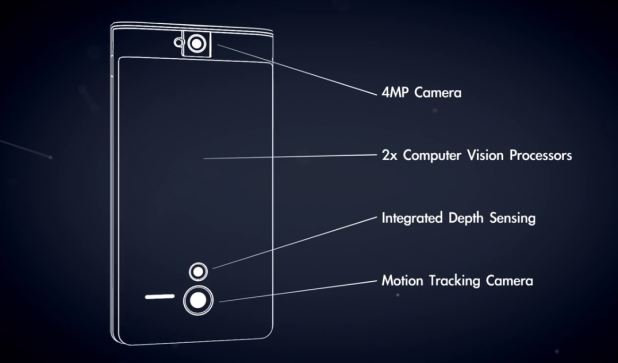

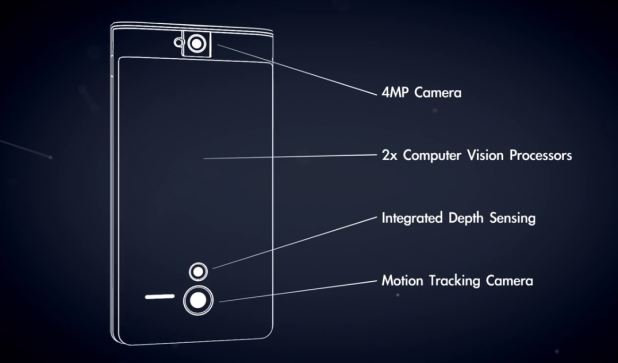

Advanced Technology and Projects Group is working on a new initiative, Project Tango, which could allow developers to quickly map objects and interiors in 3D At the heart of Project Tango is a prototype smartphone with a 5-inch screen, packed with hardware and software optimized to take 3D measurements of the surrounding environment. The associated development APIs can feed tons of positioning and orientation data to Android applications written in Java, C/C++, and the Unity Game Engine. In addition to a “standard” 4-megapixel camera, the device features a motion-tracking camera and an aperture for integrated depth sensing; integrated into the circuitry are two computer-vision processors.

Google claims it only has 200 developer units in stock, and it’s willing to give them to independent developers who can submit a detailed idea for a project involving 3D mapping of some sort. The deadline for unit distribution is March 14, 2014.

“We are physical beings that live in a 3D world,” reads Google’s website devoted to Project Tango. “Yet, our mobile devices assume that physical world ends at the boundaries of the screen.” With this effort, Google wants “to give mobile devices a human-scale understanding of space and motion.”

The work behind it isn’t brand new, though: Google’s team has spent the past year working with universities and research labs around the world, seeking to knit together various threads of research into robotics and “computer vision.” (Partners have included the University of Minnesota, the Open Source Robotics Foundation, George Washington University, and others.)

In theory, developers could use ultra-portable 3D mapping to create better maps, visualizations, and games. (“What if you could search for a product and see where the exact shelf is located in a super-store?” Google’s website asks at one point.) The bigger question is what Google intends to do with the technology if it proves effective. Google Maps with super-detailed interiors, anyone?

Images: Google
