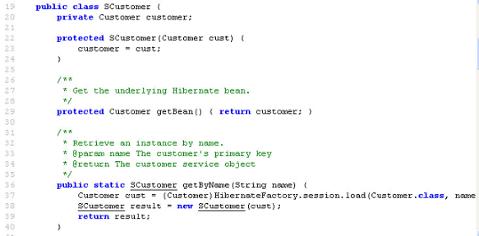

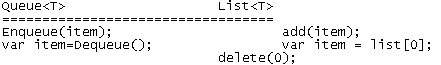

In .NET, it's quite common to store data in a generic List – a List<T> where T is some type such as an int or a class. In addition to its standard uses, a generic list can be implemented as a generic queue in place of a .NET Queue<T>, since a Queue<T> is just a specialized form of List<T> in which items are only added to the end of the list (Enqueue) and taken from the front of the list (Dequeue). Here’s how you add an item to the end and remove from the front with each:  Generally there's no great reason to use a queue instead of a list, even when you only need the functionality of a queue, though Queues is a little safer as it lacks all the methods that List provides. However, queues are only for scenarios in which you remove items in the order they were added -- you shouldn't use them in cases where you want to remove an item from the middle.

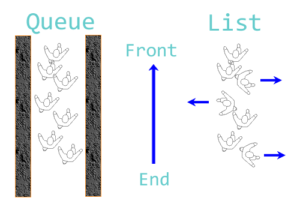

Generally there's no great reason to use a queue instead of a list, even when you only need the functionality of a queue, though Queues is a little safer as it lacks all the methods that List provides. However, queues are only for scenarios in which you remove items in the order they were added -- you shouldn't use them in cases where you want to remove an item from the middle.  Another way to think of it: A queue is like people walking down a narrow alley -- they can only enter at one end and leave at the other. A list is like walking in a field where you are unconstrained and can walk in any direction.

Another way to think of it: A queue is like people walking down a narrow alley -- they can only enter at one end and leave at the other. A list is like walking in a field where you are unconstrained and can walk in any direction.

What About Threading?

In a non-threaded situation there's not much difference between using a queue and using a list to simulate a queue, but when it comes to a multi-threaded case that changes. Unless declared statically, a List or a Queue is not thread safe. The reason is that a List object may be in the middle of being modified in one thread when another thread tries to read it. It may work successfully 90 percent of the time, but you'll get inconsistencies or exceptions. The one thing you can say about non-safe thread code in threaded applications is that bugs are guaranteed to strike eventually. Don’t risk it. .NET 4 introduced a new namespace, System.Collections.Concurrent, which enormously simplifies the issue of using collections in multithreaded applications. Before I go into details, here's one use case that worked well.

Use Case: Logging

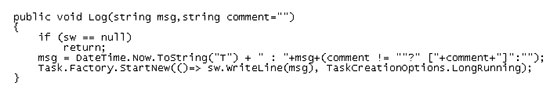

I needed a very simple logging system for a mobile app I wrote and ended up calling a method that output a text message to a StreamWriter. To keep it fast and, in particular, non-blocking, I had the log method call it via the Task Parallels library using Task.Factory.StartNew(..).  This works (sw is a statically declared StreamWriter) but it's not the best code as the StreamWriter is kept open through the app's lifetime. If the log messages are still in memory, a crash could lose the last few messages or corrupt the stream. Crashes in apps are one of the reasons you need logging in the first place, so losing messages is not an option. To address this, I did a rewrite using a ConcurrentQueue<T>, one of the new classes in the System.Collections.Concurrent namespace. ConcurrentQueue<T> is like Queue<T> but it’s guaranteed thread safe, automatically handling blocking, and optimized for maximum efficiency. The new log method adds the msg string to a concurrent queue of strings. A timer that runs every couple of seconds calls DumpLog() to append the queue’s contents to a text file, removing each item from the front of the queue. The new version is below:

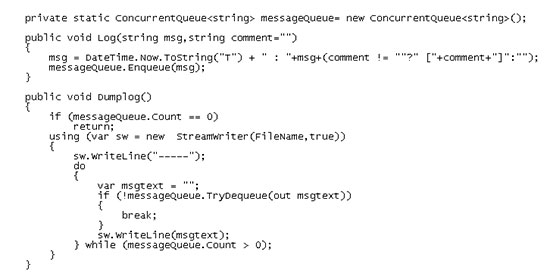

This works (sw is a statically declared StreamWriter) but it's not the best code as the StreamWriter is kept open through the app's lifetime. If the log messages are still in memory, a crash could lose the last few messages or corrupt the stream. Crashes in apps are one of the reasons you need logging in the first place, so losing messages is not an option. To address this, I did a rewrite using a ConcurrentQueue<T>, one of the new classes in the System.Collections.Concurrent namespace. ConcurrentQueue<T> is like Queue<T> but it’s guaranteed thread safe, automatically handling blocking, and optimized for maximum efficiency. The new log method adds the msg string to a concurrent queue of strings. A timer that runs every couple of seconds calls DumpLog() to append the queue’s contents to a text file, removing each item from the front of the queue. The new version is below:  Here the StreamWriter is only open while the contents of the queue are being appended to the file. Note that TryDequeue returns false and exits DumpLog if it can't remove the first item.

Here the StreamWriter is only open while the contents of the queue are being appended to the file. Note that TryDequeue returns false and exits DumpLog if it can't remove the first item.

Why Should You Use This?

Writing robust multithreaded code is hard but using these thread safe collections makes it a lot easier. In mobile apps in particular, unhandled exceptions will usually terminate the app, so anything that increases robustness is worth it. However, there is no thread safe equivalent for lists, so when it comes to concurrency, the ability to use a queue is significant.