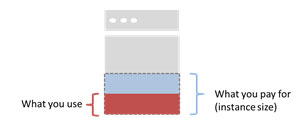

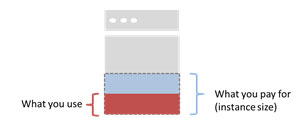

Even in the Cloud, You're Oversubscribed

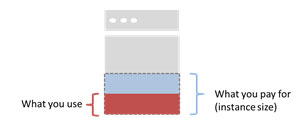

Ever since Nicholas Carr wrote *The Big Switch* and popularized the concept of utility computing, the industry has been enamored with the idea. "Pay for what you use, and nothing more" is the current mantra and mindset, and the entire industry is moving inexorably toward such a model. Except the model's not there, and it may never be. A true utility model based on consumption isn't available for most infrastructure resources, simply because the systems to track usage as fine-grained as memory and CPU cycles for billing don't exist. Oh, the data is there. If you've examined the output from `top` on a Linux box or taken a close look at Task Manager in Windows, you'll find it. But billing systems aren't collecting CPU and memory usage on a per-process or per-application basis.

In the cloud, the only resources truly billed on a usage basis are bandwidth and storage. Perhaps that's due to the maturity of network and storage infrastructure, honed over years of usage-based policy enforcement, security and metering. Those capabilities were easily adapted to per-usage billing on a utility model. Compute resources, on the other hand, are not so capable, not even in the cloud, where pay-per-use is the song we're taught to dance to. When you provision resources for an application, you're presented with a choice of instances of varying sizes, each with a pre-allocated set of compute resources. You're faced with an unpalatable set of choices about how to provision and manage future capacity needs. Do you provision minimally or for expected maximums (oversubscribe)? Do you rely on scaling out via auto-scaling (which is automatic only at runtime; configuring it is still highly manual) or on scaling up? It's a telling choice, because you're going to be charged for the compute whether you use it or not. While the instance is running, all the resources provisioned to that virtual instance are yours, bought and paid for. If you only use 25 percent, well, that's too bad. You're paying for 100 percent.

Now, from a certain point of view you are paying for what you use. After all, your virtual instance is what you're paying for, and you're using it and the resources allocated to it. No one else can use them while your instance is active. Compute isn't shared the way the network is; the system allocates it to a specific process. We could easily get into the weeds explaining heaps and memory allocation schemes at the system level, but the result is what matters: resources allocated to your virtual machine are yours and yours alone. Even if your application isn't using them at the moment, you are, by virtue of launching that virtual machine, and that's what you're paying for.

You're paying for access to a given set of resources. Oversubscription, in other words, is the order of the day. Cloud or traditional, it doesn't matter what deployment model is being used: you're oversubscribing the compute available to your application because that's the way the systems work today, and the way they might always work, given the isolation and security (the basics of multi-tenancy) required in a shared environment like cloud.
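The gap between "allocated" and "used" can be made concrete with a little arithmetic. The following is a minimal Python sketch, not any provider's billing logic: it assumes a hypothetical flat hourly rate (`HOURLY_RATE`) and contrasts today's allocation-based bill with a hypothetical usage-based one driven by per-process CPU time from the standard library's `time.process_time()`, the same kind of figure `top` displays. All function names and the toy workload are illustrative.

```python
import time
from typing import Callable

HOURLY_RATE = 0.20  # hypothetical price (dollars/hour) for one instance size
HOURS = 720         # roughly one month of continuous running


def allocation_bill(rate: float, hours: float) -> float:
    """Today's model: every allocated resource is billed while the instance runs."""
    return rate * hours


def usage_bill(rate: float, hours: float, utilization: float) -> float:
    """Hypothetical utility model: bill only the fraction of compute consumed."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0.0 and 1.0")
    return rate * hours * utilization


def measure_utilization(work: Callable[[], None]) -> float:
    """Fraction of one CPU this process used while `work` ran (CPU time / wall time).

    time.process_time() returns this process's own user + system CPU time,
    i.e. per-process usage data that the operating system already tracks.
    """
    wall_start = time.perf_counter()
    cpu_start = time.process_time()
    work()
    cpu = time.process_time() - cpu_start
    wall = time.perf_counter() - wall_start
    # Cap at 1.0: multithreaded work can exceed one CPU, and the two clocks
    # have different resolutions.
    return min(cpu / wall, 1.0) if wall > 0 else 0.0


def mostly_idle() -> None:
    """A toy workload: a short burst of computation followed by idle waiting."""
    deadline = time.perf_counter() + 0.05
    while time.perf_counter() < deadline:
        pass  # busy: consumes CPU
    time.sleep(0.15)  # idle: the instance is still allocated, but no CPU is used


if __name__ == "__main__":
    u = measure_utilization(mostly_idle)
    print(f"measured utilization:  {u:.0%}")
    print(f"allocation-based bill: ${allocation_bill(HOURLY_RATE, HOURS):.2f}")
    print(f"usage-based bill:      ${usage_bill(HOURLY_RATE, HOURS, u):.2f}")
```

With the toy workload, measured utilization should land somewhere near 25 percent (the exact figure varies by machine), so the usage-based figure comes out at roughly a quarter of the $144.00 allocation-based one. A real utility model would need to collect figures like this, along with memory, continuously and per process or application, which is exactly the collection billing systems don't do today.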
