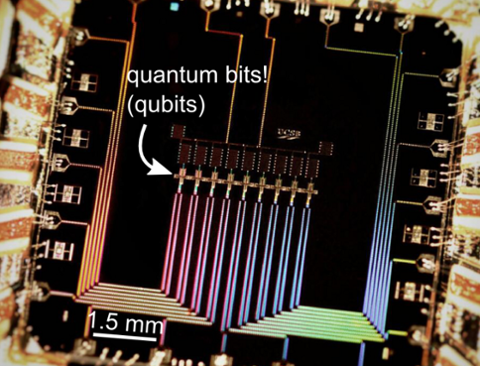

Study Confirms Quantum Computers are Actually Quantum

Confirming anything whose defining characteristic is uncertainty is obviously difficult, even when the question is simply whether a computer sold two years ago works the way it's supposed to. Confirming that the first quantum computer developed and sold for commercial use actually relies on specific quantum phenomena to perform calculations is a pretty complicated matter, especially when one of the phenomena in question involves finding the simplest solution to a problem based on changes in probability.

In theory, quantum computers should be exponentially more powerful than standard computers for certain kinds of problems, because of the far larger number of variables they can consider at once. In standard computing, the smallest piece of information is the bit, which can represent either a one or a zero, but must be one or the other. In quantum computing, the smallest unit of information is the quantum bit (qubit), which can also represent either a one or a zero, but can also exist in a superposition of both at the same time.

Manufacturing processors capable of handling a significant number of qubits in the same calculation has proven difficult for most would-be quantum-processor manufacturers. D-Wave's presentation of its chip as a working 128-qubit processor was a major breakthrough, but competitors, IT analysts and specialists in quantum mechanics have raised questions about how D-Wave's secret process actually works.
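To make the bit-versus-qubit distinction a little more concrete, here is a minimal Python sketch. It is an illustration of the textbook model only and says nothing about how D-Wave's chip works internally; the function names `measurement_probabilities` and `amplitude_count` are made up for this example. It treats a single qubit as a pair of amplitudes whose squared magnitudes give the odds of reading a zero or a one, and it counts how many amplitudes a general description of n qubits needs.

```python
import math


def measurement_probabilities(alpha, beta):
    """Return (P(0), P(1)) for a qubit in the state alpha|0> + beta|1>.

    Hypothetical helper for illustration; amplitudes may be real or complex.
    """
    norm = abs(alpha) ** 2 + abs(beta) ** 2
    if not math.isclose(norm, 1.0):
        raise ValueError("amplitudes must satisfy |alpha|^2 + |beta|^2 = 1")
    return abs(alpha) ** 2, abs(beta) ** 2


def amplitude_count(n_qubits):
    """Number of amplitudes in a general description of n qubits (2^n)."""
    return 2 ** n_qubits


# A classical bit is the special case where one amplitude is 1 and the other 0:
# it always reads as zero (or always as one).
classical_zero = measurement_probabilities(1, 0)

# An equal superposition reads as zero or one with even odds.
equal_mix = measurement_probabilities(1 / math.sqrt(2), 1 / math.sqrt(2))

# How many amplitudes a general 128-qubit state would take to write down.
amplitudes_128 = amplitude_count(128)
```

The count of amplitudes grows as 2 to the power of the number of qubits, which is where the hope for an exponential advantage comes from. That count alone does not guarantee a speedup on any particular problem, which is part of why claims about a specific machine are hard to confirm.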
