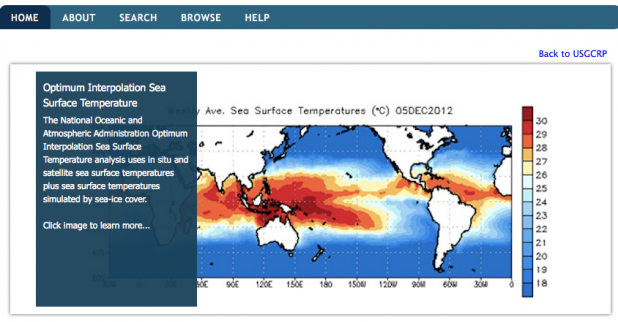

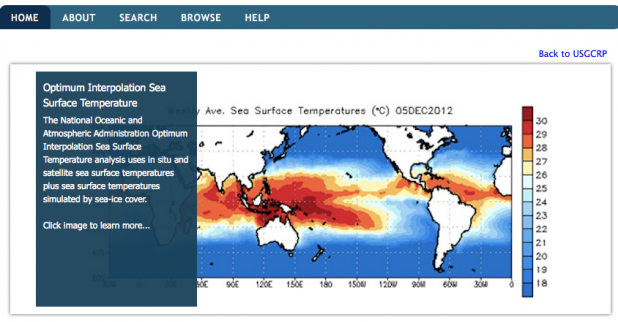

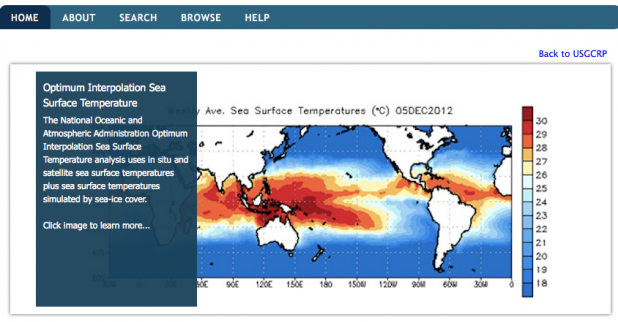

President Obama: U.S. Government Will Make Data More Open

MATCH incorporates metadata from six federal agencies' datasets. Image: U.S. Global Change Research Program (USGCRP)

President Barack Obama wants his government to open up more datasets to entrepreneurs and other folks who could use them. In remarks given May 9 at Applied Materials in Austin, Texas, the President described a handful of startups that use government data to save money for energy customers, help patients understand medical symptoms, and assist businesses in avoiding delays or complications due to weather.

“One of the things we’re doing to fuel more inventiveness like this, to fuel more private sector innovation and discovery, is to make the vast amounts of America’s data open and easy to access for the first time in history,” Obama said. “So talented entrepreneurs are doing some pretty amazing things with data that’s already being collected by government.” In light of that, he continued, “I’m announcing that we’re making even more government data available, and we’re making it easier for people to find and to use.”

That same day, the White House announced that Obama had signed an Executive Order requiring that “data generated by the government be made available in open, machine-readable formats, while appropriately safeguarding privacy, confidentiality, and security.” Paired with the Executive Order is a new Open Data Policy (PDF) released by the Office of Management and Budget and the Office of Science and Technology Policy, and designed to institutionalize that data transparency.

National Security Systems are, of course, exempt from the policy. The policy document also offers a bit of wiggle room for those agencies not involved in national security: “Agencies should exercise judgment before publicly distributing data residing in an existing system by weighing the value of openness against the cost of making those data public.” Agencies must also take all appropriate steps to safeguard personal details disclosed in that data, in accordance with the Privacy Act of 1974 and other existing requirements.

The Open Data Policy doesn’t ask for specific datasets to be exposed to public scrutiny; instead, it prods federal CIOs, CTOs and other technology-related officials to shape their respective agencies in ways that make data more open.

As part of this new openness, the White House also announced that the U.S. Global Change Research Program (USGCRP) is launching an online tool designed to “accelerate research relating to climate change and human health.” This tool, known as the Metadata Access Tool for Climate and Health (MATCH), incorporates metadata from 9,000 health, environment, and climate-science datasets in the possession of six federal agencies.

Obama isn’t the first politician to advocate for a government more forthcoming with its data. Former San Francisco mayor Gavin Newsom’s recent nonfiction book “Citizenville” proposes that governments open up their datasets to third-party developers and programmers, who can transform all that raw information into software and apps that serve civic functions. Others have made similar arguments, all of which certainly make sense on the surface: What could possibly go wrong with injecting a little Silicon Valley ingenuity into the stolid bureaucracy of governing a city or nation?

According to some critics, the answer to that question is: a lot. “One of the main reasons why governments choose not to offload certain services to the private sector is not because they think they can do a better job at innovation or efficiency,” Evgeny Morozov wrote in a recent essay for The Baffler, “but because other considerations—like fairness and equity of access—come into play.”

In theory, a federal initiative such as the Open Data Policy is kept at least somewhat in check by preexisting regulations. But implementation is another thing entirely, and while opening government datasets could well encourage entrepreneurial innovation, there’s always the question of whether such openness will result in unexpected blowback.
