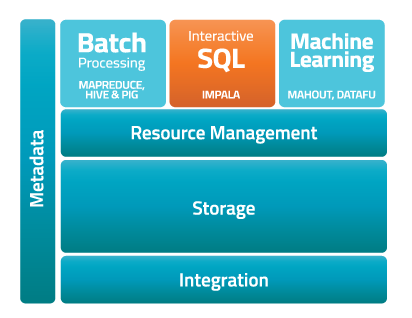

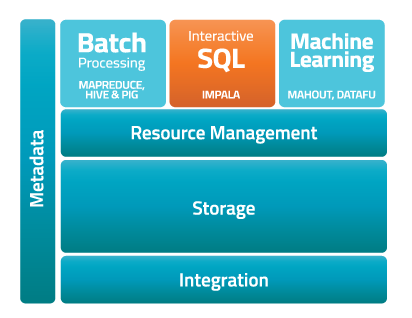

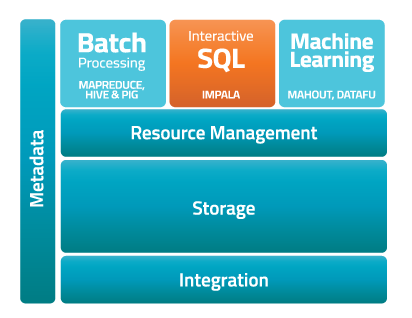

Cloudera’s Impala Delivers SQL-on-Hadoop

Impala operates within the Hadoop framework without the need for specialized systems or proprietary formats.

Cloudera’s Impala, its open-source SQL query engine for data stored in HDFS (Hadoop Distributed File System) and HBase, has reached general availability. Cloudera first released Impala as a public beta in October 2012 and used the intervening months to refine the platform for better real-world performance. At the time of the public beta release, Cloudera claimed that Impala would process queries 10 to 30 times faster than Hive/MapReduce. The framework supports a broad range of standard file and data formats, which could spare companies from having to filter their data through a proprietary format or system as part of processing.

In its press materials, Cloudera refers to that processing speed as “real time.” When it comes to data analytics, however, that term is rather nebulous. In an interview last fall with SlashBI, Cloudera executive Doug Cutting, who co-wrote the Hadoop framework, suggested that “real time” meant: “It’s when you sit and wait for it to finish, as opposed to going for a cup of coffee or even letting it run overnight.”

Cloudera is packaging Impala as the engine under the hood of Cloudera Enterprise RTQ, which (as a holistic platform) layers in management automation and technical support.

Apache Hadoop has evolved into a favorite among organizations that need to run intensive data applications across large hardware clusters. Research firms have attributed much of the interest in the framework to the flexibility of its open-source underpinnings. Developers have used Hadoop as a jumping-off point for the creation of platforms such as HBase, a non-relational distributed database modeled on Google’s Bigtable and run atop HDFS.

Given the interest in Hadoop, it’s unlikely that its evolution will slow anytime soon, and that means the platforms based on it will only speed up in years to come. When asked if Hadoop had any limits as a data processing system, Cutting responded: “Nothing that couldn’t be worked around.” However, some companies with truly epic data needs have explored building their own frameworks to handle the load, most notably Facebook with Corona.

Image: Cloudera
