The federal government realizes the importance of Big Data. The White House officially declared the storage and analysis of massive datasets a

“big deal,” and various agencies have their own data plans in the works. But it still has a long road ahead if it wants to actually become widely proficient in data analytics, according to a new survey created by the

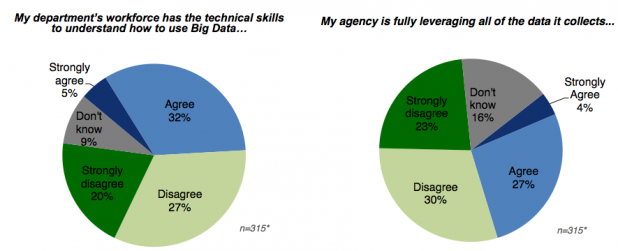

Government Business Council and funded by consulting firm Booz Allen Hamilton. That survey, conducted between Jan. 22 and Feb. 1 of this year, asked 313 federal managers from 27 agencies about their Big Data implementations and strategies. (Roughly two-thirds of respondents were ranked anywhere from GS-11 to Senior Executive Service, or equivalent grade levels.) “Federal leadership has emphasized leveraging Big Data to enhance agency operations,” read the survey’s executive summary. “However, managers are undecided if agencies are taking the appropriate steps to do so.” Roughly 37 percent of those surveyed felt their agency was taking those steps; another 18 percent simply didn’t know if any such effort was underway. “Federal managers also show low levels of data proficiency, and feel the federal workforce is similarly ill-equipped to make use of Big Data,” the summary added. “Just 18 percent of managers feel they have full professional proficiency in understanding large data sets, while 54 percent feel their agency’s workforce does not have the technical skills to understand how to use Big Data.” Because of that, just over a third of survey respondents felt their agency was actually “leveraging” all the data collected in-house—much less than the 53 percent who felt their agency was unequal to that task. Around 47 percent of respondents either “disagreed” or “strongly disagreed” with the statement, “My department’s workforce has the technical skills to understand how to use Big Data.”

Challenges

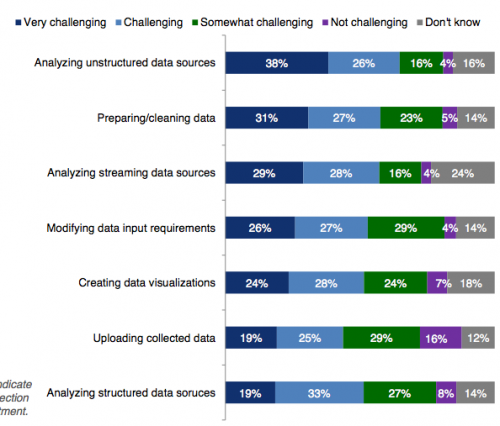

Only four percent of respondents indicated their agency was hiring new data scientists to actually analyze the datasets on-hand. Around 18 percent of managers said they had “full proficiency” with large data sets, while 33 percent suggested “elementary proficiency”; compare that with the 35 percent who believed they had “limited proficiency,” and the 14 percent who had no practical efficiency. Of the challenges facing agencies attempting to implement some sort of Big Data program, around 61 percent of respondents indicated that lack of adequate resources was the biggest; lack of data visibility came in second at 55 percent. Some 50 percent of respondents felt that their agency’s budget priorities did not support their data initiatives; almost as many—49 percent—felt that “technological barriers” prevented them from accessing data, while 43 percent indicated security concerns as the biggest barrier. Another 38 percent believed that “cultural barriers to accessing data” was the primary challenge. Breaking things down still further, significant majorities of respondents felt that everything from “analyzing unstructured data sources” to “uploading collected data” presented a challenge:

The survey included a few comments from Joshua Sullivan, a Booz Allen Hamilton vice president, about what agencies can do to mitigate some of these challenges. First, he suggested, agencies need to assess whether their workforces actually have the right skills to store and analyze data. After that, they need to list the things they want to accomplish with their data-analytics infrastructure. Third, agencies need to set up a plan to implement a Big Data solution in incremental steps, as much of the current technology was designed for gradual build-out.

In any case, if one takes this survey at face value, the government clearly has some distance to cover if it wants to effectively use Big Data.

Images: Government Business Council