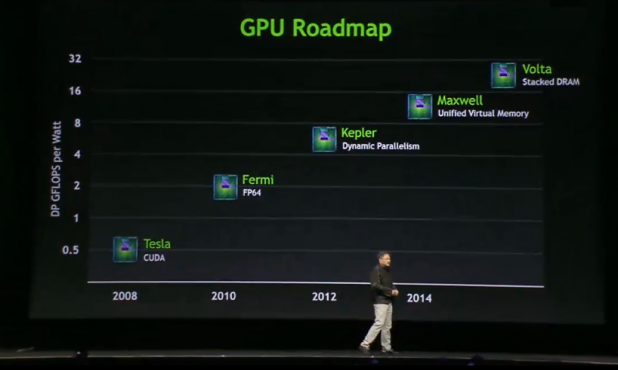

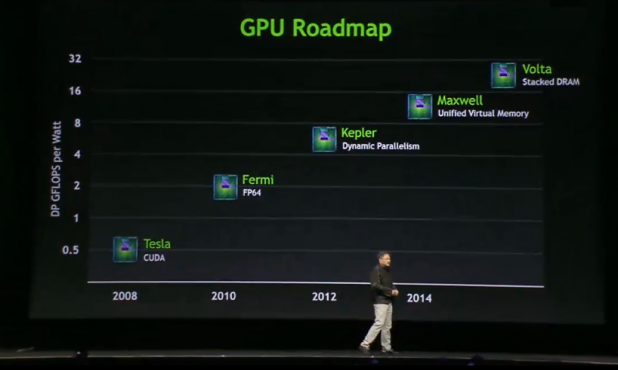

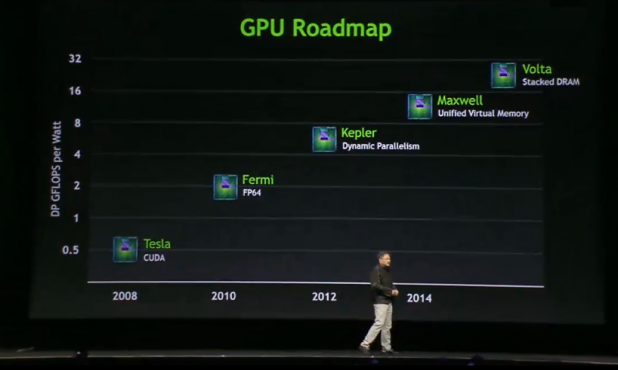

Nvidia Launches VCA Appliance; Tips Maxwell, Volta GPUs

[Image caption: Nvidia has it all diagrammed out.]

Nvidia continued its march into the enterprise hardware space, announcing its first integrated system for "remote graphics": the GRID VCA. Nvidia opened its GPU Technology Conference in San Jose with a demonstration of its GPUs' enhanced graphics and computational performance, but saved its product announcement until the end of a two-hour presentation.

The GRID Visual Computing Appliance will be 4U in height and include a pair of 16-thread Xeon processors, plus up to 384 GB of system memory. It will include up to eight of Nvidia's GeForce Grid GPU boards, each containing two of Nvidia's most advanced "Kepler" graphics units. Nvidia will charge $24,900 for a GRID VCA with 8 Kepler GPUs, 16 CPU threads, and 192 GB of system memory; the "Max" configuration will be priced at $39,900, with 16 Kepler GPUs, 32 CPU threads, and 384 GB of memory. The base model will also require an additional $2,400 per year for a software license, while the "Max" model will require $4,800 per year; both support unlimited devices.

The GRID VCA is the evolution of a corporate strategy that Nvidia began in 2006, when it started development on CUDA, then a language for tapping into the inherent parallelism within the graphics processor. Nvidia has since implemented CUDA in three successive generations of its graphics chips: "Tesla," "Fermi," and last year's "Kepler." Nvidia announced March 19 that its next two enterprise-class GPUs would be 2014's "Maxwell," which will integrate unified virtual memory, followed by "Volta," which will include stacked DRAM for even greater performance.

Last year, Nvidia announced the GeForce Grid processors, which pair two Kepler cores for a total of 3,072 CUDA cores and 8 GB of memory, all designed to improve cloud gaming and other applications.
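
To put the quoted pricing in perspective, the following is a minimal sketch of the multi-year cost of each configuration, using only the hardware and license prices reported above. The three-year horizon, the assumption that the license fee stays flat every year, and all names in the code (`VcaConfig`, `total_cost`, `cost_per_gpu`) are illustrative assumptions, not anything Nvidia has published; power, support, and discounts are ignored.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VcaConfig:
    """One GRID VCA configuration, using the prices quoted in the article."""
    name: str
    hardware_usd: int          # one-time purchase price
    license_usd_per_year: int  # annual software license
    kepler_gpus: int           # number of Kepler GPUs in the box


# Figures as reported in the article.
BASE = VcaConfig("base", 24_900, 2_400, 8)
MAX = VcaConfig("Max", 39_900, 4_800, 16)


def total_cost(config: VcaConfig, years: int) -> int:
    """Hardware plus license fees, assuming a flat license price each year."""
    if years < 0:
        raise ValueError("years must be non-negative")
    return config.hardware_usd + config.license_usd_per_year * years


def cost_per_gpu(config: VcaConfig, years: int) -> float:
    """Total cost over the period divided by the number of Kepler GPUs."""
    return total_cost(config, years) / config.kepler_gpus


if __name__ == "__main__":
    years = 3  # hypothetical ownership period, chosen only for illustration
    for cfg in (BASE, MAX):
        print(f"{cfg.name}: ${total_cost(cfg, years):,} total, "
              f"${cost_per_gpu(cfg, years):,.2f} per GPU over {years} years")
```

Under these assumptions, three years of ownership comes to $32,100 for the base model and $54,300 for the "Max" model, or roughly $4,012 and $3,394 per Kepler GPU respectively.
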

Jen-Hsun Huang, the chief executive of Nvidia, said that the Grid chips had been built into servers from Microsoft, Citrix, VMware, Dell, Cisco, IBM and HP, with 75 large-scale trials in all. In January, Nvidia announced the Nvidia Grid, a server platform that scales up to 24 racks, with 20 Grid servers per rack. The Grid contains 240 Nvidia GPUs for a total of 200 teraflops, the equivalent of 700 Microsoft Xbox 360 game consoles.

The idea, Huang said, was to allow workers to work "remotely" even inside the office, tapping into the power of backend Nvidia hardware instead of trying to perform the tasks locally at a PC or workstation. "It made more sense to store the data on the server, copy it to the client, store it in swap space locally... you process it locally," he added. "When you're done with the result you save it back to the file server. You have a copy of that data, I have a copy of that data, we all have a copy of that data. It's the antithesis of staying in sync." Today it takes customers "literally 24 hours" just to copy the data from site to site: "And by the time they copy it back, the team locally has already changed the data."

Working with the GRID VCA, users can tap into virtual applications that don't even run on the client platform. The GRID VCA, which ships with its own hypervisor, supports up to 156 virtual machines. Huang invited executives from SolidWorks on stage, as well as DawnRunner, which uses virtualized versions of Adobe and Autodesk software for production work. In several examples, engineers demonstrated how the system supports real-time rendering.
