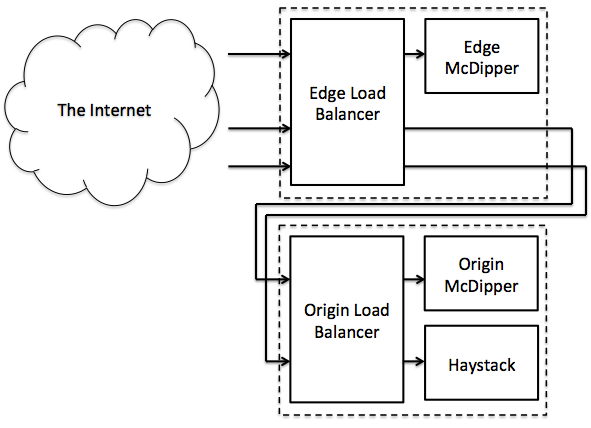

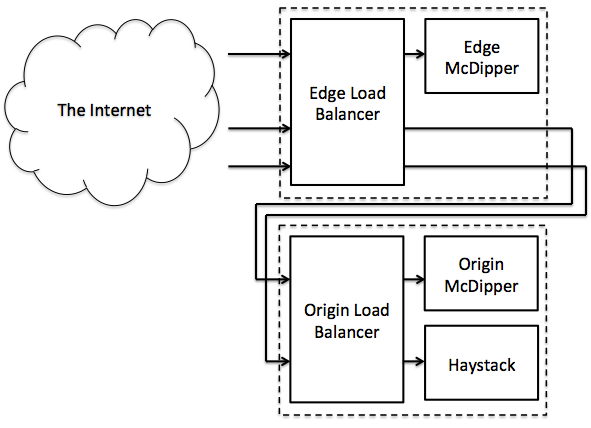

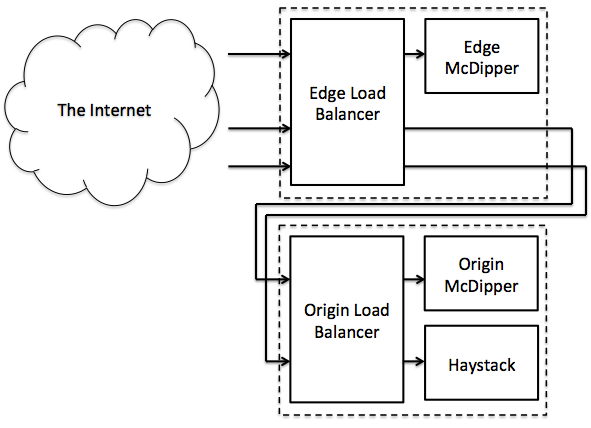

Facebook's McDipper Moves Cache From RAM to Flash

[Figure: Facebook's McDipper architecture.]

With more than 300 million photos uploaded to Facebook every day, storing them efficiently and cost-effectively is critical. To that end, Facebook has said publicly that it has taken the Memcached caching system, which typically runs in high-performance RAM, and moved it onto slower flash memory. It does so with McDipper, a flash-based cache server that remains compatible with Memcached. After a year of successful deployment, Facebook is finally talking about its experiences with the technology.

Since deploying McDipper to several large-footprint, low-request-rate pools, Facebook has reduced the total number of deployed servers in some pools by as much as 90 percent, while still delivering more than 90 percent of get responses with sub-millisecond latencies. And how effective is it, performance-wise? "We serve over 150 Gb/s from McDipper forward caches in our CDN," Facebook’s team wrote in a corporate blog posting. "To put this number in perspective, it's about one Library of Congress (10 TB) every 10 minutes."

Some of the Web’s largest destinations, including Reddit and Wikipedia, have relied on Memcached, which was designed by Danga Interactive for LiveJournal. It caches objects and other data in a shared pool of RAM to speed up dynamic, database-driven sites.

Facebook stores its older photos in what it calls "cold storage." The company’s solution is faster than, say, Amazon's "Glacier" archival storage, but still comes with more latency than Facebook's customer-facing Web servers.
Jay Parikh, Facebook's vice president of infrastructure, detailed the process at the Open Compute Summit in February: a cold-storage client grabs a particular file and sends it to a staging service, where it’s broken up into chunks; from there, the staging service works with the storage-device service, which helps place those chunks on the appropriate raw storage nodes.

That’s where McDipper fits in, according to the Facebook team: there are currently two layers of McDipper caches between an end user querying the Facebook content delivery network and the company's Haystack software, which stores and indexes Facebook's photos. HTTP and HTTPS requests arriving at the world-facing load balancers within the points of presence (small server deployments located close to end users) are converted to memcache requests and issued to McDipper. Requests that miss go on to a similarly configured second layer of McDipper cache instances, located in or beside a set of "origin" load balancers. Should the origin cache also miss, the request is forwarded to Haystack, Facebook said.

All of the photos are stored in flash, with the associated hash bucket metadata in memory. "This configuration gives us the large sequential writes we need to minimize write amplification on the flash device and allows us to serve a photo fetch with one flash storage read and complete a photo store with a single write completed as part of a more efficient bulk operation," Facebook said.

Facebook also said it had to handle edge cases, such as a value being written twice in quick succession. Occasionally the older value would arrive last and overwrite the newer, correct one. To prevent this, Facebook implemented a delayed delete command, which prevents any new value from being set until the delete delay has expired.

Facebook maintains an open pointer into the flash device, sequentially writing new records.
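To make the storage layout described above more concrete, here is a minimal Python sketch of the general idea: values are appended to a sequential log, while a small in-memory index maps each key to its offset and length. It is not McDipper's code, which Facebook has not published. The class name `FlashLogCache`, the `flush_bytes` threshold, and the use of `io.BytesIO` as a stand-in for the flash device are all assumptions made for illustration.

```python
import io


class FlashLogCache:
    """Toy log-structured cache (illustrative only, not McDipper itself).

    Values live in an append-only "device"; a small in-memory index maps
    each key to the (offset, length) of its most recently flushed value.
    """

    def __init__(self, device=None, flush_bytes=64 * 1024):
        # io.BytesIO stands in for the flash device (an assumption).
        self.device = device if device is not None else io.BytesIO()
        self.flush_bytes = flush_bytes  # hypothetical bulk-write threshold
        self.index = {}        # key -> (offset, length) on the device
        self.pending = {}      # key -> value buffered for the next bulk write
        self.pending_size = 0
        self.write_ptr = 0     # the "open pointer": next append position

    def set(self, key, value):
        # Buffer the write; a later set for the same key replaces the earlier one.
        self.pending_size += len(value) - len(self.pending.get(key, b""))
        self.pending[key] = value
        if self.pending_size >= self.flush_bytes:
            self.flush()

    def flush(self):
        # Write every buffered value in one long sequential append.
        if not self.pending:
            return
        chunk = bytearray()
        new_entries = {}
        for key, value in self.pending.items():
            new_entries[key] = (self.write_ptr + len(chunk), len(value))
            chunk += value
        self.device.seek(self.write_ptr)
        self.device.write(chunk)
        self.write_ptr += len(chunk)
        self.index.update(new_entries)
        self.pending.clear()
        self.pending_size = 0

    def get(self, key):
        # Serve from the write buffer if present, else with a single device read.
        if key in self.pending:
            return self.pending[key]
        entry = self.index.get(key)
        if entry is None:
            return None
        offset, length = entry
        self.device.seek(offset)
        return self.device.read(length)
```

Because the index is held in memory, a `get` for a flushed key costs one read of the device, and a `set` completes as part of a bulk append. The sketch leaves out eviction and reclaiming the space used by overwritten records.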
When enough "dirty" records are collected, another long sequential write is kicked off. The resulting workload consists of long sequential writes and random reads, or gets.

Storing photos might not be as complex as collating them via the dedicated hardware powering Graph Search, but it's still an enormous problem. Unfortunately, Facebook engineers haven't said whether they'll open-source McDipper, so other Web companies will have to solve this problem on their own, at least for now.

Image: Facebook
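For readers who want to picture the two-layer lookup described earlier in the article, the following Python sketch shows one way such a fall-through could work: try the edge cache, then the origin cache, then the backing photo store. The `TieredCache` class, the plain dictionaries used as caches, and the fill-on-miss behavior are all assumptions for illustration; the article does not say how Facebook populates the caches after a miss.

```python
class TieredCache:
    """Illustrative two-layer cache in front of a backing store.

    `edge` and `origin` are dict-like caches; `backing_store` is any
    callable that returns the value for a key, or None if it is unknown.
    """

    def __init__(self, edge, origin, backing_store):
        self.edge = edge
        self.origin = origin
        self.backing_store = backing_store

    def get(self, key):
        """Return (value, tier), where tier names the layer that answered."""
        value = self.edge.get(key)
        if value is not None:
            return value, "edge"

        value = self.origin.get(key)
        if value is not None:
            # Assumption: populate the edge layer on the way back.
            self.edge[key] = value
            return value, "origin"

        value = self.backing_store(key)
        if value is not None:
            # Assumption: populate both cache layers after a full miss.
            self.origin[key] = value
            self.edge[key] = value
        return value, "backing"


if __name__ == "__main__":
    photos = {"photo:1": b"jpeg-bytes"}  # stand-in for the photo store
    cache = TieredCache({}, {}, photos.get)
    print(cache.get("photo:1"))  # first request falls through to the backing store
    print(cache.get("photo:1"))  # repeat request is answered by the edge layer
```

In this arrangement the backing store only sees requests that miss both layers, which is the point of placing caches in front of it.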
