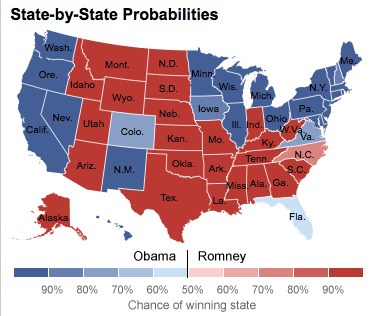

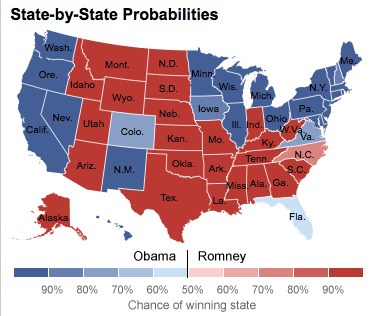

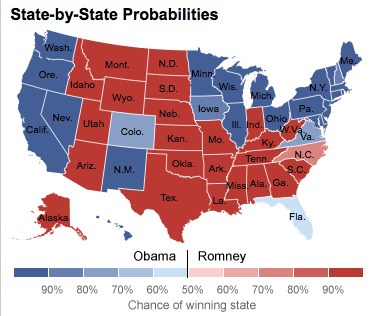

Will Statistical Analytics Change Politics?

Nate Silver used a complex formula and huge datasets to predict the Electoral College.

As the 2012 U.S. presidential race rumbled to its conclusion, something peculiar happened: a handful of high-profile pundits (and more than a few bloggers) began a heated debate over the accuracy of a predictive algorithm. The algorithm in question belonged to Nate Silver, who posted its outputs (presidential election predictions that turned out to be startlingly accurate) on his FiveThirtyEight blog for The New York Times.

In the days leading up to the election, Silver suggested that President Obama had an excellent chance of winning a second term. Various pundits pushed back, arguing that the race between Obama and Romney remained very much neck-and-neck. On election eve, Washington Post columnist (and former Republican speechwriter) Michael Gerson launched a missile at Silver and the use of statistical analysis in politics. “An election is not a mathematical equation; it is a nation making a decision,” he wrote. “People are weighing the priorities of their society and the quality of their leaders. Those views, at any given moment, can be roughly measured. But spreadsheets don’t add up to a political community.”

But Silver argued that, when you got down to it, the election really was an equation. “Our model basically averages the polls and then simulates the Electoral College,” he explained in a television interview. “I think I get a lot of grief because I frustrate narratives that are told by pundits and journalists that don’t have a lot of grounding in objective reality, frankly.” His model mixed together national and state polls, with an added dash of historical data from previous elections; by the morning of Nov. 6, it was telling him Obama had a 90.9 percent chance of victory. Silver ended up correctly calling the winner in all 50 states.
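
Silver's one-line description ("averages the polls and then simulates the Electoral College") can be illustrated with a small Monte Carlo sketch. This is not Silver's actual model, which is not described in detail here; the state names, electoral-vote counts, poll margins, and error sizes (`state_sd`, `national_sd`) below are all invented for illustration, and the function names are hypothetical.

```python
import random
from statistics import mean

# Hypothetical toy inputs: state -> (electoral votes, poll margins in points).
# A positive margin means candidate A leads. None of this is real polling data.
POLLS = {
    "State A": (10, [4.0, 6.0, 5.0]),
    "State B": (6, [-3.0, -1.0, -2.0]),
    "State C": (5, [1.0, -1.0, 0.5]),
}


def average_polls(polls):
    """Step 1: collapse each state's polls into a single average margin."""
    return {state: (ev, mean(margins)) for state, (ev, margins) in polls.items()}


def simulate_once(averages, rng, state_sd=3.0, national_sd=2.0):
    """Step 2: draw one plausible election; return candidate A's electoral votes.

    The error sizes are assumptions: one shared national polling error plus
    an independent error in each state, both drawn from normal distributions.
    """
    national_shift = rng.gauss(0.0, national_sd)
    votes = 0
    for ev, margin in averages.values():
        outcome = margin + national_shift + rng.gauss(0.0, state_sd)
        if outcome > 0:
            votes += ev
    return votes


def win_probability(polls, trials=10_000, seed=0):
    """Share of simulated elections in which candidate A wins a majority."""
    rng = random.Random(seed)  # fixed seed keeps the sketch reproducible
    averages = average_polls(polls)
    total_ev = sum(ev for ev, _ in averages.values())
    needed = total_ev // 2 + 1
    wins = sum(simulate_once(averages, rng) >= needed for _ in range(trials))
    return wins / trials


if __name__ == "__main__":
    print(f"Candidate A wins in {win_probability(POLLS):.1%} of simulations")
```

A figure like the article's 90.9 percent is the output of this kind of counting: the fraction of simulated elections a candidate wins, not a predicted vote share. The shared national error is a deliberate choice in the sketch, since it lets the polls be wrong in the same direction everywhere at once.
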
His prognostication transformed him into a bit of a celebrity, complete with appearances on various high-profile talk shows. But will his victory translate into many future Nate Silvers crunching mountains of data and offering razor-accurate results? Or will pundits continue to dominate the daily political discussion, with Silver and other statisticians more of a sideshow?

One supposes that if Silver or another statistician gets a future election wrong (if their math gave the losing candidate a 98.1 percent chance of winning, for example), then pundits and talking heads will leap on the obvious: that all algorithms are potentially fallible, especially depending on the quality of the inputs. But whether that ultimately weakens the prominence or the importance of statistical analysis in politics is another question entirely.

In a quest for votes and money, the Democratic and Republican campaigns erected enormous (and highly secretive) data-mining systems. Numerous publications detailed Obama’s team of data scientists, developers and engineers dedicated to digging through mountains of data, most of it collected from social networks and various online sources, in search of actionable insights. According to the Associated Press, Romney’s campaign used data tools to locate and tap into potential donors.

Now that the election’s over, there’ll likely be a good deal of postgame analysis about how much those data-mining efforts helped secure victory for the Obama side. All the number-crunching in the world, however, is useless unless a political party has the infrastructure to put any insights into real-world action.

Image: FiveThirtyEight
