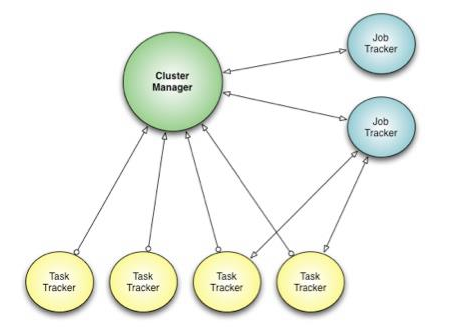

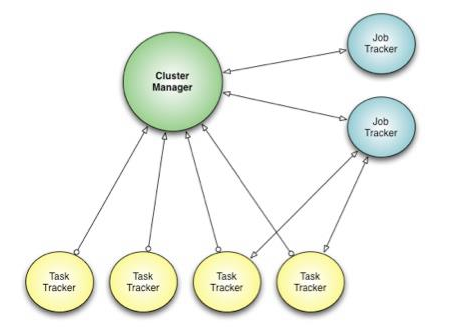

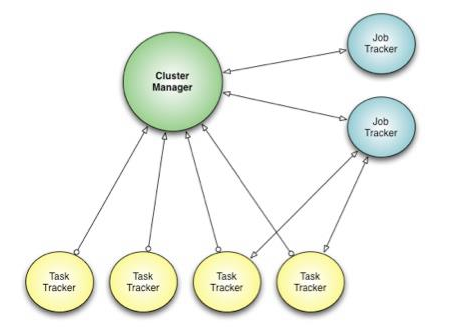

Facebook’s Corona: When Hadoop MapReduce Wasn’t Enough

Facebook’s engineers went to the whiteboard and designed a new scheduling framework named “Corona.”

Facebook’s engineers face a considerable challenge when it comes to managing the tidal wave of data flowing through the company’s infrastructure. Its data warehouse, which handles over half a petabyte of information each day, has expanded some 2500x in the past four years, and that growth isn’t going to end anytime soon.

Until early 2011, those engineers relied on a MapReduce implementation from Apache Hadoop as the foundation of Facebook’s data infrastructure. Hadoop, an open-source framework for running data applications on large hardware clusters, has become a favorite of not only Facebook, but also IBM and other large firms that wrestle with enormous amounts of data.

Still, despite Hadoop MapReduce’s ability to handle large datasets, Facebook’s scheduling framework (in which a large number of task trackers handle duties assigned by a job tracker) began to reach its limits. “At peak load, cluster utilization would drop precipitously due to scheduling overhead,” read a Nov. 8 posting on Facebook’s engineering blog. In addition, the job tracker required hard downtime during software upgrades.

“Hadoop MapReduce is also constrained by its static slot-based resource management model,” the posting added. “Rather than using a true resource management system, a MapReduce cluster is divided into a fixed number of map and reduce slots based on a static configuration, so slots are wasted anytime the cluster workload does not fit the static configuration. Furthermore, the slot-based model makes it hard for non-MapReduce applications to be scheduled appropriately.” Throw in pre-defined delays when scheduling tasks for jobs, and it was clear that Facebook needed to revamp its system.
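The slot waste described in the posting can be illustrated with a small sketch. It is not Hadoop or Corona code: the function names, the node size (8 CPUs, 16 GB), the 4 map / 4 reduce slot split, and the per-task requirements are all hypothetical numbers chosen for illustration. It compares how many tasks a single node can run under a static slot configuration versus a scheduler that looks at actual resource requirements.

```python
from dataclasses import dataclass


@dataclass
class Node:
    # Hypothetical node capacity, for illustration only.
    cpus: int
    memory_gb: int


def tasks_run_with_static_slots(pending_maps, pending_reduces, map_slots, reduce_slots):
    """Static model: map tasks may only use map slots, reduce tasks only reduce slots."""
    return min(pending_maps, map_slots) + min(pending_reduces, reduce_slots)


def tasks_run_by_resources(tasks, node):
    """Resource model: place each (cpus, memory_gb) task while capacity remains."""
    free_cpus, free_mem = node.cpus, node.memory_gb
    placed = 0
    for cpus, mem in tasks:
        if cpus <= free_cpus and mem <= free_mem:
            free_cpus -= cpus
            free_mem -= mem
            placed += 1
    return placed


# A map-heavy workload: 8 map tasks, no reduce tasks, each needing 1 CPU and 2 GB.
node = Node(cpus=8, memory_gb=16)
map_tasks = [(1, 2)] * 8

static_count = tasks_run_with_static_slots(
    pending_maps=8, pending_reduces=0, map_slots=4, reduce_slots=4
)
resource_count = tasks_run_by_resources(map_tasks, node)

print(static_count, resource_count)
```

With these assumed numbers, the static configuration leaves its four reduce slots idle while four map tasks wait, whereas the resource-based check fits all eight tasks on the same node.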
Requirements for the new platform included better scalability, lower latency, the ability to upgrade the software without interrupting computation, and “scheduling based on actual task resource requirements rather than a count of map and reduce tasks.” No sweat, right?

Facebook’s engineers went to the whiteboard and designed a new scheduling framework named “Corona.” It features a dedicated job tracker for each job, as well as a cluster manager that tracks the nodes in the cluster and the amount of free resources available. Because the actual job tracking is left to the job trackers, as opposed to the cluster manager, the latter can deal solely with scheduling decisions; that translates into simpler code (since each job tracker follows only one job) and more efficient cluster utilization.

“One major difference from our previous Hadoop MapReduce implementation is that Corona uses push-based, rather than pull-based, scheduling,” the posting continued. “After the cluster manager receives resource requests from the job tracker, it pushes the resource grants back to the job tracker. Also, once the job tracker gets resource grants, it creates tasks and then pushes these tasks to the task trackers for running. There is no periodic heartbeat involved in this scheduling, so the scheduling latency is minimized.”

Facebook’s engineers started by rolling Corona out to 500 nodes, then moving non-critical workloads to the Corona cluster. As the cluster grew to 1,000 nodes, they had to eliminate a bug in the cluster manager scheduler, a fix that proceeded “without much disruption because of the staged deployment.” After that, the team began shifting mission-critical workloads to Corona; by the middle of 2012, Facebook had it deployed “across all our production systems.”

Facebook claims that implementing Corona has translated into better cluster utilization, fairer scheduling, and lower job latency. Even as it adds new features to the platform, it has open-sourced the production version.
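The push-based flow quoted from the posting (job tracker requests resources, cluster manager pushes grants back, job tracker pushes tasks to task trackers) can be sketched in a few lines. This is a toy model under stated assumptions, not Corona's actual API: the class names mirror the roles in the article, but every method name (`request`, `on_grant`, `run`), the CPU-only resource model, and the node sizes are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class TaskTracker:
    # Hypothetical worker node that only counts free CPUs.
    name: str
    free_cpus: int
    running: list = field(default_factory=list)

    def run(self, task):
        # Tasks are pushed here by a job tracker; nothing is polled via heartbeat.
        self.running.append(task)


class ClusterManager:
    """Tracks nodes and free resources; makes scheduling decisions only."""

    def __init__(self, trackers):
        self.trackers = trackers

    def request(self, job_tracker, cpus_per_task, count):
        # Push one grant to the job tracker for every task that fits on a node.
        for tracker in self.trackers:
            while count > 0 and tracker.free_cpus >= cpus_per_task:
                tracker.free_cpus -= cpus_per_task
                count -= 1
                job_tracker.on_grant(tracker)
        return count  # number of requested tasks that could not be granted


class JobTracker:
    """One instance per job; it tracks only that job's tasks."""

    def __init__(self, job_id):
        self.job_id = job_id
        self.launched = 0

    def on_grant(self, tracker):
        # On receiving a grant, create a task and push it to the granted node.
        task = f"{self.job_id}-task-{self.launched}"
        self.launched += 1
        tracker.run(task)


trackers = [TaskTracker("node-a", free_cpus=4), TaskTracker("node-b", free_cpus=2)]
manager = ClusterManager(trackers)
job = JobTracker("job1")

# Ask for four 2-CPU tasks; the cluster only has room for three.
unmet = manager.request(job, cpus_per_task=2, count=4)
print(unmet, [t.running for t in trackers])
```

In this sketch the cluster manager never inspects task state, which reflects the division of labor the article describes: scheduling decisions in one place, per-job bookkeeping in each job tracker.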
While few companies face data issues on a Facebook scale, some of the lessons learned in building Corona could very well help other organizations.

Image: Facebook
