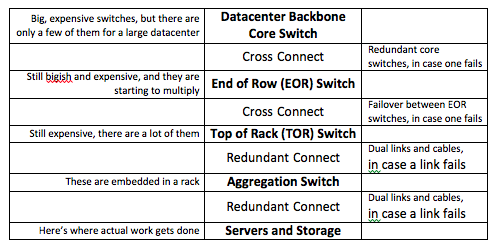

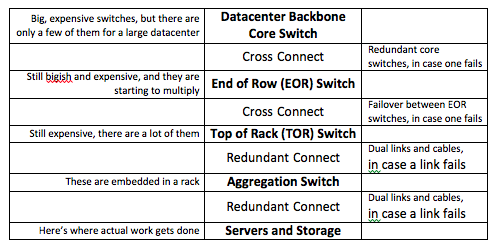

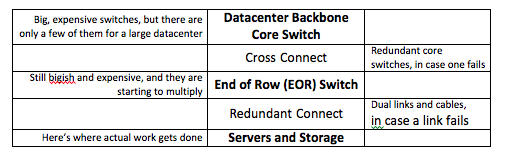

Software-defined networks have begun to dominate the news, with experts debating SDNs, server fabrics, and lots of virtualized stuff (machines, I/O, networks, etc.) with respect to new cloud and mega-datacenter build-outs. Which brings up a big question: what is so new and different about these new at-scale deployments? To begin, let’s look at a typical enterprise network “fat tree” hierarchy:

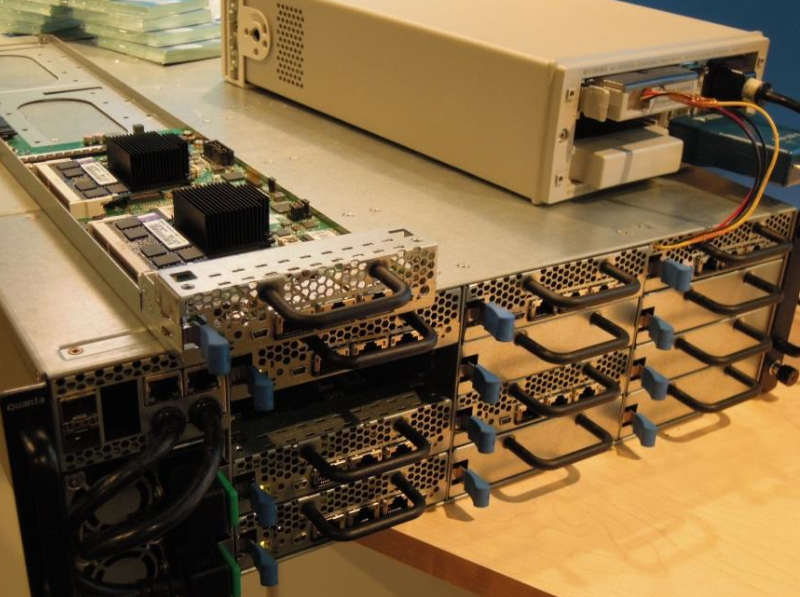

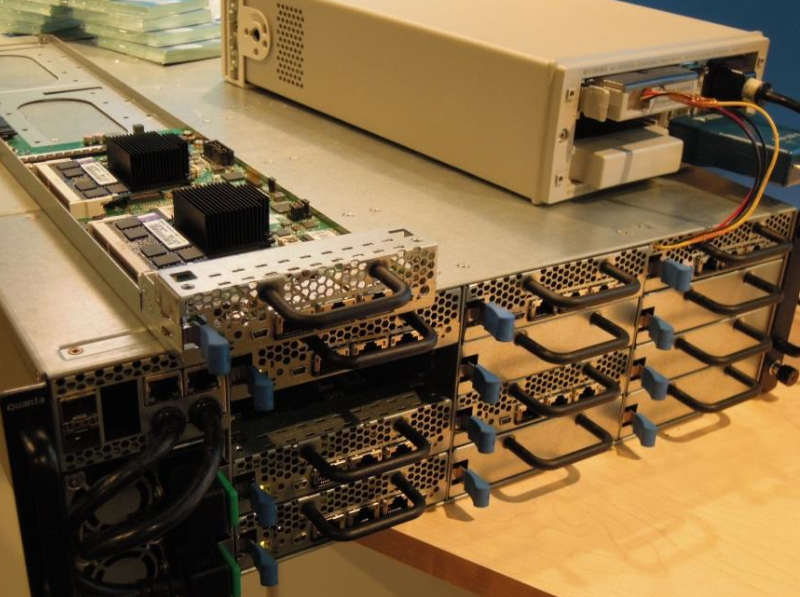

There’s a lot of redundancy built into this network architecture. Near the backbone, if a switch goes out of service or a network link is lost, there’s plenty of redundancy to keep the datacenter moving. As you move to the rack level, if a TOR [Top-of-Rack] switch fails, the rack it serves will be out of action until the TOR switch can be replaced. If an aggregation switch fails, then the servers under its span will be out of action until it can be replaced. It works. It is expensive. And all those switches do is move data and runtime images around.

With these new services-oriented, at-scale deployments it gets worse. The problem is that in a modern warehouse-sized datacenter, we’re stuffing more and more server nodes into each rack. That raises the question: what is a “server node” in today’s mega-datacenter context?

For a visual answer, the first photo is a Quanta processor sled sitting on their 4U cloud chassis (taken at the Intel Developer Forum earlier this year). The sled has two processors on it; you can see the big heat sink stacks above the two Intel Xeon processors and a memory card by each one. Each processor has a memory card and support logic associated with it. Just as important, the two systems are not connected to each other; this is not a 2S (socket) or 2P (processor) symmetric multiprocessor (SMP) blade. Each of these two processor complexes connects to the datacenter through its own Ethernet link. We call each of these independent processor complexes a server “node,” and so this card contains two nodes.

Doing the math, this chassis can host 24 server nodes in 4U of rack space, or 6 nodes per U. (One U is 1.75 inches of rack height.) Quanta is taking an incremental innovation approach to mega-datacenter infrastructure.

This Calxeda processor card (photo below) contains four server nodes: you can see the four Calxeda EnergyCore SoCs (System on Chip, containing ARM cores) and the memory associated with each processor. The difference between a processor and an SoC shows on the board: there is no other logic on the Calxeda card, because all of the logic to boot, manage and run a server node has been integrated onto one silicon chip.

This Boston Viridis chassis hosts 12 of these Calxeda EnergyCards in a 2U chassis, for 48 server nodes, or 24 nodes per U:

Just a few years ago, 1 node per U was state of the art. A node contained one or more single-core processor chips (typically 2 or 4 in an SMP configuration), a north bridge chip, a couple of south bridge chips, several memory sticks, et cetera. The two examples above cover 6 nodes per U with big honkin’ Xeon sockets and 24 nodes per U with Calxeda’s ARM-based SoCs. SeaMicro has implemented a server card with 6 Intel Atom processors on it; their 10U chassis hosts 64 of those cards for 384 nodes, or a little over 38 nodes per U. This is just the start. All of these systems have been shipping for less than a year.

The difference between the traditional Quanta chassis and the future direction of the Calxeda and SeaMicro chassis is in the network topologies they implement. If you look closely at the Quanta photo, you’ll see 3 x 1GbE (Gigabit Ethernet) ports on the front of each sled. That’s one per node plus a third, redundant port on each card in case one of the others fails. Across the chassis that’s one Ethernet port per node (24 cables), plus one redundant port per card (12 more cables), all of which costs power on the sled, cabling complexity, and a huge amount of in-rack switch capacity. The 24 nodes with dedicated Ethernet bandwidth represent 36 switch ports and 24Gbps of non-redundant Ethernet switch capacity, even though each node typically does not need anywhere near 1Gbps of dedicated bandwidth. This solution does not scale very well.

The Boston Viridis chassis has only two 10GbE ports (for redundancy) shared by all 48 nodes in the chassis. Two cables. It can talk directly to a TOR switch or, even better, an EOR [End-of-Row] switch. This is starting to scale well.
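For anyone who wants to check the arithmetic, here is a rough Python sketch of the density and cabling numbers quoted above. The function names are mine, the Quanta sled count of 12 is inferred from 24 nodes at two per sled, and the per-node bandwidth figure for the Viridis chassis assumes only one of its two 10GbE ports carries traffic while the other stands by. That last point is my reading of "for redundancy," not a vendor specification.

```python
def nodes_per_u(cards: int, nodes_per_card: int, rack_units: int) -> float:
    """Server nodes per rack unit for a single chassis."""
    return cards * nodes_per_card / rack_units


def dedicated_switch_ports(nodes: int, cards: int) -> int:
    """Quanta-style cabling: one port per node plus one redundant port per card."""
    return nodes + cards


# (cards, nodes per card, chassis height in U), using the figures in the article.
# Assumption: 12 Quanta sleds, inferred from 24 nodes at 2 nodes per sled.
CHASSIS = {
    "Quanta": (12, 2, 4),
    "Boston Viridis": (12, 4, 2),
    "SeaMicro": (64, 6, 10),
}

for name, (cards, per_card, height) in CHASSIS.items():
    total = cards * per_card
    density = nodes_per_u(cards, per_card, height)
    print(f"{name}: {total} nodes in {height}U = {density:.1f} nodes per U")

# Cabling comparison between the two chassis with known external port counts.
quanta_ports = dedicated_switch_ports(nodes=24, cards=12)
viridis_ports = 2

# Assumption: one active 10GbE uplink shared evenly by all 48 Viridis nodes.
viridis_share_gbps = 10 / 48

print(f"Quanta: {quanta_ports} switch ports and cables for 24 nodes")
print(f"Viridis: {viridis_ports} switch ports and cables for 48 nodes")
print(f"Viridis per-node share of one uplink: {viridis_share_gbps:.2f} Gbps")
```

An even split of the uplink is a simplification, since a fabric shares bandwidth on demand rather than carving it into fixed slices. It is still a useful yardstick against the 1Gbps that each Quanta node gets whether it needs it or not.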

Looking Ahead

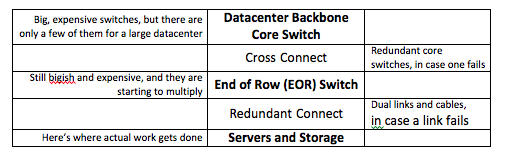

Calxeda, SeaMicro, and a new generation of in-rack network fabric architects are creating a high-bandwidth localized network topology connecting nodes with embedded switches or message passing logic–a “fabric”–within their chassis. They virtualize network and storage resources. Each node believes it has access to its own standard external switched Ethernet services. There’s proprietary goo involved in how each implements their network topologies and I/O virtualization, but to the datacenter each node looks no different than “normal” nodes from a connectivity and manageability viewpoint (although manageability was, and still is, an issue for traditional blades). The impact will be huge. Here’s one possible datacenter network topology endgame:

This is possibly a concern for Cisco and Juniper, who sold the switches we just deleted from the stack. A couple of months ago I looked at fabric investment and M&A. Cisco’s very recent purchase of vCider shows that it is starting to feel some heat. In a more recent article I discussed small cores vs. big cores and virtualization. Here’s where small cores and fabrics really start to make sense and it all comes together. The goal is to combine:

Right-sized hardware threads–the goal here is to match processor core frequency and performance to the expected performance demands of software threads. This may be different on a workload by workload basis, but mega-datacenters already buy systems optimized for specific workloads in volume.

Fabric topologies optimized for specific workload I/O demands. We are in the early days of fabric-based rack development. Not only have winners not been determined, but there will be many experiments in tuning chassis- and rack-level fabric architecture and performance for high-value workloads. SDN may be a help in figuring out some of those topologies, but at the point where a services-oriented datacenter has tuned a fabric topology for a given workload, it will be crafted into dedicated fabric designs.

Zero virtualization for some workloads, increasing to a lightweight “virtualize for manageability” capability–minimize burning hardware thread performance and power on non-workload OS overhead. This is where heavily virtualizing big, fast, hot cores breaks down. Remember that in a service-oriented architecture a node is not running random workloads like in a cloud deployment–there are entire racks running the same workload, all the time. They are not migrating virtual images around that portion of the network. They are flowing primarily workload data through that portion of the network, and it impacts both virtualization strategy and network architecture.

Insanely good SoC [system-on-a-chip] and chassis-level power management–for the same reason that datacenters leverage source code access into efficiencies of scale, these new fabric-based chassis control power use of their local fabric at levels of efficiency that rack switches can’t attain, even with SDNs and Energy-Efficient Ethernet. And the in-chassis I/O virtualization of network and storage resources is transparent to the OS, hypervisor, and workloads.

The result is increasingly higher thread capacity, in a very manageable power footprint, with a lot fewer cables running through the rack. It is simpler to install and maintain. It scales very well.

So, when you hear vendors talking about the flexibility of software-defined networks (SDN) at a rack level in services-oriented datacenters, those vendors are looking in the rearview mirror. Continuing to buy all of that exposed complexity and power consumption to gain a bit of temporary flexibility is a bad tradeoff. SDN has a place in the datacenter backbone and between datacenters, but at a rack level it is an admission that a vendor has no idea what a winning network topology should look like.
