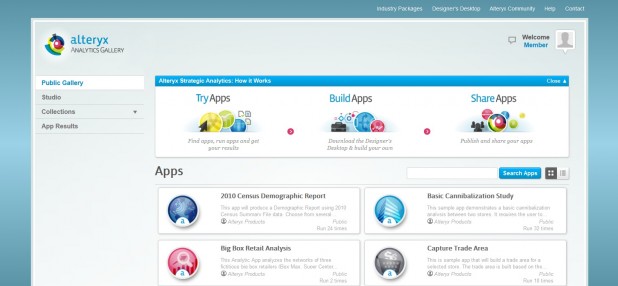

Alteryx Analytics Gallery offers 30 out-of-the-box applications accessible via the cloud.

Data management, especially when the data in question grows to epic proportions, remains a top goal of analysts and CIOs. To meet that need, some IT vendors have begun embedding tools that automate data management into their software offerings.

Today, Alteryx will launch version 8.0 of Alteryx Strategic Analysis. Company officials suggest that the new release will make Big Data more accessible to the average analyst in a way that doesn’t necessarily require the intervention of data scientists with advanced degrees and years of experience. At the core of this new release is the Alteryx Analytics Gallery, which provides 30 out-of-the-box applications accessible via the cloud. The end result, say company officials, is a major step towards the “consumerization of Big Data.”

Most organizations simply can’t afford, let alone find, the data scientists often needed to handle massive amounts of information. By adopting software with features that address data management issues, organizations can (at least in theory) achieve impressive returns on their Big Data investments without having to wait for a data scientist to become available or, once hired, to actually finish a project.

Analytics-application builders are keenly aware that, despite the business gains provided by their software, the market for these applications will remain relatively small without some built-in management capabilities. And while many of those vendors are moving forward with embedding data-management functions into their applications, the scope of those offerings varies wildly depending on the vendor.

ClickFox, for example, manages Big Data on behalf of customers who want to invoke its customer experience management application in the cloud.
According to Jeff Gossman, vice president of product management, the company provides a service overlay on top of datasets that makes all that information more accessible to business users. “ClickFox does all the heavy lifting,” Gossman said, “because the shortage of data scientists has become a bottleneck.”

Then there’s BIME, which developed an analytics application tightly coupled to a variety of Big Data services, including Google BigQuery.

Big-name vendors such as IBM and SAP give customers access to both on-premises and cloud options for analysis, with a bit more emphasis these days on the cloud. IBM, for example, offers an instance of IBM InfoSphere BigInsights, an implementation of Hadoop packaged with IBM analytics software that runs on its IBM SmartCloud Enterprise infrastructure-as-a-service offering.

Despite the importance of Hadoop to many companies’ data-crunching efforts, Anjul Bhambhri, vice president of Big Data for IBM, insists that not all Big Data “is created equal.” Hadoop faces some batch-oriented limitations, Bhambhri added, which means that IT organizations with a variety of data to manage need to employ a healthy mix of data-management solutions that are capable of processing large amounts of data in real time.

SAP, meanwhile, is pushing the adoption of cloud and on-premises analytics applications that leverage its High Performance Analytics Appliance (HANA) in-memory database technology. SAP argues that the need to identify strategic insights in real time is driving the rise of in-memory computing.

ScaleOut Software makes a similar case. The company just released ScaleOut Analytics Server, an in-memory data grid (IMDG) platform that includes built-in map/reduce functionality to process analytics queries 16 times faster than Hadoop. According to company CEO Dr. William Bain, the best part of this approach is that it supports traditional object-oriented approaches to building applications via an easily accessible API.
“We’re giving programmers access to data parallelism in a very natural way,” he said.

Opera Solutions offers a predictive analytics application that simply focuses on identifying patterns in Big Data. According to Shawn Blevins, executive vice president and general manager of sales, the Opera Solutions Signal Hub Suite includes a number of prebuilt models that effectively automate many of the tasks ordinarily performed by data scientists. “In our case the data is the application,” he said. “We’re not looking for logic, but rather patterns that match the models.”

Other approaches for tackling data management include Nodeable, with a streaming analytics engine that pre-processes Big Data in a way that makes it feasible to store in a compressed format on Amazon Web Services (AWS).

What’s really required, argues Fernando Lucini of Hewlett-Packard’s Autonomy unit, is a unified layer of management software for Big Data that is not only accessible by multiple analytics applications, but also capable of adapting rapidly to new information. HP Autonomy Intelligent Data Operating Layer (IDOL) software, says Lucini, is specifically designed to provide a federated way to manage data that automatically identifies patterns and creates associated models.

“The simple truth is that no data scientist can create an ontology that can keep pace with changes in the real world,” he said. “Organizations are going to have to start thinking outside the search box when it comes to dealing with Big Data.”

None of this is lost on HP rivals such as Oracle, IBM, SAP, EMC, Informatica and Software AG, all of which are racing to build comprehensive frameworks for managing Big Data.

Image: Alteryx
