There was a time, just a few years ago, when dinosaurs ruled the application processor space. If you wanted to run a user-mode application, there was only one real choice in processor architectures: you bought an x86-based system. Notebooks, desktops, workstations, and servers were all dominated by two vendors and what looked like one instruction set. But, as with most real-world stories, that’s not really the case. What looked like one instruction set never was, and still isn’t.

Over the next few years, a proliferation of core sizes, instruction set extensions, and heterogeneous compute offload in x86 and ARM processor lineups will fuel interesting new options to accelerate specific workloads. (While RISC processors are technically interesting, they account for under 3 percent of servers shipped and their share is still declining, according to IDC.) However, I’ll argue that datacenter-infrastructure software vendors have controlled, and will continue to control, the pace of deployment for any changes to processor architecture and instruction sets in both enterprise and cloud deployments.

What Is the x86 Instruction Set Architecture (ISA)?

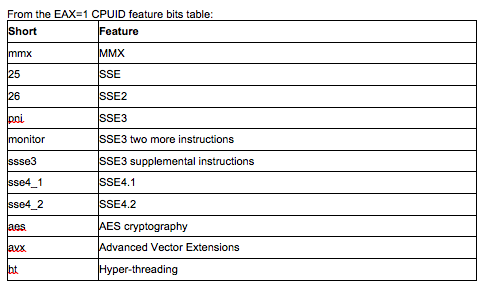

The core of the current x86 ISA was defined way back in the early 1990s with Intel’s Pentium P54C design. It incorporated the 486 integer ISA, the x87 floating point unit, and improvements enabled by a new (then) state-of-the-art superscalar design. Intel introduced

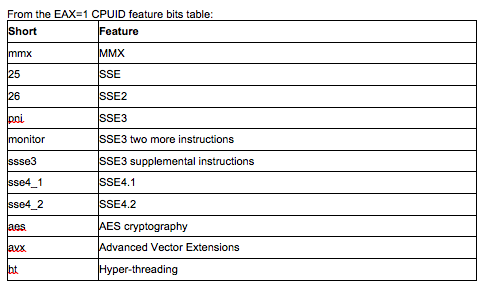

CPUID with the Pentium brand. CPUID provides a standard data structure for programmers to ask an x86 processor who built it, where it fits in that vendor’s product line, its performance attributes, and what features it contains. It contains everything a programmer needs to determine what an x86 processor is capable of, relative to other processors currently on the market and older legacy processors in the installed base. CPUID enabled software developers to keep up with an escalating war of additional instruction set packages between Intel and AMD. Without it, software developers would have adopted only a fraction of the performance that the new features enabled over the next 20 years.

This short table doesn’t include:

- AMD’s legacy 3DNow! instructions or the SSE4a extensions in its current Bulldozer cores (AMD’s best guess at SSE4.1)

- The different virtualization and power management ISAs created by both Intel and AMD

- Intel’s “Haswell” AVX2, TXT, EPT ISAs and new integer instructions

- Intel’s Xeon Phi vector and scalar ISAs.

The picture is further complicated because not all current processors from either vendor have a complete set of current ISAs:

- Intel’s Atom processors may or may not have virtualization and 64-bit ISAs; all lack SSE4.x and AVX.

- AMD is usually half a cycle behind Intel on new ISAs – SSE4a will convert to SSE4.1 and 4.2 support in upcoming releases and they will implement AVX just as Intel is fielding AVX2.
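Because feature sets vary this much from part to part, software typically tests individual CPUID feature bits before taking an optimized code path. Here is a minimal Python sketch of that decoding step for a handful of flags reported by CPUID leaf 1. The bit positions follow the documented leaf 1 feature-flag layout; the function name `decode_leaf1`, the flag spellings, and the sample register values are my own illustrative assumptions, not a dump from a real processor. Python cannot issue the CPUID instruction itself, so the sketch assumes the raw ECX and EDX values were obtained elsewhere (for example, from a small native helper).

```python
# Selected feature bits returned by CPUID leaf 1 (EAX=1).
# Keys are illustrative flag names; values are bit positions in the register.
EDX_BITS = {"fpu": 0, "mmx": 23, "sse": 25, "sse2": 26}
ECX_BITS = {
    "sse3": 0,
    "vmx": 5,      # Intel virtualization extensions
    "ssse3": 9,
    "sse4_1": 19,
    "sse4_2": 20,
    "aes": 25,
    "avx": 28,
}


def decode_leaf1(ecx, edx):
    """Return the set of known feature names whose bits are set."""
    features = {name for name, bit in EDX_BITS.items() if (edx >> bit) & 1}
    features |= {name for name, bit in ECX_BITS.items() if (ecx >> bit) & 1}
    return features


# Hypothetical register values for a small core without SSE4.x or AVX.
sample_edx = (1 << 0) | (1 << 23) | (1 << 25) | (1 << 26)
sample_ecx = (1 << 0) | (1 << 9)

features = decode_leaf1(sample_ecx, sample_edx)
print(sorted(features))
print("AVX path allowed:", "avx" in features)
```

An application that ships both a generic and an AVX code path would make exactly this kind of check once at startup and pick the path accordingly.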

Workload Acceleration and ISAs

Many datacenter workloads don’t need more than the 20-year-old core x86 ISA, albeit running at processor speeds of over 1.5GHz. But many do. High performance computing (HPC) has always demanded more performance than is available in the market; business intelligence (BI) and big data are following that track. And then there are specialized applications, like cryptography, render farms, remote desktops, and whatever needs to be processed via Hadoop and new parallel processing frameworks.

What’s really weird in this day and age is that, while software can query a processor in detail for its features, there is no standard way for a hypervisor to query runtime applications to figure out what target processor features an application was compiled for.

That mismatch is relatively easy to control if you’re in a walled garden. Say you’re operating your own cloud SaaS and you compiled all of your runtime code yourself, or you built a custom, homogeneous (because you probably bought it all at once) HPC cluster specifically to run your own clever apps (simulate a living cell, collide galaxies, blow stuff up, whatever). You can write to a specific ISA because you know what it is. It may permeate your entire HPC cluster or it may lurk in a group of purpose-configured racks behind your NoSQL database servers. But you know exactly where it is and what it can do.

For these reasons, HPC has always been an early adopter of new and different technologies. I’ll extend that behavior, for the same reasons, to SaaS providers who control their own software stack: they are in a good position to experiment with new processor ISAs and determine which workloads and specific applications can take advantage of them. Where HPC is actively interested in GPU ISAs and other parallel math acceleration solutions, SaaS vendors are interested in exploring operational efficiencies via rapidly evolving ARM ISAs.

However, on the other end of the spectrum, let’s say you want to rent compute cycles out as a private or public cloud to folks who have their own legacy applications. That’s a vastly different story.

Virtually There

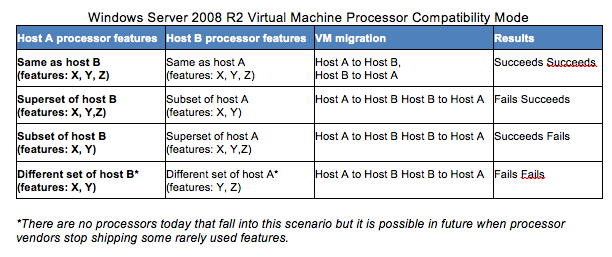

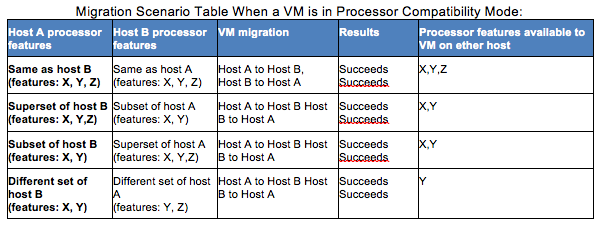

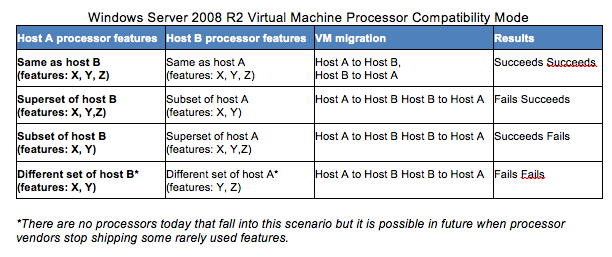

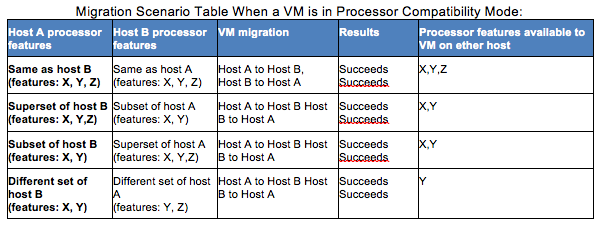

For a variety of reasons, most datacenters can’t buy all of the servers they will ever need all at once. So we’re stuck buying servers (with processors in them) in waves – whatever’s in our price-performance sweet spot when we need more. And that means that in a few short years we will have a bunch of differently-abled processors in our virtualized datacenter. It doesn’t really matter if they all came from one vendor, as I outlined above. As hypervisor vendors considered implementing live migration between processors in a virtual system, they didn’t want to specify an arbitrary feature set, because processor feature sets evolve on a different cadence than system software. So they designed the ability to “baseline” minimum common sets of processor features within a hypervisor’s scope. I’ve included Microsoft’s current schema here; the references at the end of this article point to the equivalent VMware and Citrix solutions.

Remember that none of this is predicated on the compile-time options in the runtime code bases – it is all dependent on reliably querying the underlying hardware infrastructure. A given application might not use any ISA features that differ from the original Pentium ISA, but the hypervisor has no way to know that. My takeaway here is that there is little room for a non-x86 ISA in a virtualized datacenter intended to run legacy applications. Heterogeneous x86 pools are too complicated as it is. Perhaps if a segment of the hosting industry evolved solely to serve newer virtual managed runtime stacks, so that all of the application code running under the hypervisor was not compiled for specific processor ISAs – that might work…
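The baselining idea boils down to a set intersection: a pool exposes to its virtual machines only the features that every host can deliver, and a guest can move to any host that offers at least what the guest was shown. Here is a minimal Python sketch of that logic. The host names, the feature lists, and the helper names `baseline` and `can_migrate` are hypothetical, and this is not how any particular hypervisor implements it.

```python
def baseline(hosts):
    """Return the features common to every host in the pool."""
    if not hosts:
        return frozenset()
    return frozenset.intersection(*(frozenset(f) for f in hosts.values()))


def can_migrate(exposed_to_vm, target_host_features):
    """A guest can move if the target offers everything the guest was shown."""
    return set(exposed_to_vm) <= set(target_host_features)


# Hypothetical pool bought in three waves, each with a richer feature set.
POOL = {
    "host-a": {"sse2", "sse3", "ssse3", "sse4_1"},
    "host-b": {"sse2", "sse3", "ssse3", "sse4_1", "sse4_2", "aes"},
    "host-c": {"sse2", "sse3", "ssse3", "sse4_1", "sse4_2", "aes", "avx"},
}

common = baseline(POOL)
print("pool baseline:", sorted(common))

# A guest that only ever saw the baseline can land on any host.
print(all(can_migrate(common, feats) for feats in POOL.values()))

# A guest that was shown host-c's full feature set is stuck on host-c.
print(can_migrate(POOL["host-c"], POOL["host-a"]))
```

Note the cost: the newest host’s extra features go unused by every guest in the pool, whether or not a given application could have benefited from them.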

Conclusion

Monopolies do not last forever. There are real opportunities for alternate ISAs to make inroads against x86 under specific – but not uncommon – conditions. And as application developers continue their trend of moving away from writing application code that needs to be compiled and toward managed code environments, that leaves even more of an opening to consider alternate processor ISAs. None of this factors in Intel’s or AMD’s ability to develop efficient mini-cores, a capability both have already demonstrated. Datacenter operators and hypervisor/managed environment developers must meet in the middle to take advantage of new silicon products. If they don’t, they risk being displaced by more efficient SaaS vendors who can do so.

Paul Teich is a contributing analyst at Moor Insights & Strategy. All tables copyright 2012 Moor Insights and Strategy Inc. and ProductLens LLC.

References:

- VMware Compatibility Guide

- Enhanced vMotion Compatibility (EVC) processor support

- Impact of Enhanced vMotion Compatibility on Application Performance

- Citrix XenServer 6.0 Quick Start Guide

- Understanding Heterogeneous CPU Pooling in XenServer 5.6