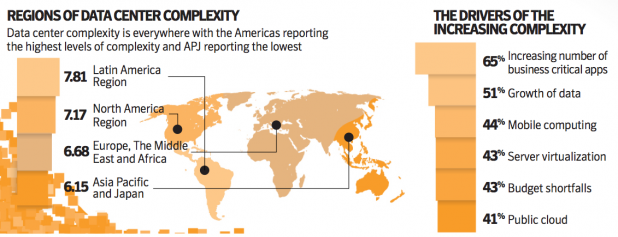

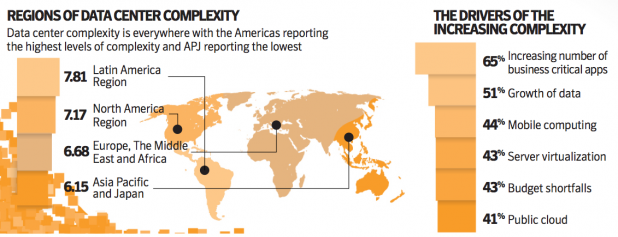

Symantec Blames Data Center Outages on Complexity

A typical organization experienced an average of 16 data center outages in the last 12 months, costing an average of $5.1 million, according to a new Symantec survey on the state of the data center. Systems failures, then human error, followed by natural disasters were the most common causes, according to the company's "State of the Data Center 2012" survey.

Symantec cited the “complexity” of data centers as a cause, with 44 percent of the survey's respondents blaming the rise of mobile devices. The solution? Information governance, Symantec claims. Ninety percent of companies have employed or are discussing information governance, according to its survey.

“As today’s businesses generate more information and introduce new technologies into the data center, these changes can either act as a sail to catch the wind and accelerate growth, or an anchor holding organizations back,” said Brian Dye, vice president of the Information Intelligence Group within Symantec. “The difference is up to organizations, which can meet the challenges head on by implementing controls such as standardization or establishing an information governance strategy to keep information from becoming a liability.”

Some 65 percent of those surveyed cited the emergence of more business-critical applications as a cause of complexity, making it the most common answer. Behind it came the growth of data (51 percent), mobile computing (44 percent), server virtualization (43 percent), budget shortfalls (43 percent) and the public cloud (41 percent).

To its credit, Symantec also included several anecdotes from participants in its focus group, adding some color to the findings. “You need new skill requirements; you need to keep up to date,” said one IT director for a healthcare organization. “Power and cooling requirements for the data center never go away. Even though you virtualize, there’s an amazing number of applications these days. These vendors want them to run on a single machine, so you run it on a single VM, but it just multiplies.” That IT director added: “We may be saving money with virtualization, but the more I talk about going to the cloud, that’s an expense.”

The top consequences of the increased complexity were increased costs (47 percent), longer lead times for storage migration (39 percent), reduced agility (39 percent), longer lead times for provisioning storage (38 percent) and more time spent finding information (37 percent). Other consequences cited included security breaches, lost or misplaced data, and general downtime.

Symantec's recommended remedy is for businesses to push toward information governance, which it describes as a “formal program that allows organizations to proactively classify, retain and discover information in order to reduce information risk, reduce the cost of managing information, establish retention policies and streamline their eDiscovery process.” The company also offered some additional advice: establish C-level ownership of information governance; understand what IT assets you have, how they are being consumed, and by whom; reduce the number of backup applications and minimize capital expenses and operating expenses; deploy de-duplication; and use appliances to simplify operations.

Symantec commissioned ReRez Research to field the survey, which was based on a total of 2,453 IT professionals at organizations in 32 countries; 250 apiece came from the United States and Canada.

Image: Symantec


