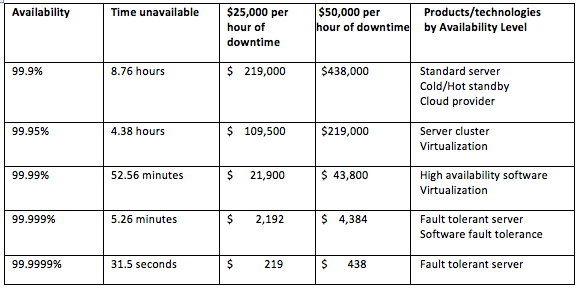

Time is money. Downtime is money, too. When business applications stop providing end users with services, the meter starts running. Depending on the application, costs may pile up rapidly and result in serious financial damage; for other applications, downtime costs may be inconsequential. Determining the value of applications to the business is the first step toward making informed technology choices that will deliver the appropriate level of availability for individual applications, or for a collection of applications running on virtual machines.

The ability of a chosen platform to protect applications from downtime is commonly expressed in “nines”: 99.9% (three nines), 99.999% (five nines), and so on. Applying this metric to the array of server technologies commonly used today tells you a lot about each solution’s ability to keep applications up and running, which is the focus of this article.

A number of reputable industry analyst firms peg the cost of an hour of IT system downtime for the average company at well above $100,000. The biggest cost culprits, of course, are the applications your company relies on most and would want up and running first after an outage. The principal measure of an application’s value is revenue impact. Lost sales, diminished productivity, wages, reputation damage, financial penalties, waste and scrap, customer dissatisfaction, and other factors can all contribute to the revenue impact of a failed application. Unearthing all of the contributors is the only way to arrive at your true cost and, by extension, the degree of uptime protection you need.

The “Cost of Downtime” chart shows average annual downtime for different levels of availability in 24/7 operation, and how much money that might cost given a certain downtime dollar value.

Cost of Downtime
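The arithmetic behind a chart of this kind is simple enough to sketch. The snippet below is a minimal illustration, not the chart's actual data: it assumes a 365-day year of 24/7 operation and uses a hypothetical figure of $100,000 per hour of downtime. The function names are illustrative.

```python
HOURS_PER_YEAR = 24 * 365  # 24/7 operation, non-leap year (assumption)


def annual_downtime_hours(availability_pct: float) -> float:
    """Expected hours of downtime per year at a given availability level."""
    return HOURS_PER_YEAR * (1 - availability_pct / 100)


def annual_downtime_cost(availability_pct: float, cost_per_hour: float) -> float:
    """Expected yearly downtime cost for a given hourly cost of downtime."""
    return annual_downtime_hours(availability_pct) * cost_per_hour


if __name__ == "__main__":
    cost_per_hour = 100_000  # hypothetical; substitute your own figure
    for pct in (99.0, 99.9, 99.95, 99.99, 99.999):
        hours = annual_downtime_hours(pct)
        cost = annual_downtime_cost(pct, cost_per_hour)
        print(f"{pct:>7}%  {hours:8.3f} h/year  ${cost:,.0f}")
```

Under these assumptions, 99.9% availability works out to about 8.76 hours of downtime a year (roughly $876,000), while 99.999% works out to a little over five minutes.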

The mapping between availability levels and solutions in the chart is not exact. A finely tuned and carefully managed cluster running a stable workload can achieve 99.99%, but that’s the exception. Virtualization can span availability levels, depending on which product capabilities are in play. Current virtual fault tolerance solutions are limited in their ability to support true mission-critical applications because of system overhead, lack of SMP support, and numerous configuration requirements. Hardware fault tolerance does not suffer from these limitations.

Vendors may attempt to sow confusion. For example, higher-end x86 servers may come with redundant power supplies or fans. Using the term fault tolerance to characterize the benefit of these duplicate components is a complete misnomer. Fault tolerance is not a range or a scale of uptime, as in “How fault tolerant is that server?” A server either is fault tolerant or it’s not; it’s a matter of architecture, not of marketing. Any availability solution below four nines, like those on the chart, should be used only for applications where periods of downtime are tolerable and the costs are manageable.


Traditional high-availability solutions: uptime level of 99.9% - 99.95%

Traditional high-availability solutions are designed to recover from failures quickly, “recover” being the operative word. The most common of these solutions is server clustering. Two or more servers are configured with cluster software. Each server node in the cluster requires its own OS license and often its own application license as well.

The servers communicate with each other by continually checking for a heartbeat that confirms the other servers in the cluster are available. If a server fails, its workload is moved to and restarted on another server in the cluster, designated as the failover server. This results in application downtime, and any data that has not been written to disk is lost. The size and nature of the applications determine how long it will take to restart and return to production.

A local area network (LAN) or wide area network (WAN) connects all the cluster nodes, which are managed by cluster software. Failover clusters require a storage area network (SAN) to provide the shared access to data that failover depends on. This means dedicated shared storage or redundant connections to the corporate SAN are necessary.

While high-availability clusters improve uptime, their effectiveness is highly dependent on the skills of specialized IT personnel. Clusters can be complex and time-consuming to deploy and manage; even minor changes may require programming, testing, and administrative oversight. As a result, the total cost of ownership (TCO) is often high.
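The heartbeat-and-failover behavior of a cluster can be illustrated with a toy model. This is a simplified sketch rather than how any particular cluster product works: the `Node` and `Cluster` classes, the three-second timeout, and the explicit `now` timestamps are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    last_heartbeat: float = 0.0
    workloads: list = field(default_factory=list)
    alive: bool = True


class Cluster:
    """Toy failover cluster: a silent node's workloads restart on the failover node."""

    def __init__(self, nodes, failover_name, timeout=3.0):
        self.nodes = {n.name: n for n in nodes}
        self.failover = self.nodes[failover_name]
        self.timeout = timeout  # seconds of silence before a node is declared failed

    def heartbeat(self, name, now):
        """Record that a node reported in at time `now`."""
        self.nodes[name].last_heartbeat = now

    def check(self, now):
        """Detect silent nodes and return the workloads restarted on the failover node."""
        restarted = []
        for node in self.nodes.values():
            if node is self.failover or not node.alive:
                continue
            if now - node.last_heartbeat > self.timeout:
                node.alive = False
                # The workload is restarted elsewhere; unsaved in-memory state is gone.
                restarted.extend(node.workloads)
                self.failover.workloads.extend(node.workloads)
                node.workloads = []
        return restarted


if __name__ == "__main__":
    cluster = Cluster([Node("a", workloads=["db"]), Node("b")], failover_name="b")
    cluster.heartbeat("a", now=1.0)
    print(cluster.check(now=2.0))  # node "a" is still within the timeout
    print(cluster.check(now=5.0))  # node "a" has been silent too long
```

The point of the model is that a failure is noticed only after the timeout expires and the workload must then restart on another node, which is where the downtime in a recovery-based design comes from.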

High-availability software solutions: uptime of 99.99%

The most advanced high-availability solutions are software designed to prevent downtime, data loss, and business interruption, with less complexity and lower cost than high-availability clusters. Not all are created equal. Among the key differences are the operating systems supported, the processing overhead consumed, monitoring and alerts, and price. The better ones are equipped with predictive features that automatically identify, report, and handle faults before they become problems and cause downtime. They support multiple operating systems and are simple and intuitive to use.

Two important features of advanced high-availability software are that it works with standard x86 servers and that it doesn’t require highly specialized IT staff to install or maintain. SANs are not required, which makes the system easier to manage and lowers an organization’s TCO. Advanced high-availability software is designed to configure and manage its own operation, making the setup of application environments easier and more economical.

There is a key difference between high-availability clusters and advanced high-availability software. Advanced HA software continuously monitors for issues in order to prevent downtime from occurring, whereas cluster solutions are designed to recover after a failure has already occurred. The most effective of these solutions provide more than 99.99% uptime, which translates to less than one hour of unscheduled downtime per year.

Fault-tolerant solutions: uptime of 99.999%

Fault-tolerant solutions, also referred to as continuous availability solutions, are not a cluster variant. Their architecture and treatment of failure events are distinct. Fault tolerance is achieved in a server by having a second set of completely redundant hardware components in the system. End users never experience an interruption in server availability because downtime is preempted.

The server’s software automatically synchronizes the replicated components, executing all processing in lockstep so that “in flight” data is always protected. The two sets of CPUs, RAM, motherboards, and power supplies all process the same information at the same time. If one component fails, the companion component is already there and operating, and the system keeps functioning.

Fault-tolerant servers also have built-in, failsafe software technology that detects, isolates, and corrects system problems before they cause downtime. This means that the operating system, middleware, and application software are protected from errors. In-memory data is also constantly protected and maintained.

A fault-tolerant server is managed exactly like a standard server, making the system easy to install, use, and maintain. No software modifications or special configurations are necessary, and the sophisticated back-end technology runs in the background, invisible to anyone administering the system. Even though the server is completely redundant, only one operating system license is required, and usually only one application license as well, which represents a large cost savings.
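The contrast with failover can be shown with a loose software analogy. Real lockstep is implemented in hardware and is far more involved than this; the sketch below only assumes two hypothetical replicas (`Replica`, `LockstepPair`) that apply every operation at the same time, so that losing one requires no restart and loses no in-memory state.

```python
class Replica:
    """One of two redundant units holding the same in-memory state."""

    def __init__(self, name):
        self.name = name
        self.healthy = True
        self.total = 0  # stands in for in-memory application state

    def apply(self, amount):
        if not self.healthy:
            raise RuntimeError(f"replica {self.name} has failed")
        self.total += amount
        return self.total


class LockstepPair:
    """Toy model: every operation runs on both replicas at once."""

    def __init__(self):
        self.replicas = [Replica("A"), Replica("B")]

    def apply(self, amount):
        results = []
        for replica in self.replicas:
            try:
                results.append(replica.apply(amount))
            except RuntimeError:
                continue  # the surviving replica simply carries on
        if not results:
            raise RuntimeError("both replicas failed")
        if len(set(results)) > 1:
            raise RuntimeError("replicas diverged")
        return results[0]


if __name__ == "__main__":
    pair = LockstepPair()
    print(pair.apply(10))
    pair.replicas[0].healthy = False  # simulate a component failure
    print(pair.apply(5))  # state carries over without a restart
```

Because both replicas already hold identical state, the caller sees the same running total before and after the simulated failure, with nothing to restart.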

Considering the Specialized Needs of a Virtualized Environment

High availability is an issue that most consolidated virtual environments face. After all, if a company puts all of its eggs (in this case, virtual servers) into one basket, extreme care must be taken not to drop that basket on the floor.

The virtualization software layer lets one physical server run multiple virtual machines. The organization saves money by consolidating a number of applications on the same physical server, and it gains an environment that is easier for IT staff to manage. The capital and operating costs of a data center can be significantly reduced with virtualization, often by a factor of four or greater.

Relying on virtualization, however, means that a greater number of applications are exposed to downtime. Features within virtualization software can increase redundancy and availability, but they cannot guarantee continuous operation. The physical server is still a potential single point of failure for all the virtual machines it supports. Applications cannot be migrated off a dead server. Therefore, when you consolidate dozens of virtual machines onto a single physical machine, that system becomes mission critical, even if the individual applications are not.

If you depend on a virtualized environment, it is crucial to employ a continuous availability strategy to ensure uptime and guarantee that virtual machines can continue to function. Many industry experts recommend using a fault-tolerant solution in a virtualized environment. According to Managing Editor Dr. Bill Highleyman of The Availability Digest, “Especially when the cost of downtime is considered, virtualized fault tolerance can bring continuous availability to a data center at a competitive cost and with no special administrative skills.”

I started this article by stating that downtime is money. A discussion of how to determine downtime cost is beyond our scope here. However, a comprehensive analysis to determine your cost of downtime, especially for an outage of your most critical business applications, is a wise first step in determining your availability requirements and your ROI.

Dave Laurello is president and CEO of Stratus Technologies (@stratus4uptime). He rejoined Stratus in January 2000, coming from Lucent Technologies, where he held the position of vice president and general manager of the CNS business unit.

Image: Arjuna Kodisinghe/Shutterstock.com