The Hadoop Summit, which took place June 13-14 in San Jose, Calif., was packed with attendees, with the exception of a session on B.I. integration hosted by Abe Taha, vice president of engineering at Karmasphere. That was a shame. Taha gave lots of reasons why Big Data and B.I. can peacefully co-exist, and are even complementary.

That might come as a mild shock to many who wrestle with massive datasets: the amount of data flooding organizations has become so enormous that it threatens to overwhelm the capabilities of many B.I. tools, even when the organizations in question start deploying data-crunching frameworks such as Hadoop.

With B.I., you're looking for the answers to particular questions; with Big Data, you don't even know what questions to ask. B.I. reports on the business, while Big Data can be used to optimize business operations. With B.I., workers need to set up schemas and structure in advance of actual analysis; with Big Data, analysis can be performed on the fly, with no thought of prior schemas. Combining the two could create a powerful system for any organization wrestling with how to best manage and analyze its data.

As Scott Cappiello, a director of program management for traditional B.I. vendor MicroStrategy, told me: "We want to supplement and complement B.I., and not rip out data warehouses." (Of course, the company also sells data warehouses, which gives it something of a vested interest in their survival.) Nevertheless, he fully expects that "every one of our customers will have Hadoop in their infrastructure eventually; it will be in the mix."

Taha had several suggestions on how to make sure that your Big Data project can be integrated into a traditional B.I. shop, and they are worth reviewing here:

Leave no data behind. Storage is cheap: why not make use of it? One company at the Summit told me that a major retailer was now storing ten years' worth of store-by-store data, just because it could. A silicon chipmaker used its huge collection of signal-monitoring data from its QA processes to predict chip failures, saving the company tons of money. This wouldn't have been possible with traditional databases. You never know if someone is going to come up with a problem or a question that can tap this treasure trove.

Use all of your analytic assets. Hadoop has its Hive add-on, but you don't have to devote yourself to just the Hadoop world: pick up whatever assets you already use in your data warehouse and other places, and see if you can make use of them in your Big Data situations.

Provide all users some kind of self-service portal to do their own analysis. Karmasphere and Datameer, for example, both offer tools that make ad hoc analysis an easier proposition. Datameer even uses a spreadsheet-like display for people more comfortable with rows and columns. But realize that you will have to provide some structure to your analysis eventually.

Build a collaborative environment. You want to encourage users with varying skill sets to participate in your Big Data analysis, and be able to cross-pollinate their ideas with the traditional B.I. methods and perspectives. Make it easy to do queries with a combination of Web-based forms and tools with user-friendly elements such as drag-and-drop. Leverage your existing SQL skill sets to make things as familiar and comfortable as possible to your B.I. team members, too.

Be as open and as extensible as possible. The more APIs you can document and make available to your users, the better. Some of the Big Data products, like Kognitio, expose their data through ODBC and JDBC interfaces to make their interaction with the traditional B.I. side easier. Microsoft offers add-on products that enable Excel and SQL Server to analyze Hadoop data and store it on its Azure cloud services. (And there is an excellent tutorial on how to use R with Hadoop by David Smith.)

Use the best-of-breed reporting tools. Cappiello suggests that, in order to have the right tool for the job, an organization should take the time to examine different Hadoop distributions. Don't be afraid to experiment with Big Data, he added: "The cost is low." With Big Data, you can quickly deploy lots of machine resources when you need them. For example, Netflix, which is a big Hadoop shop, sets up special clusters for answering specific queries, so as not to impact its production clusters.

"We need to focus on the endgame and make sure that the solutions we create are easier to consume and understand for business users," Scott Gnau, the president of Teradata Labs, said at one of the Hadoop conference keynotes. "We have to figure out how to make enterprise adoption easier and find repeatable reference solutions that can drive immediate business value."

Or as one Big Data engineer from Netflix told me: "It isn't hard to make the transition to Hadoop. [You] just have to think differently about your data."
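To make the schema contrast drawn earlier a little more concrete, here is a minimal Python sketch. It is only an illustration under stated assumptions: the sample records, the `sales` table, and the function names are all hypothetical, and an in-memory SQLite database stands in for a data warehouse while a plain pass over raw JSON lines stands in for a Hadoop-style job. Nothing here comes from Taha's session or from any vendor's product.

```python
import json
import sqlite3
from collections import Counter

# Hypothetical raw event log: one JSON object per line; fields vary by record.
RAW_EVENTS = """\
{"store": "A", "item": "widget", "amount": 12.5}
{"store": "B", "item": "gadget", "amount": 7.0, "coupon": "SPRING"}
{"store": "A", "item": "gadget", "amount": 7.0}
"""


def load_warehouse(lines):
    """B.I. style: declare the schema first, then load rows that fit it."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE sales (store TEXT, item TEXT, amount REAL)")
    for line in lines:
        rec = json.loads(line)
        # Fields outside the declared schema (such as "coupon") are dropped.
        conn.execute(
            "INSERT INTO sales VALUES (?, ?, ?)",
            (rec["store"], rec["item"], rec["amount"]),
        )
    return conn


def revenue_by_store(conn):
    """A question known in advance, answered with plain SQL."""
    rows = conn.execute(
        "SELECT store, SUM(amount) FROM sales GROUP BY store ORDER BY store"
    )
    return dict(rows.fetchall())


def count_field(lines, field):
    """Big Data style: keep the raw records and impose structure at read time."""
    counts = Counter()
    for line in lines:
        rec = json.loads(line)
        if field in rec:
            counts[rec[field]] += 1
    return counts


if __name__ == "__main__":
    lines = RAW_EVENTS.splitlines()
    print(revenue_by_store(load_warehouse(lines)))
    # A question nobody planned for when the warehouse table was designed:
    print(count_field(lines, "coupon"))
```

The warehouse path answers the planned question cheaply with SQL, but the coupon field never made it into the table; the raw-records path can still answer the unplanned question because nothing was discarded at load time. That is one reading of why the two approaches complement each other.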

B.I. and Big Data Can Play Together Nicely

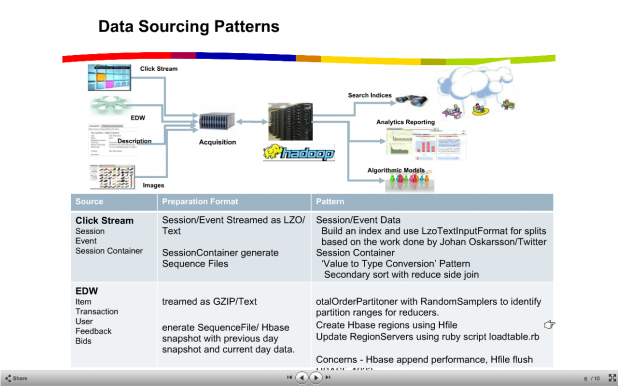

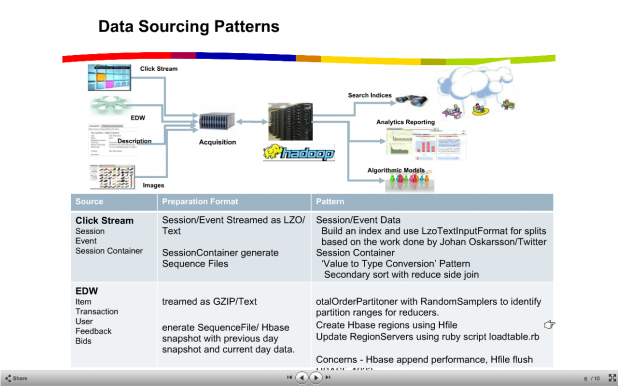

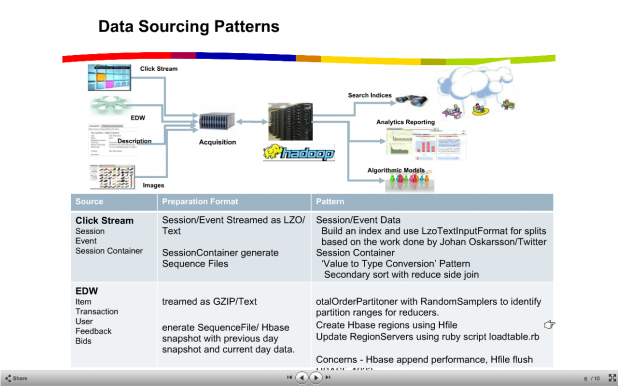

An example of the complexity of data analytics, in the case of eBay. From a presentation by eBay's Shalini Madan (slideshare.net/madananil).
