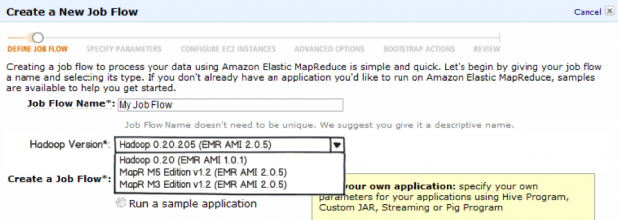

The world of Hadoop can be a confusing one. Several commercial vendors (as well as numerous open source projects) offer products that extend the framework's Big Data capabilities. But there are efforts underway to make Hadoop more suitable for large-scale business deployments, including the addition of integral elements such as high availability, referential integrity, failover, and the like. Here are some pointers for starting (and understanding) a Hadoop enterprise deployment.

Don't get hung up on version numbers and customer wins. If you're expecting a world where products come out with regular, well-tested releases, think again. Many Hadoop vendors have fewer than 100 paying customers, and their products are still at versions that begin with 0, not yet at 1.0. Don't let that bother you: some, like MapR, have done seven-figure deals. AWS has more than a million Hadoop clusters already. Others, including eBay, Netflix and Twitter, rely on Hadoop as the basis of their IT infrastructure.

A good starting point is a pre-packaged enterprise distribution of Hadoop from Cloudera, Hortonworks (whose Data Platform was announced earlier in June), or MapR. Hortonworks includes several open-source projects such as Ambari monitoring and management, metadata services from HCatalog, Talend Open Studio for data integration, and other components to make setting up a full installation easier. Cloudera is on version four of its suite of tools.

You need to understand the implications of scaling to huge data repositories. Yes, one of the attractions of Hadoop is that it can analyze a lot of data. But that much data demands different thinking: one key decision is whether to keep it on premises or move it to the cloud. Many of the largest Hadoop installations, such as those at eBay and Yahoo, run on their own servers (Yahoo has a cluster of over 42,000 servers). But others, including Netflix, make use of Amazon Web Services to run the business.
Netflix stores one petabyte of data on Amazon's S3 at any given moment; Adam Gray, a senior product manager for Amazon, told attendees at the Hadoop Summit in June that his company tracks 50 billion daily events using other Amazon services such as Elastic MapReduce.

One reason to stick with an on-premises installation is keeping your data secure. Vendors such as Dataguise are trying to add a better security layer to Hadoop with a centralized detection and control tool that tracks sensitive data in motion or at rest. The tool can store unprotected and protected data in the same Hadoop implementation, or quarantine the sensitive data in a separate cluster. It will also mask sensitive data elements, such as a Social Security number, while they are in transit.

Gray also spoke about the use of different computing clusters for particular tasks. One of the nice things about cloud-based computing is that you can spin up a new instance when you need more processing, so having a cluster devoted to a particular query isn't totally out of line. Unlike a SQL database, Hadoop is ad hoc by nature: the framework doesn't require a data schema before the data is written. This is great for those digital pack rats who just want to suck everything into data storage and worry about how to structure it later.

Don't worry about resources; you can always grab more when you need them. In the traditional BI world, you had to ensure your database was sized to the processing power and storage you had at hand. Now the cloud makes things more flexible, and you can add servers dynamically when you need more horsepower. For example, Netflix's peak times are Saturday nights, so it automatically brings up new clusters to handle the additional load. "We can turn on new capacity when we need it in a moment, and automatically too," said one Netflix engineer at the Hadoop Summit. "No one but AWS could handle that kind of capacity."
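
To make the earlier point about schemas concrete, here is a minimal sketch of the "store first, structure later" idea in plain Python. It does not use Hadoop itself; the sample records, the field names, and the `read_with_schema` helper are all hypothetical and exist only for illustration.

```python
import json

# Raw events are stored exactly as they arrive; nothing checks their shape
# at write time. (Hypothetical sample data, not taken from any real system.)
raw_lines = [
    '{"user": "a", "title": "Movie 1", "minutes": 42}',
    '{"user": "b", "device": "tv"}',
    'not even json',
]


def read_with_schema(lines, fields):
    """Apply a schema at read time.

    Keeps only the requested fields, fills missing ones with None,
    and skips lines that cannot be parsed as JSON objects.
    """
    rows = []
    for line in lines:
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # unparseable input is ignored, not rejected at write time
        if not isinstance(record, dict):
            continue
        rows.append({name: record.get(name) for name in fields})
    return rows


# The "schema" is chosen by the query, long after the data was written.
rows = read_with_schema(raw_lines, ["user", "minutes"])
print(rows)
```

A different query could read the same raw lines with a different field list, which is the flexibility the pack-rat approach buys; the cost is that malformed or incomplete records only surface when someone reads them.
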
One new aspect of on-demand scaling came earlier this month, when VMware announced a new open-source project, Serengeti, to help deploy Hadoop in virtual and hybrid cloud environments. This will make it easier to bring up new instances of Hadoop using the existing VMware management tools.

Keep track of Amazon's Hadoop-related offerings. Amazon is constantly improving its support of Hadoop; earlier this month, the company announced it will offer two different editions of MapR as part of the Elastic MapReduce service (pictured at the top of this article). Both the M3 and M5 editions are available: M3 is free, while M5 carries an hourly surcharge. MapR is a special version of Hadoop that offers high availability, snapshot data recovery, mirroring, and better performance than other Hadoop distributions. All of these could prove attractive for enterprise installations.

As you can see, the Hadoop world is still evolving and moving rapidly, with many different add-ons and products.