Hadoop Emerges as Application Development Platform

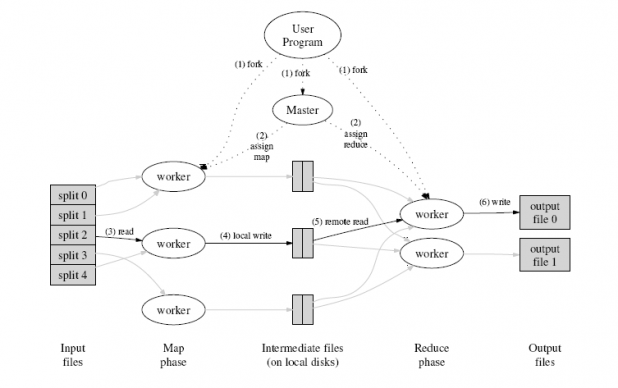

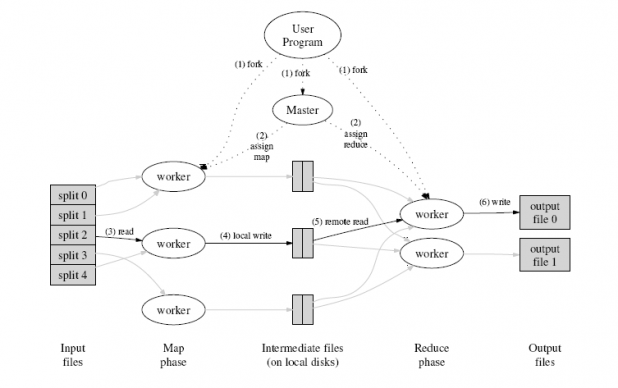

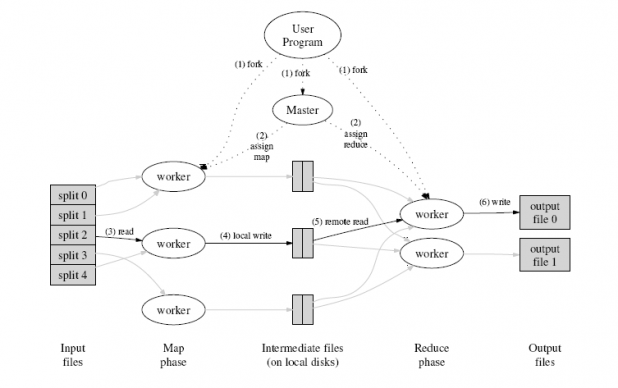

Companies want to leverage Hadoop for applications, but working with MapReduce offers headaches for some IT pros.

Hadoop may have started out life as a comparatively inexpensive way to store a lot of data, but it’s quickly evolving into a platform for developing applications. As a framework for processing massive amounts of unstructured data, Hadoop has a lot of fans. But for end users to access that data, it still needs to be transferred across networks, which can become a prohibitively expensive proposition. So rather than transfer data across a network in order for applications to make use of it, a lot of folks are beginning to think in terms of bringing applications to the data.

“We’ve already seen a number of higher-level frameworks for building applications on top of Hadoop,” said Omer Trajman, vice president of technical solutions for Cloudera, a provider of a Hadoop distribution. “And based on what we’re hearing from our venture capital friends, there will be a lot more.”

However, working with Hadoop on any level presents some challenges, starting with the MapReduce interface used to access Hadoop data. Not only is there a shortage of people who know how to work with MapReduce, but it is also a fairly low-level construct that many IT organizations find too cumbersome to use. This has given rise to any number of approaches, such as Hive and HBase, that allow developers to make use of more familiar interfaces such as SQL (designed to handle structured data residing in a relational database) to access Hadoop data.

Companies such as Concurrent have also created alternatives to working with MapReduce. Concurrent this week released Cascading 2.0, a framework that makes it easier for Java developers to invoke Hadoop. “Cascading 2.0 is designed to make it easier for Java developers to work with complex data flows using Hadoop,” said Concurrent CEO Chris Wensel.

One of the most ambitious application development efforts for Hadoop comes from EMC Greenplum. This unit of EMC just launched Greenplum Analytics Workbench, an implementation of Hadoop in the cloud that developers can leverage to create applications. That effort comes on the heels of the launch of Chorus, a framework for facilitating the collaborative development of Hadoop applications, and the acquisition of Pivotal Labs, a firm that builds applications on behalf of corporate customers.

Other vendors providing application frameworks for Hadoop include Karmasphere, a provider of application development tools for Hadoop that include support for SQL, and VMware, which has integrated the Spring development environment with Hadoop.

According to Milind Bhandarkar, chief architect of Greenplum Labs, it’s much better to build applications directly on Hadoop, if for no other reason than that the extract, transform and load (ETL) process can wind up consuming as much as 40 to 50 percent of the processing time in Hadoop environments. Rather than moving the data to the applications, he said, a lot of people are deciding that it’s much better to move the applications to the data. MapReduce, he added, is a fairly primitive interface that traces its lineage back to LISP programming models, so it’s unsurprising that organizations are looking for ways to abstract that complexity.

David Nichols, partner and CIO services leader for the Americas at the IT consulting firm Ernst & Young, suggests that the biggest issue with any Big Data project is the need for processes that ensure an organization knows what it wants from its Big Data investments. That usually requires hiring a data scientist to help define the parameters of the project, Nichols advises.

“In some respects the challenge with data has always been that IT is looking for the needle in the haystack,” he said. “But now we’re not even completely sure that it’s a needle that we’re actually looking for.”

Metaphorical needles aside, Hadoop and its associated applications can help those businesses and data scientists put in-house data to the best possible use.

Image: Google
