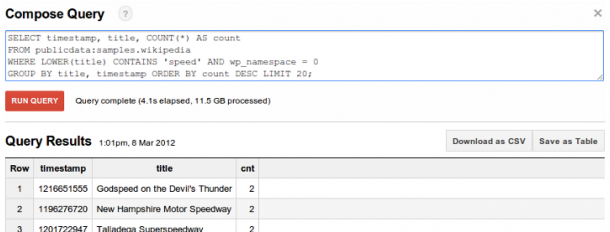

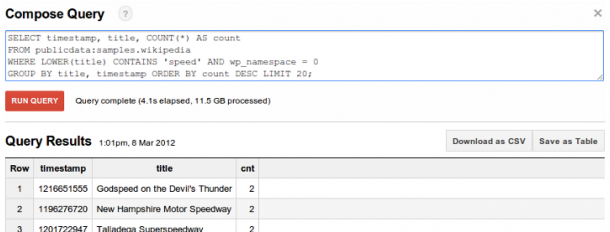

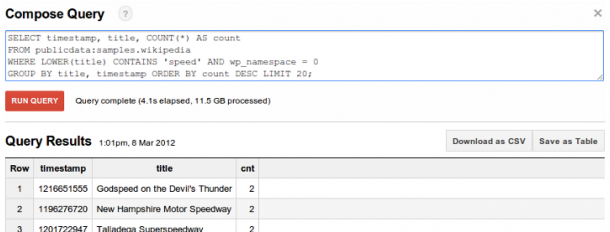

Google's BigQuery Could Help Democratize Big Data

Google's BigQuery: Making the banana stand more efficient than ever. (Image: Google)

Google’s BigQuery, rolled out to the general public May 1 after months of limited preview, has the potential to greatly alter the competitive landscape for B.I. vendors. The new cloud service lets users apply what Google calls a “SQL-like query language” to analyze massive amounts of data (the company claims billions of rows in seconds). It features secure SSL access, group- and user-based permissions via Google accounts, and the capability to scale to trillions of records. It is meant to handle massive amounts of data better than Google Cloud SQL, the company’s hosted MySQL instance.

According to a May 1 posting on the Official Google Enterprise Blog, analysts and developers can access BigQuery through a simple UI or REST interface. The first 100GB of data per month is free; after that, storage costs $0.12 per GB per month (with a limit of 2TB) and queries cost $0.035 per GB processed. The platform’s API Overview and General Usage Guide can be found on the Google Developers website.

A BigQuery “Premier offering” will support “significantly larger volumes” beyond those mentioned in the pricing scheme; that being said, organizations wrestling with petabytes of data might end up looking for other ways to meet their analysis needs.

“There’s Big Query, and then there’s Bigger Query,” Ray Wang, principal analyst and CEO of Constellation Research, said in an interview. “In Google World, BigQuery is an enterprise play, which to them means more than 15 people involved. They’re democratizing big data for smaller companies.” At the same time, he added, an enterprise likely won’t rely on something along the lines of BigQuery for storing its customer log files or other data: “It’s not a service for that market.” Other Google offerings, namely its MapReduce framework, are better suited for extra-large data sets. Instead, BigQuery might prove more suitable for departments or smaller business units looking to digest smaller amounts of information.

For the aforementioned smaller companies, though, BigQuery could prove a time-saver. Analytics firm Claritics, one of BigQuery’s early testers, claimed in a corporate blog posting that using SQL-like commands via a RESTful API allows the user to explore and analyze data more quickly. “One of the challenges of running an analytics company like ours, where we’re dealing with terabytes of big data, is managing the horsepower required to crunch such enormous volumes of data in real-time,” read that posting. BigQuery lets Claritics “process billions of rows of social and mobile game events data in mere seconds.”

BigQuery could end up challenging on-premises offerings from IBM, as well as Amazon Web Services (AWS). “The storage part of BigQuery is priced just below AWS ($0.120 per GB vs. $0.125), and Google’s arriving at market before Amazon launches a similar offering,” Charles King, principal analyst of Pund-IT, wrote in an email. “The larger question is whether an online service like BigQuery can undercut dedicated solutions, and for the time being I’d say it’s unlikely.”

King agreed with Wang about BigQuery’s effect on smaller companies. “Over time, if Google can prove the value of BigQuery, it could revolutionize the market by democratizing access to Big Data,” he wrote, “allowing businesses and organizations of every size to enjoy the benefits of a technology that’s largely relegated to well-heeled companies today.”
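To make the launch pricing quoted above concrete, here is a rough sketch of a monthly cost estimate. It rests on several assumptions that the article does not spell out: that the free 100GB applies to query data processed, that storage is billed at $0.12 per GB per month, and that 1TB equals 1,024GB. The function name `estimate_monthly_cost` is purely illustrative and is not part of any Google API.

```python
# Rates as quoted in the article; how they combine is an assumption.
FREE_QUERY_GB = 100.0            # assumed to apply to query data processed
STORAGE_USD_PER_GB_MONTH = 0.12  # assumed to be per GB per month
QUERY_USD_PER_GB = 0.035         # per GB processed
STORAGE_LIMIT_GB = 2 * 1024      # 2TB cap, assuming 1TB = 1,024GB


def estimate_monthly_cost(stored_gb: float, queried_gb: float) -> float:
    """Return an estimated monthly bill in US dollars (hypothetical helper)."""
    if stored_gb < 0 or queried_gb < 0:
        raise ValueError("volumes must be non-negative")
    if stored_gb > STORAGE_LIMIT_GB:
        raise ValueError("storage exceeds the 2TB limit of the standard pricing")

    storage_cost = stored_gb * STORAGE_USD_PER_GB_MONTH
    # Only query volume beyond the free allowance is billed.
    billable_query_gb = max(0.0, queried_gb - FREE_QUERY_GB)
    query_cost = billable_query_gb * QUERY_USD_PER_GB
    return round(storage_cost + query_cost, 2)


if __name__ == "__main__":
    # 500GB stored, 1,000GB scanned by queries in one month.
    print(estimate_monthly_cost(500, 1000))
```

Under these assumptions, a small team storing 500GB and scanning 1,000GB in a month would pay $60.00 for storage plus $31.50 for the 900 billable query gigabytes, which illustrates why analysts see the service as a fit for departments and smaller companies.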
